Introduction

In the world of web scraping services, data quality is everything. Businesses rely on scraped datasets for insights into pricing, competition, customer behavior, and product trends. However, one of the biggest challenges during data collection is incomplete or missing information. This is where the best techniques for dealing with missing values in scraped data become essential. Missing values can occur due to broken HTML elements, inconsistent website structures, API limitations, or access restrictions.

If not handled properly, these gaps can distort analytics, reduce model accuracy, and lead to poor decision-making. On the other hand, applying the right strategies, such as imputation, interpolation, or intelligent data replacement, can transform flawed datasets into reliable assets. This guide explores proven methods to handle missing values effectively, ensuring your scraped data remains accurate, consistent, and ready for advanced analytics and product development.

Building Strong Foundations for Data Pipelines

Handling null values starts at the data pipeline level. A well-designed pipeline ensures that missing values are detected, flagged, and processed efficiently before they impact downstream systems. Techniques such as validation rules, schema checks, and automated alerts help identify inconsistencies early.
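As a rough illustration of validation rules and schema checks, the sketch below flags missing or mistyped fields in a single scraped record. The field names in `REQUIRED_FIELDS` and the placeholder strings in `MISSING_MARKERS` are assumptions chosen for the example, not a prescribed schema.

```python
from typing import Any

# Hypothetical schema for illustration: field name -> expected type.
REQUIRED_FIELDS = {"title": str, "price": float, "url": str}

# Placeholder strings that scraped pages often show instead of a real value
# (an assumed list; extend it for the sites you actually scrape).
MISSING_MARKERS = {"", "n/a", "na", "null", "none", "-"}


def is_missing(value: Any) -> bool:
    """Treat None and common placeholder strings as missing."""
    if value is None:
        return True
    if isinstance(value, str):
        return value.strip().lower() in MISSING_MARKERS
    return False


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems found in one scraped record."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        value = record.get(field)
        if is_missing(value):
            problems.append(f"{field}: missing")
        elif not isinstance(value, expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")
    return problems
```

A pipeline could route any record with a non-empty problem list to a review queue or an alerting step rather than passing it downstream.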

From 2020 to 2026, organizations investing in robust pipelines have significantly improved data quality and reliability.

| Year | Companies Using Automated Pipelines (%) | Data Quality Improvement (%) |
|---|---|---|
| 2020 | 38 | 55 |
| 2022 | 50 | 65 |
| 2024 | 63 | 74 |
| 2026 | 78 | 86 |

Modern pipelines integrate preprocessing steps such as null value detection, conditional replacements, and fallback mechanisms. For example, if product prices are missing, systems can pull values from alternative sources or historical datasets. Automation reduces manual intervention while ensuring consistency across large-scale operations. Businesses that prioritize strong pipeline architecture can minimize data loss, improve processing speed, and maintain high-quality datasets for analytics and decision-making.
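The fallback idea for missing prices can be sketched as a simple priority chain. The function name `resolve_price`, its parameters, and the origin labels are hypothetical; the sketch also assumes the historical list is ordered oldest to newest.

```python
from typing import Optional


def resolve_price(
    scraped: Optional[float],
    alternate: Optional[float],
    history: list[float],
) -> tuple[Optional[float], str]:
    """Return (price, origin) using the first source that has a value."""
    if scraped is not None:
        return scraped, "scraped"
    if alternate is not None:
        return alternate, "alternate_source"
    if history:
        # Assumption: the most recent historical observation is last.
        return history[-1], "historical"
    # Nothing usable: keep the gap explicit instead of inventing a value.
    return None, "unresolved"
```

Returning the origin alongside the value lets downstream analytics distinguish freshly scraped prices from substituted ones.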

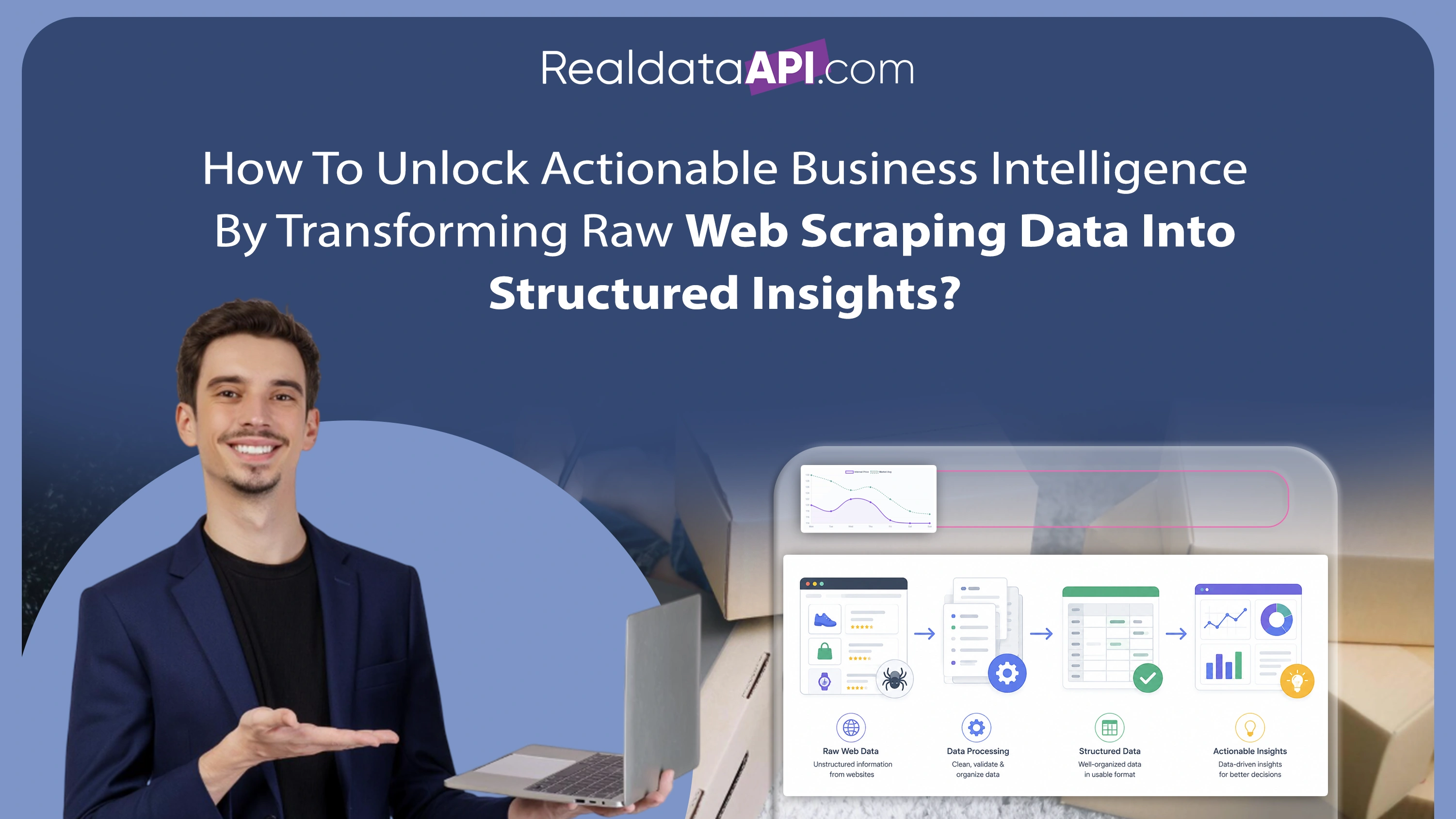

Transforming Raw Data into Usable Insights

Raw scraped data is rarely perfect. Cleaning incomplete scraped datasets is a crucial step in ensuring usability and accuracy. This process involves removing duplicates, standardizing formats, and filling in missing values using statistical or machine learning techniques.

Between 2020 and 2026, advancements in data cleaning tools have significantly improved efficiency.

| Year | Data Cleaning Automation (%) | Error Reduction (%) |
|---|---|---|
| 2020 | 35 | 50 |
| 2022 | 47 | 62 |
| 2024 | 60 | 72 |
| 2026 | 75 | 85 |

Common methods include mean/median imputation, forward and backward filling, and predictive modeling. For ecommerce data, missing attributes like product descriptions or ratings can be inferred using similar product data. Data cleaning not only improves dataset quality but also enhances the performance of analytics models. By investing in advanced cleaning techniques, businesses can ensure their data is both accurate and actionable, leading to better insights and improved operational efficiency.
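Two of the methods named above, median imputation and forward filling, can be sketched with the Python standard library alone. The function names are illustrative, and missing values are assumed to be represented as `None`.

```python
from statistics import median
from typing import Optional, Sequence


def impute_median(values: Sequence[Optional[float]]) -> list[Optional[float]]:
    """Replace None with the median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        return list(values)  # nothing to learn from; leave the gaps as they are
    fill = median(observed)
    return [fill if v is None else v for v in values]


def forward_fill(values: Sequence[Optional[float]]) -> list[Optional[float]]:
    """Carry the last observed value forward; leading gaps stay None."""
    filled = []
    last = None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


ratings = [4.0, None, 5.0, 3.0]
daily_prices = [None, 10.0, None, None, 12.0]
print(impute_median(ratings))      # [4.0, 4.0, 5.0, 3.0]
print(forward_fill(daily_prices))  # [None, 10.0, 10.0, 10.0, 12.0]
```

Median imputation tends to suit unordered numeric fields such as ratings, while forward filling only makes sense for ordered series such as a daily price history.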

Real-Time Strategies for Dynamic Data Environments

In fast-moving industries like ecommerce, real-time data processing is critical. Real-time missing data handling in scraping ensures that datasets remain accurate even as new data is continuously collected. Techniques such as streaming data validation, real-time imputation, and automated fallback systems are widely used.

From 2020 to 2026, real-time data handling has seen rapid adoption.

| Year | Real-Time Processing Adoption (%) | Data Freshness Improvement (%) |
|---|---|---|
| 2020 | 30 | 45 |
| 2022 | 42 | 58 |
| 2024 | 55 | 70 |
| 2026 | 70 | 82 |

For example, if a product's price is missing during scraping, real-time systems can fetch the value from cached data or alternate sources instantly. This ensures minimal disruption to analytics workflows. Real-time handling is especially important for dynamic pricing, inventory tracking, and competitive analysis. Businesses that implement these strategies gain a significant advantage by maintaining up-to-date and reliable datasets.
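A cached-value fallback of this kind might look like the following sketch. The `PriceCache` class and its `max_age_seconds` staleness limit are assumptions made for illustration; a production system would more likely use a shared cache service than an in-process dictionary.

```python
import time
from typing import Optional


class PriceCache:
    """Keeps the last seen price per product and serves it while still fresh."""

    def __init__(self, max_age_seconds: float) -> None:
        self.max_age_seconds = max_age_seconds
        # product_id -> (price, timestamp when it was last observed)
        self._entries: dict[str, tuple[float, float]] = {}

    def observe(
        self,
        product_id: str,
        price: Optional[float],
        now: Optional[float] = None,
    ) -> tuple[Optional[float], bool]:
        """Handle one scrape event and return (price, was_imputed)."""
        now = time.time() if now is None else now
        if price is not None:
            self._entries[product_id] = (price, now)
            return price, False
        cached = self._entries.get(product_id)
        if cached is not None and now - cached[1] <= self.max_age_seconds:
            return cached[0], True  # fall back to the cached value
        return None, False  # no cached value, or it is too old to trust
```

The staleness limit matters for dynamic pricing: serving an hours-old cached price as if it were current can be worse than reporting the gap.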

Ensuring Consistency Across Large-Scale Projects

Large-scale scraping projects often involve multiple data sources, formats, and structures, making consistency a major challenge. Managing incomplete data in web scraping projects requires a combination of standardized processes, automated tools, and continuous monitoring.

Between 2020 and 2026, organizations have improved their ability to manage incomplete data effectively.

| Year | Large-Scale Data Handling Efficiency (%) | Consistency Improvement (%) |
|---|---|---|
| 2020 | 40 | 55 |
| 2022 | 52 | 66 |
| 2024 | 65 | 75 |
| 2026 | 79 | 88 |

Techniques such as data normalization, schema alignment, and cross-source validation help ensure consistency. For instance, missing product attributes from one source can be supplemented using data from another platform. Continuous monitoring systems detect anomalies and trigger corrective actions. By implementing these strategies, businesses can maintain high-quality datasets across large-scale operations, enabling accurate analytics and better decision-making.
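Supplementing one source from another, together with a basic cross-source check, can be sketched as below. It assumes both records have already been normalized to the same field names; `supplement`, `conflicts`, and the `_filled_from_secondary` provenance key are hypothetical names.

```python
from typing import Any


def supplement(primary: dict[str, Any], secondary: dict[str, Any]) -> dict[str, Any]:
    """Fill missing fields in primary from secondary and record which were filled."""
    merged = dict(primary)  # copy so the original record is left unchanged
    filled = []
    for field, value in secondary.items():
        if merged.get(field) is None and value is not None:
            merged[field] = value
            filled.append(field)
    merged["_filled_from_secondary"] = sorted(filled)
    return merged


def conflicts(primary: dict[str, Any], secondary: dict[str, Any]) -> list[str]:
    """List fields where both sources have a value but the values disagree."""
    shared = primary.keys() & secondary.keys()
    return sorted(
        f for f in shared
        if primary[f] is not None
        and secondary[f] is not None
        and primary[f] != secondary[f]
    )
```

Fields reported by `conflicts` are candidates for the anomaly alerts mentioned above, since a silent overwrite would hide the disagreement.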

Supporting Innovation Through Better Data Practices

High-quality data plays a crucial role in product development. Missing values can hinder innovation by providing incomplete or misleading insights. By applying effective data handling techniques, businesses can ensure that their datasets support accurate analysis and decision-making.

From 2020 to 2026, the impact of clean data on product development has been significant.

| Year | Data-Driven Product Development (%) | Innovation Success Rate (%) |
|---|---|---|
| 2020 | 45 | 60 |
| 2022 | 58 | 70 |
| 2024 | 70 | 80 |
| 2026 | 82 | 90 |

For example, complete product data enables better market analysis, customer segmentation, and feature optimization. Missing values in critical fields like pricing or specifications can lead to incorrect conclusions. By addressing these gaps, businesses can improve the accuracy of their insights and drive innovation. Clean data ensures that product development strategies are based on reliable information, leading to better outcomes and increased competitiveness.

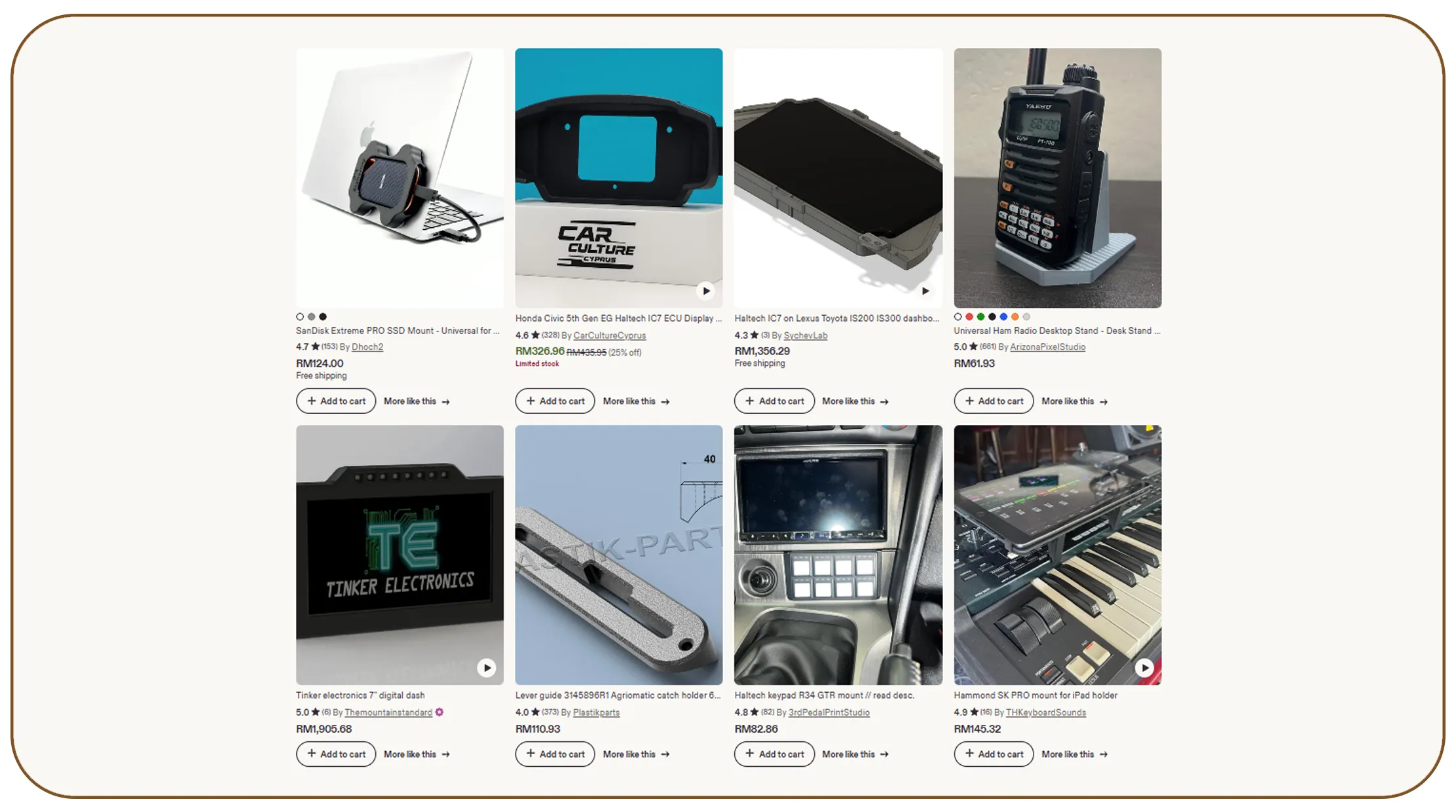

Achieving Accurate Data Alignment Across Platforms

Product matching relies heavily on complete and accurate data. Missing values can lead to incorrect matches, duplication, or missed opportunities. By addressing these gaps, businesses can improve matching accuracy and ensure consistent product catalogs.

Between 2020 and 2026, improvements in data handling have significantly enhanced product matching performance.

| Year | Matching Accuracy (%) | Duplicate Reduction (%) |
|---|---|---|
| 2020 | 68 | 52 |
| 2022 | 75 | 63 |
| 2024 | 83 | 74 |
| 2026 | 90 | 86 |

Techniques such as attribute enrichment, similarity scoring, and machine learning models help improve matching accuracy. For instance, missing product attributes can be inferred using data from similar items. Accurate product matching is essential for price comparison, inventory management, and customer experience. Businesses that prioritize complete and consistent data can achieve better alignment across platforms, leading to improved operational efficiency and customer satisfaction.
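One way to keep missing attributes from skewing similarity scoring is to score only the fields present in both records and renormalize the weights. The sketch below uses `difflib.SequenceMatcher` from the standard library; the attribute names and the weights in `WEIGHTS` are assumed values for illustration, not tuned parameters.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical attribute weights chosen for the example.
WEIGHTS = {"title": 0.6, "brand": 0.3, "color": 0.1}


def text_similarity(a: str, b: str) -> float:
    """Case-insensitive string similarity between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_score(left: dict, right: dict) -> Optional[float]:
    """Weighted similarity over the attributes present in both records."""
    total = 0.0
    weight_used = 0.0
    for field, weight in WEIGHTS.items():
        a, b = left.get(field), right.get(field)
        if a is None or b is None:
            continue  # a missing attribute neither helps nor hurts the score
        total += weight * text_similarity(a, b)
        weight_used += weight
    if weight_used == 0:
        return None  # no comparable attributes, so no score can be given
    return total / weight_used
```

Returning `None` when nothing is comparable keeps "unknown" separate from "no match", which a plain score of zero would blur.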

Why Choose Real Data API?

Real Data API provides advanced solutions for handling incomplete and inconsistent datasets through its powerful Web Scraping API. With features like automated data cleaning, real-time processing, and intelligent imputation, businesses can ensure high-quality data for analytics and decision-making. The platform is designed to handle large-scale operations efficiently, making it an ideal choice for companies looking to optimize their data workflows and achieve reliable results.

Conclusion

Handling missing values is a critical aspect of data scraping that directly impacts the quality and reliability of your insights. By applying the best techniques for dealing with missing values in scraped data, businesses can transform incomplete datasets into valuable assets. From pipeline optimization to real-time processing and advanced matching techniques, each strategy plays a vital role in ensuring data accuracy.

As data continues to drive decision-making in ecommerce and beyond, investing in robust data handling practices is essential.

Ready to elevate your data quality and unlock actionable insights? Leverage professional Web Scraping Services with Real Data API today and stay ahead of the competition.