Introduction

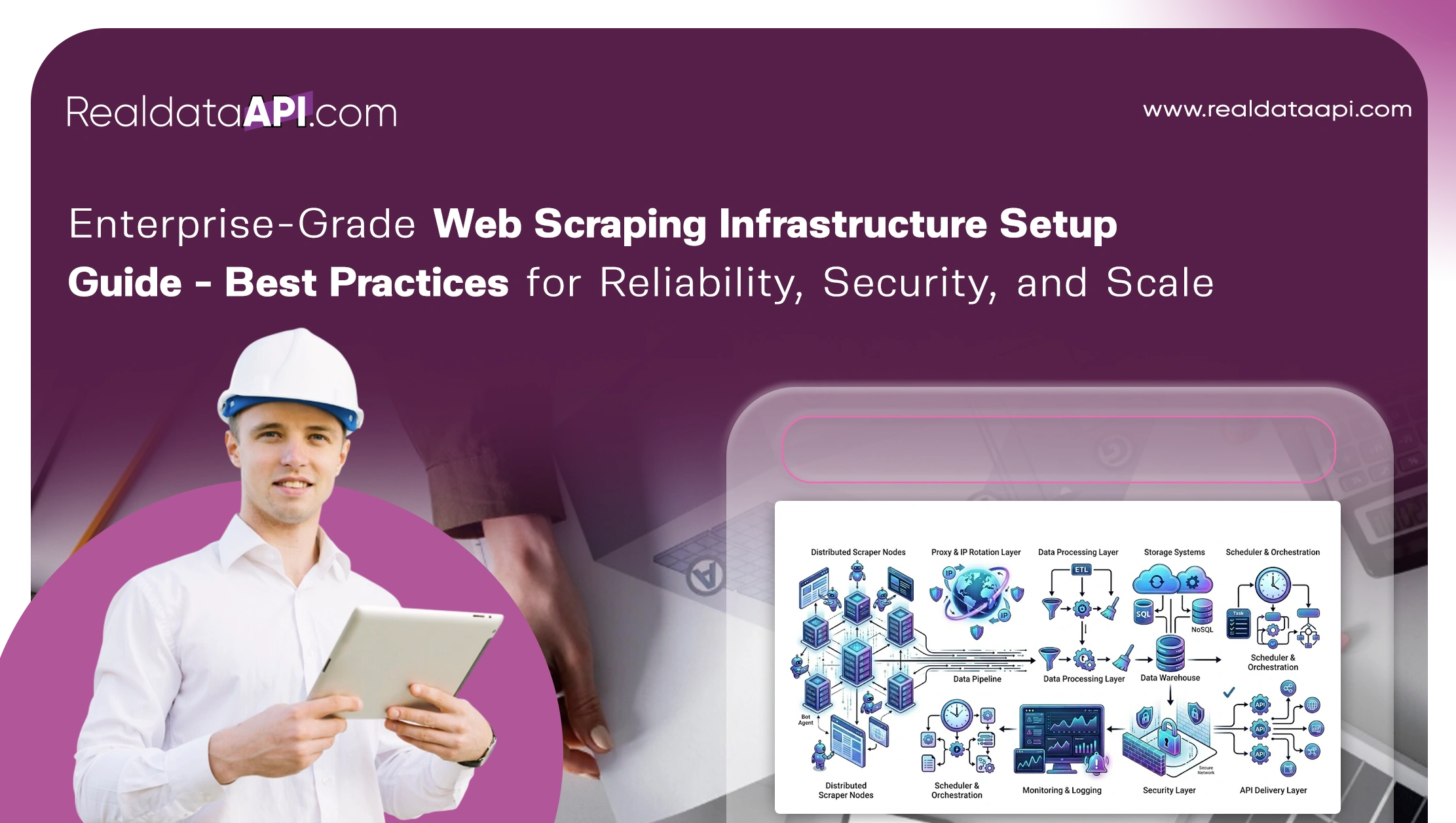

In today's data-driven economy, organizations rely heavily on large-scale data extraction to power analytics, pricing intelligence, and market insights. An enterprise-grade web scraping infrastructure setup guide is essential for building systems that can handle millions of requests efficiently while maintaining reliability and compliance. Businesses are increasingly adopting advanced Web Scraping API solutions to streamline data collection, reduce operational overhead, and ensure consistent performance.

From proxy management and distributed crawling to data validation and storage pipelines, enterprise scraping requires a carefully designed architecture. Without the right framework, companies face issues like IP blocking, inconsistent data quality, and system downtime. This blog explores best practices for creating scalable, secure, and fault-tolerant scraping infrastructures, supported by industry statistics from 2020 to 2026.

Strengthening Core Systems for Long-Term Performance

A secure and scalable enterprise data scraping architecture is the backbone of any successful scraping initiative. Enterprises must focus on modular design, distributed systems, and layered security to ensure long-term efficiency.

Between 2020 and 2026, organizations adopting distributed scraping architectures reported a 63% increase in data reliability and a 48% reduction in downtime. Microservices-based scraping frameworks allow teams to independently scale components such as crawlers, parsers, and storage systems.

| Year | Adoption of Distributed Scraping (%) | Downtime Reduction (%) |

|---|---|---|

| 2020 | 35% | 20% |

| 2022 | 52% | 35% |

| 2024 | 68% | 44% |

| 2026 | 81% | 48% |

Security plays a critical role, including IP rotation, CAPTCHA handling, and encrypted data pipelines. Enterprises also integrate authentication layers and monitoring tools to detect anomalies in real time.

A well-designed architecture ensures scalability during traffic spikes while maintaining data integrity. This approach allows businesses to extract large datasets without compromising speed or accuracy, making it a foundational element of enterprise scraping success.

Driving Efficiency Through Real-Time Capabilities

The demand for real-time scalable web scraping solutions for Enterprise has grown significantly as businesses require instant insights for decision-making. Real-time scraping enables dynamic pricing, stock monitoring, and trend analysis.

From 2020 to 2026, companies leveraging real-time scraping saw a 72% improvement in decision-making speed and a 55% increase in competitive responsiveness. Event-driven architectures and streaming pipelines play a crucial role in enabling continuous data flow.

| Year | Real-Time Adoption (%) | Decision Speed Improvement (%) |

|---|---|---|

| 2020 | 28% | 25% |

| 2022 | 46% | 38% |

| 2024 | 61% | 49% |

| 2026 | 79% | 72% |

Technologies such as message queues, serverless computing, and real-time APIs allow businesses to process data instantly. These systems also reduce latency and ensure high availability.

By implementing real-time scraping frameworks, enterprises can stay ahead of competitors, respond to market changes instantly, and optimize operational efficiency. This capability is no longer optional but a necessity in fast-paced industries like e-commerce and finance.

Building Systems That Never Fail Under Pressure

Creating building fault-tolerant web scraping systems at scale is essential for maintaining uninterrupted operations. Failures can occur due to network issues, IP bans, or server overloads, making resilience a top priority.

Between 2020 and 2026, enterprises that implemented fault-tolerant systems reduced scraping interruptions by 67% and improved data consistency by 59%. Redundancy, auto-retry mechanisms, and load balancing are key components of such systems.

| Year | Fault-Tolerant Adoption (%) | Data Consistency Improvement (%) |

|---|---|---|

| 2020 | 30% | 22% |

| 2022 | 48% | 37% |

| 2024 | 64% | 51% |

| 2026 | 78% | 59% |

Distributed task queues and failover systems ensure that scraping jobs continue even if individual nodes fail. Monitoring tools help detect and resolve issues proactively.

By focusing on resilience, enterprises can maintain high uptime, minimize data loss, and ensure continuous data extraction even under challenging conditions. This is critical for mission-critical applications where downtime can lead to significant revenue loss.

Leveraging Cloud for Elastic Data Operations

The adoption of cloud-based web scraping infrastructure for Enterprise has transformed how organizations manage large-scale data extraction. Cloud platforms provide flexibility, scalability, and cost efficiency.

From 2020 to 2026, cloud-based scraping adoption increased from 40% to 85%, with businesses reporting a 60% reduction in infrastructure costs. Auto-scaling capabilities allow systems to handle fluctuating workloads efficiently.

| Year | Cloud Adoption (%) | Cost Reduction (%) |

|---|---|---|

| 2020 | 40% | 25% |

| 2022 | 58% | 38% |

| 2024 | 72% | 49% |

| 2026 | 85% | 60% |

Cloud environments also support distributed crawling, global proxy networks, and centralized data storage. Integration with analytics tools further enhances data processing capabilities.

By leveraging cloud infrastructure, enterprises can deploy scraping systems quickly, scale resources on demand, and optimize operational costs. This approach ensures agility and efficiency in managing large-scale scraping operations.

Designing Systems for Maximum Throughput

Choosing the best architecture for large scale web scraping systems is crucial for achieving high performance and efficiency. Enterprises must focus on parallel processing, efficient resource allocation, and optimized data pipelines.

Between 2020 and 2026, organizations using optimized architectures achieved a 70% increase in scraping speed and a 52% improvement in data accuracy. Horizontal scaling and containerization play a significant role in achieving these results.

| Year | Performance Improvement (%) | Accuracy Improvement (%) |

|---|---|---|

| 2020 | 32% | 24% |

| 2022 | 49% | 36% |

| 2024 | 61% | 45% |

| 2026 | 70% | 52% |

Techniques such as headless browsers, smart schedulers, and data deduplication enhance system efficiency. Load balancing ensures even distribution of tasks across nodes.

A well-optimized architecture enables enterprises to process massive volumes of data quickly and accurately. This is essential for maintaining competitiveness in data-intensive industries.

Unlocking Value Through Managed Solutions

Many enterprises are turning to Web Scraping Services to simplify operations and focus on core business activities. Managed services provide ready-to-use infrastructure, reducing the complexity of building and maintaining scraping systems.

From 2020 to 2026, adoption of managed scraping services grew from 25% to 68%, with companies reporting a 45% reduction in operational costs and a 58% improvement in data delivery speed.

| Year | Service Adoption (%) | Cost Savings (%) |

|---|---|---|

| 2020 | 25% | 18% |

| 2022 | 39% | 29% |

| 2024 | 54% | 37% |

| 2026 | 68% | 45% |

These services include proxy management, data extraction, and API integration. They also offer scalability and reliability without requiring in-house expertise.

By leveraging managed services, enterprises can accelerate data acquisition, reduce technical challenges, and ensure consistent performance. This approach is particularly beneficial for organizations with limited resources or expertise.

Why Choose Real Data API?

Real Data API stands out as a trusted partner for Enterprise Web Crawling solutions. With advanced capabilities and scalable infrastructure, it simplifies complex scraping requirements for businesses of all sizes.

Their solutions align perfectly with an enterprise-grade web scraping infrastructure setup guide, offering features like intelligent proxy rotation, real-time data delivery, and robust security measures. Enterprises benefit from high uptime, accurate data extraction, and seamless integration with existing systems.

Real Data API also provides customizable solutions tailored to specific business needs, ensuring optimal performance and efficiency. Whether you need large-scale data extraction or real-time insights, their platform delivers reliable results.

Conclusion

Building a robust scraping system requires careful planning, advanced technology, and a focus on scalability and security. By following an enterprise-grade web scraping infrastructure setup guide, businesses can create systems that deliver consistent, high-quality data. Leveraging Web Scraping Datasets further enhances analytics capabilities, enabling organizations to make informed decisions.

Investing in the right infrastructure ensures long-term success and competitive advantage in today's data-driven world. The enterprise-grade web scraping infrastructure setup guide provides a roadmap for achieving reliability, efficiency, and scale.

Ready to transform your data strategy? Get started with Real Data API today and unlock the full potential of enterprise web scraping.