Introduction

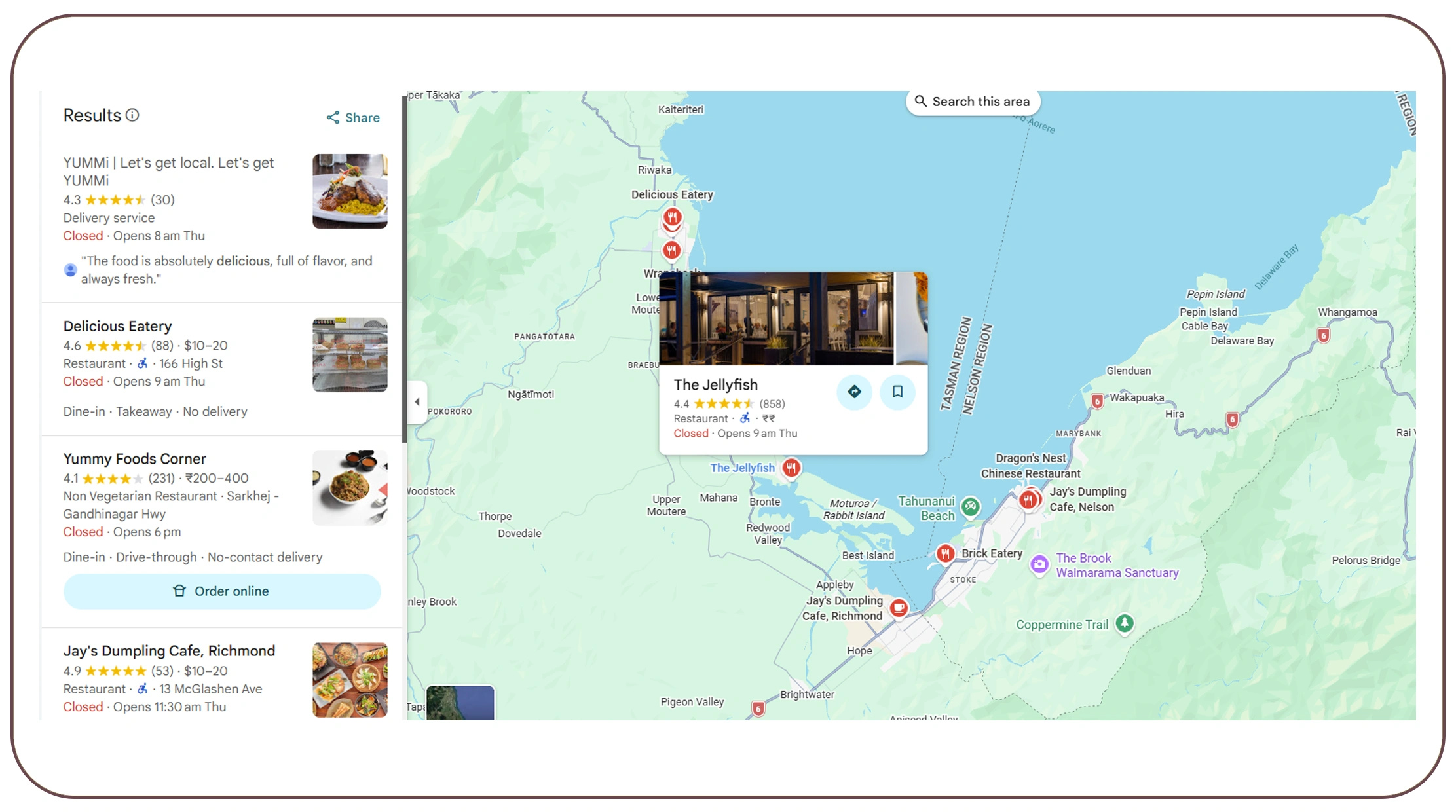

The food delivery industry in New Zealand is expanding rapidly, with platforms like www.yummi.co.nz connecting customers to thousands of restaurants across multiple cities. As competition increases, businesses need structured, real-time data to make informed decisions about pricing, promotions, market positioning, and operational efficiency.

This is where advanced web scraping becomes critical.

In this 5-part series, we explore how to build a powerful food delivery intelligence system by extracting structured data from YUMMi NZ. In Part 1, we focus on building the core dataset — the foundation that powers pricing analytics, discount tracking, geo-level insights, and competitive benchmarking.

For businesses leveraging solutions like Real Data API, building a structured and scalable Web Datasets is the first step toward transforming raw web data into actionable food delivery intelligence.

Why Scrape YUMMi NZ for Food Delivery Analytics?

Food delivery platforms contain valuable structured signals such as:

- Restaurant listings by city

- Menu categories and item-level pricing

- Cuisine distribution

- Ratings and review counts

- Delivery fees and minimum order thresholds

- Estimated delivery times

When systematically extracted and structured, this data can power:

- Market expansion strategies

- Real-time price monitoring

- Competitive intelligence dashboards

- Promotional campaign analysis

- City-wise demand forecasting

A scalable infrastructure such as Real Data API enables businesses to automate this data extraction and convert it into clean, analytics-ready datasets.

But before advanced analytics, we need a strong foundation.

Understanding YUMMi's Data Architecture

Food delivery platforms typically operate across multiple layers of structured data.

- Location Layer

- City

- Suburb

- Postal code

- Delivery radius

- Restaurant Layer

- Restaurant name

- Cuisine type

- Ratings & review count

- Estimated delivery time

- Delivery fee

- Minimum order value

- Menu Layer

- Category (Starters, Mains, etc.)

- Item name

- Description

- Base price

- Add-on/customization pricing

- Metadata Layer

- Promotional offers

- Service fees

- Availability status

- Operating hours

In Part 1, we focus on extracting Layers 1–3 to build a robust core dataset schema.

Step 1: Designing the Core Dataset Schema

Scraping without structure leads to messy datasets. Before extracting data, define a normalized schema.

Restaurant Dataset Structure

| Field | Description |

|---|---|

| restaurant_id | Unique ID |

| name | Restaurant name |

| city | Location |

| suburb | Sub-location |

| cuisine | Primary cuisine |

| rating | Average rating |

| review_count | Number of reviews |

| delivery_time | Estimated delivery |

| delivery_fee | Delivery charge |

| minimum_order | Minimum order value |

Menu Dataset Structure

| Field | Description |

|---|---|

| item_id | Unique ID |

| restaurant_id | Linked restaurant |

| category | Menu category |

| item_name | Dish name |

| description | Item description |

| base_price | Price |

| availability | Stock status |

This structure allows seamless integration into business intelligence tools and data warehouses. Many enterprise teams use Real Data API pipelines to automate schema validation, cleaning, and storage into analytics systems.

Step 2: Location-Based Crawling Strategy

Food delivery platforms personalize listings based on user location via Food Data Scraping API.

To build a nationwide dataset, you must:

- Simulate multiple NZ cities (Auckland, Wellington, Christchurch, Hamilton)

- Trigger dynamic restaurant listings per suburb

- Capture pagination and infinite scroll data

- Map geo-coordinates if available

Location-based scraping enables:

- City-wise cuisine density analysis

- Regional delivery fee modeling

- Localized price benchmarking

- Expansion opportunity detection

A structured geo-crawling system — like those built within Real Data API frameworks — ensures complete market coverage instead of fragmented suburb-level data.

Step 3: Extracting Restaurant-Level Intelligence

Restaurant-level signals are the backbone of food delivery analytics.

Key elements to extract:

- Restaurant name

- Cuisine tags

- Ratings & reviews

- Delivery time estimate

- Delivery fee

- Minimum order requirement

This data helps answer strategic questions:

- Which cuisine dominates Auckland?

- Do higher-rated restaurants charge higher delivery fees?

- Which suburbs have premium pricing clusters?

Restaurant-level datasets create segmentation models such as:

- Premium vs budget restaurants

- Fast-delivery clusters

- High-review density competitors

This layer supports competitive intelligence engines and benchmarking dashboards.

Step 4: Menu-Level Data Extraction

Menu data unlocks deeper pricing and demand insights.

Each restaurant includes:

- Multiple categories

- Dozens of items

- Variable pricing structures

- Customization add-ons

Extracting structured menu data enables:

- Price comparison across competitors

- Cuisine-level price range analysis

- Inflation trend monitoring

- Category performance analysis

- Value meal benchmarking

For example:

- What is the average pizza price in Wellington?

- How do burger prices differ between Auckland and Christchurch?

- Which cuisine shows the highest price volatility?

Real Data API systems typically structure this menu-level data into relational models, allowing seamless integration with analytics dashboards.

Handling Dynamic Rendering & API Calls

Modern delivery platforms rely heavily on:

- JavaScript rendering

- Dynamic pricing updates

- Session-based location detection

- Lazy loading content

To build a reliable scraper:

- Use headless browsing frameworks

- Monitor network requests

- Identify publicly accessible API endpoints

- Implement rate-limiting

- Rotate IPs if necessary

- Handle cookie/session management

Scalability matters.

For enterprise monitoring, Real Data API infrastructures support:

- Distributed crawling

- Incremental data refresh

- Daily or hourly updates

- Automated error detection

Without automation, manual scraping quickly becomes unsustainable.

Data Cleaning & Normalization

Raw scraped data is rarely ready for analytics.

Cleaning steps include:

- Removing currency symbols

- Converting delivery times into numeric ranges

- Standardizing cuisine categories

- Deduplicating restaurants

- Handling missing values

- Normalizing suburb naming

For example:

- "$5 Delivery" → 5

- "25–40 mins" → 32.5 (average)

Clean, normalized data ensures:

- Accurate dashboards

- Reliable price comparisons

- Valid forecasting models

- Error-free benchmarking

Real Data API pipelines typically include automated validation rules to maintain dataset consistency across refresh cycles.

Structuring Data for Analytics Infrastructure

Once cleaned, store data in:

- SQL databases

- Cloud warehouses

- Data lakes

- Analytics platforms

Important features to enable:

- Historical price tracking

- Snapshot comparisons

- Change detection

- Incremental crawling

- Audit logging

Historical data enables:

- Inflation monitoring

- Seasonal pricing shifts

- Promotional impact analysis

- Delivery fee trend mapping

This historical capability becomes critical in Part 2 when we analyze discount and promotional campaign intelligence.

Business Use Cases of the Core Dataset

With a structured Food Dataset in place, businesses can unlock:

- Market Intelligence

- Cuisine distribution by city

- Delivery fee averages by suburb

- Restaurant density mapping

- Competitive Benchmarking

- Price comparison models

- Rating vs pricing correlation

- Minimum order threshold analysis

- Expansion Strategy

- Underserved locations

- High-demand cuisine gaps

- Competitive saturation levels

- Investment & Strategy Planning

- Market maturity signals

- Premium positioning indicators

- Revenue opportunity mapping

This foundational dataset powers every advanced module in the remaining parts of this series.

Building a Scalable Scraping Infrastructure

Enterprise Web Crawling requires:

- Proxy management

- Distributed architecture

- Modular extraction scripts

- Monitoring & alerting

- Auto-retry logic

- Structure-change detection

Without automation, maintaining data accuracy becomes difficult.

A managed solution like Real Data API can streamline:

- End-to-end scraping workflows

- Data structuring

- Cloud storage integration

- API-based data delivery

- Real-time analytics feed

This allows businesses to focus on insights rather than infrastructure maintenance.

Ethical & Responsible Scraping

When extracting publicly available business data:

- Respect request limits

- Avoid server overload

- Follow compliance guidelines

- Use data for legitimate analytical purposes

Responsible scraping ensures sustainability and long-term monitoring capability.

Conclusion

Building a core dataset from YUMMi NZ is not just a technical exercise — it is the foundation of food delivery intelligence in New Zealand.

By structuring:

- Restaurant-level data

- Menu-level pricing

- Location-based segmentation

- Delivery fee modeling

Businesses can unlock strategic insights that drive pricing optimization, expansion planning, and competitive benchmarking.

However, sustainable monitoring requires scalable infrastructure, automated cleaning pipelines, historical tracking, and structured API-based delivery systems.

This is where Real Data API plays a critical role.

Real Data API enables businesses to:

- Automate large-scale web scraping

- Maintain clean and structured datasets

- Monitor food delivery markets in real time

- Power dashboards and BI tools

- Detect price, menu, and delivery fee changes

- Scale data extraction across multiple cities seamlessly

In today's competitive food delivery landscape, data-driven decision-making separates market leaders from reactive players.

Part 1 lays the foundation.

In Part 2, we will transform this structured dataset into a powerful discount tracking and promotional campaign intelligence engine — unlocking deeper competitive advantages.