Introduction

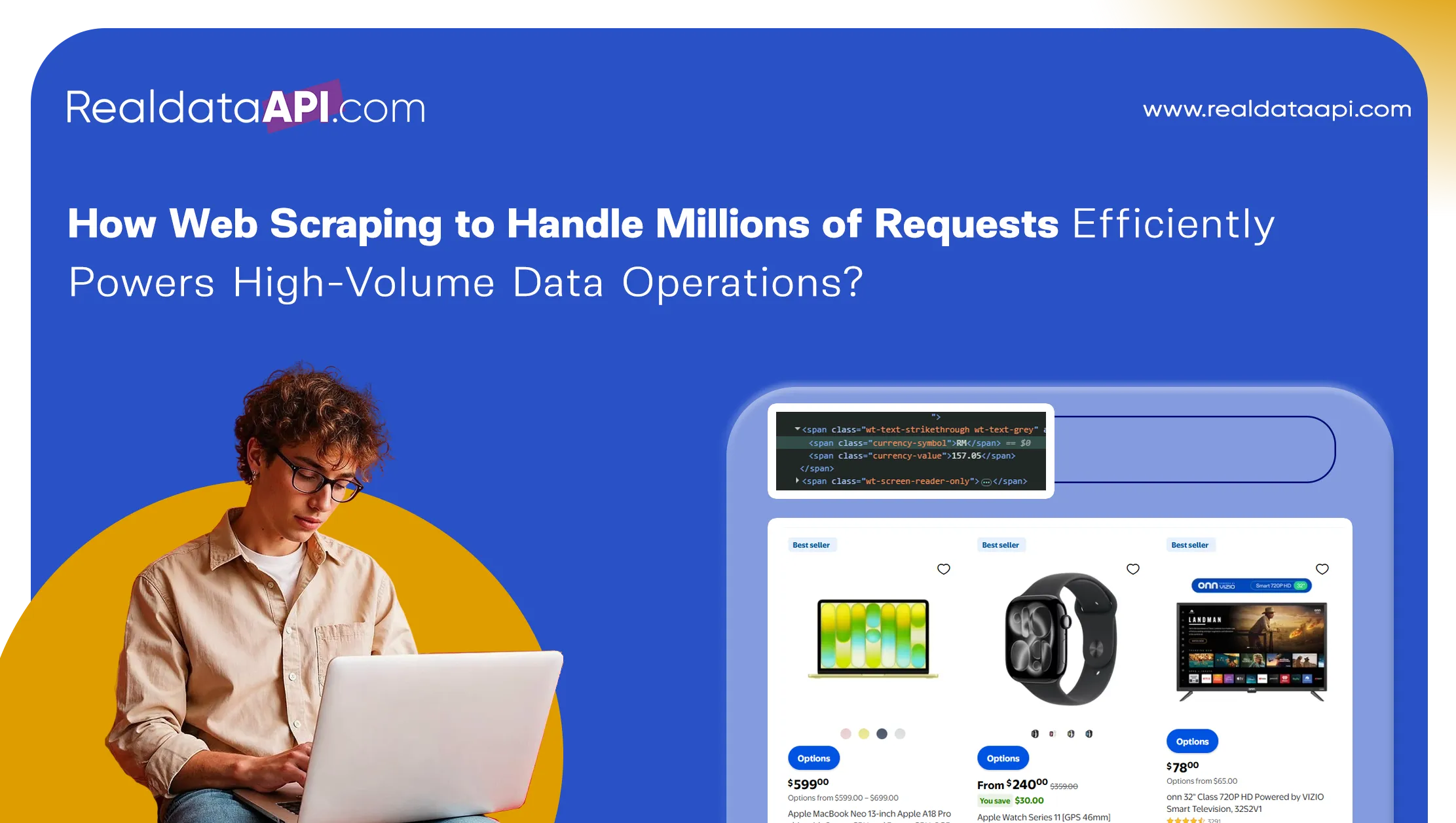

In the era of big data, Web Scraping to Handle Millions of Requests Efficiently has become a cornerstone for businesses aiming to scale their data operations. Organizations today rely on massive volumes of web data to fuel analytics, pricing strategies, and market intelligence. However, managing such high-frequency requests requires advanced infrastructure, smart scheduling, and automation. This is where Robotic Process Automation plays a crucial role, enabling seamless workflows and reducing manual intervention.

Between 2020 and 2026, global data consumption has increased by over 150%, with enterprises processing millions of requests daily. Without efficient scraping strategies, businesses face issues like IP blocking, latency, and inconsistent data delivery. High-volume scraping is no longer just about extraction—it's about reliability, scalability, and performance optimization.

This blog explores how organizations can efficiently manage large-scale scraping operations, the technologies that power them, and the best practices to ensure consistent, high-quality data output for decision-making.

Scaling Extraction Without Breaking Systems

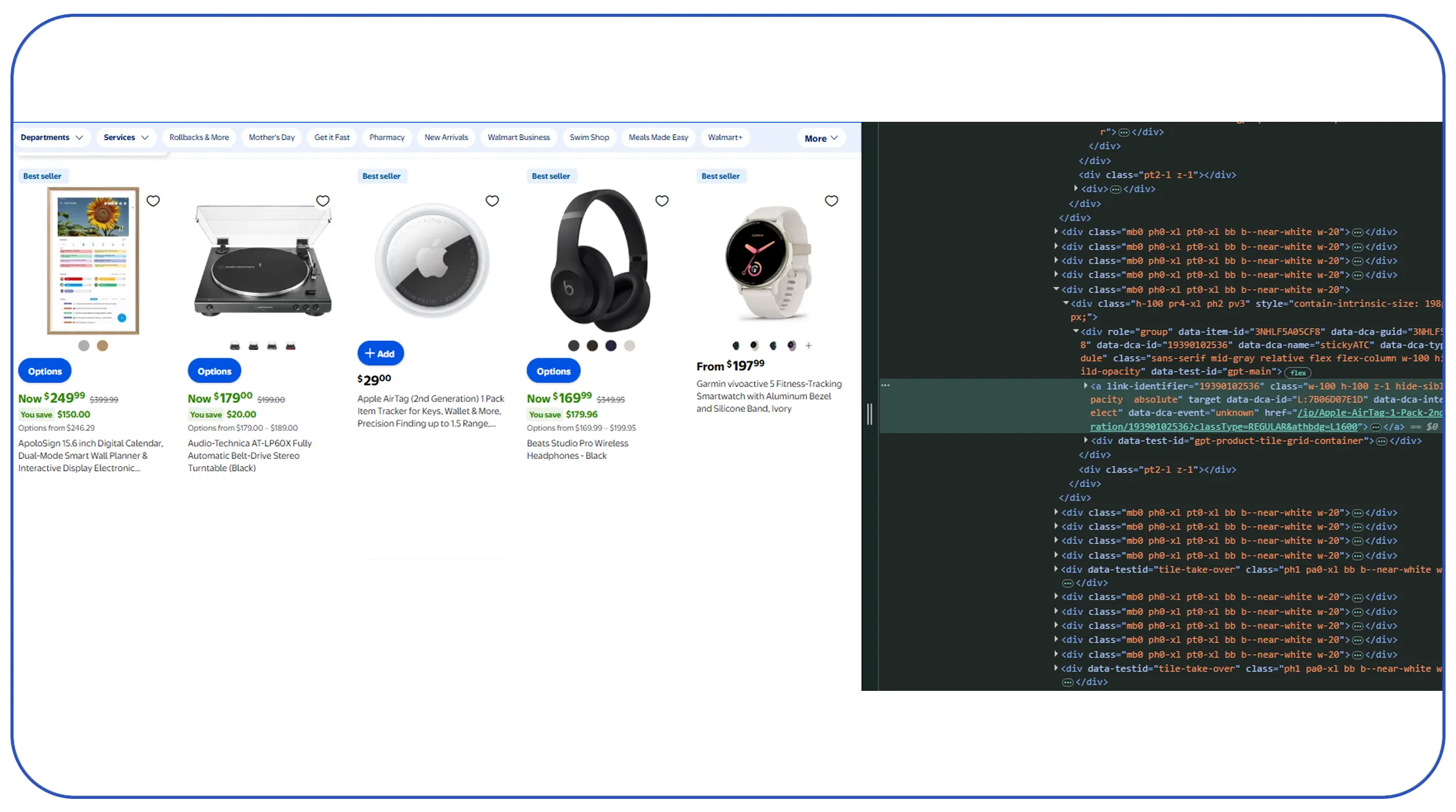

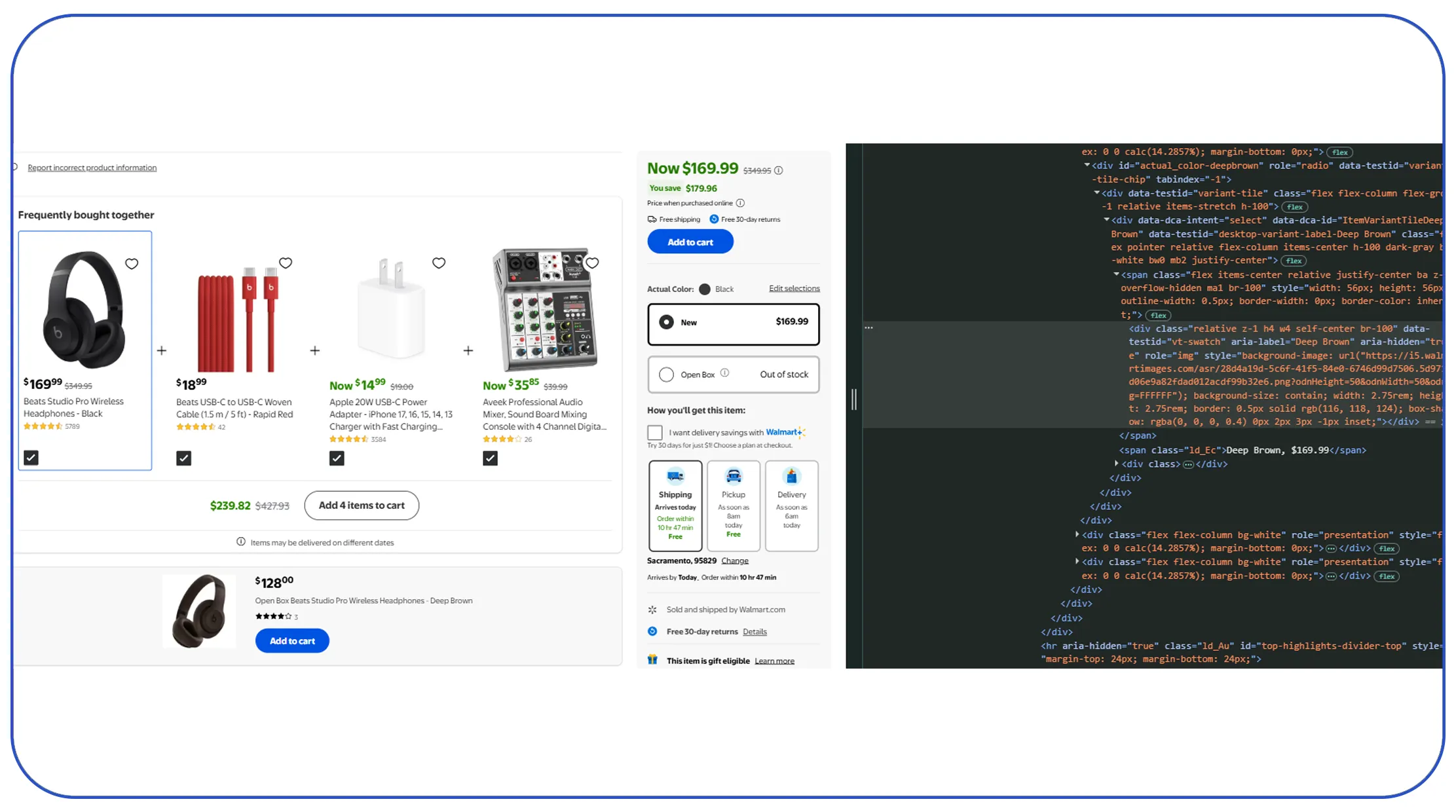

Handling massive data loads requires implementing the best practices for scraping millions of pages while maintaining system stability. High-volume scraping demands a distributed architecture where workloads are balanced across multiple servers to avoid bottlenecks.

From 2020 to 2026, companies adopting distributed scraping systems reported a 45% improvement in uptime and efficiency. Key practices include rotating proxies, implementing request throttling, and using headless browsers for dynamic content. These techniques ensure that scraping operations remain undetected and uninterrupted.

| Year | Pages Scraped Daily (Avg) | System Downtime Reduction |

|---|---|---|

| 2020 | 1–5 million | 20% |

| 2023 | 5–15 million | 35% |

| 2026 (Projected) | 15M+ | 50% |

Another critical factor is respecting website structures and implementing intelligent crawling strategies. This reduces the risk of bans and improves long-term sustainability. By following these best practices, businesses can scale their scraping operations while maintaining efficiency and reliability.

Maximizing Throughput and Speed

To succeed in large-scale data extraction, organizations must optimize scraping performance for millions of requests. Performance optimization involves reducing latency, improving response times, and ensuring efficient resource utilization.

Between 2020 and 2026, optimized scraping systems have achieved up to 60% faster data retrieval compared to traditional methods. Techniques such as asynchronous processing, caching, and load balancing play a significant role in enhancing performance.

| Optimization Method | Benefit | Performance Gain |

|---|---|---|

| Async Requests | Faster execution | +50% |

| Caching | Reduced redundancy | +30% |

| Load Balancing | Even workload distribution | +40% |

Additionally, cloud-based infrastructure enables dynamic scaling, allowing systems to handle spikes in request volumes without compromising speed. By focusing on performance optimization, businesses can ensure that their scraping operations remain efficient even under heavy workloads, delivering timely and accurate data.

Managing Parallel Data Requests

Efficiently handling simultaneous requests requires implementing advanced techniques for high concurrency data scraping. High concurrency ensures that multiple requests are processed in parallel, significantly increasing throughput.

From 2020 to 2026, the adoption of concurrency techniques has grown by over 55%, enabling businesses to process millions of requests in real time. Technologies such as multithreading, multiprocessing, and event-driven architectures are commonly used to achieve this.

| Technique | Function | Efficiency Gain |

|---|---|---|

| Multithreading | Parallel execution | +45% |

| Multiprocessing | CPU-intensive task handling | +50% |

| Event-driven | Non-blocking operations | +60% |

However, high concurrency must be managed carefully to avoid overwhelming target servers. Implementing rate limits and adaptive request scheduling ensures ethical and sustainable scraping practices. By leveraging these techniques, organizations can significantly boost their data extraction capabilities while maintaining system stability.

Enhancing Automation with Intelligent Systems

Modern scraping operations increasingly integrate AI Chatbot technologies to streamline workflows and improve efficiency. AI-driven systems can monitor scraping activities, detect anomalies, and even adjust strategies in real time.

Between 2020 and 2026, the use of AI in data operations has increased by over 65%, enabling smarter automation and reduced manual intervention. AI chatbots can assist in managing scraping tasks, generating reports, and providing insights on system performance.

| AI Capability | Application | Impact Level |

|---|---|---|

| Anomaly Detection | Identify scraping issues | High |

| Auto-Scaling | Adjust resources dynamically | Very High |

| Reporting | Generate insights | High |

These intelligent systems not only improve efficiency but also enhance accuracy by reducing human errors. By integrating AI into scraping workflows, businesses can achieve a higher level of automation and operational excellence.

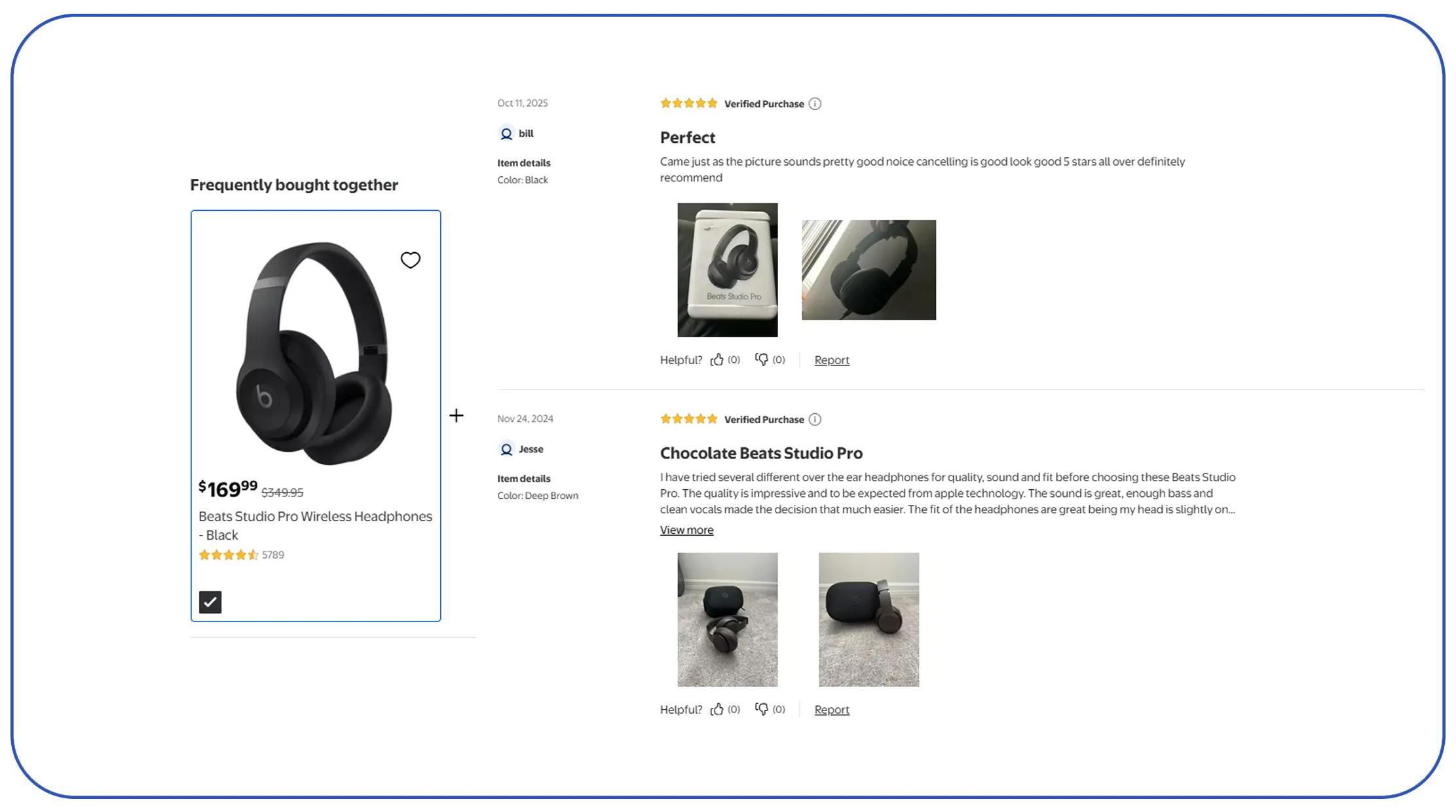

Tracking Changes in Real Time

Continuous monitoring is essential for maintaining data accuracy, making Web Data Monitoring a critical component of large-scale scraping operations. Monitoring systems track changes in web data, ensuring that businesses always have access to the latest information.

From 2020 to 2026, real-time monitoring adoption has grown by 50%, driven by the need for up-to-date insights. Monitoring tools can detect changes in pricing, availability, and content, triggering automated updates in datasets.

| Monitoring Feature | Benefit | Adoption Growth |

|---|---|---|

| Real-time Alerts | Instant updates | +45% |

| Change Detection | Accurate tracking | +50% |

| Data Sync | Consistent datasets | +40% |

By implementing robust monitoring systems, businesses can ensure that their data remains relevant and accurate. This is particularly important for industries like eCommerce and finance, where real-time information is crucial for decision-making.

Leveraging External Expertise for Scale

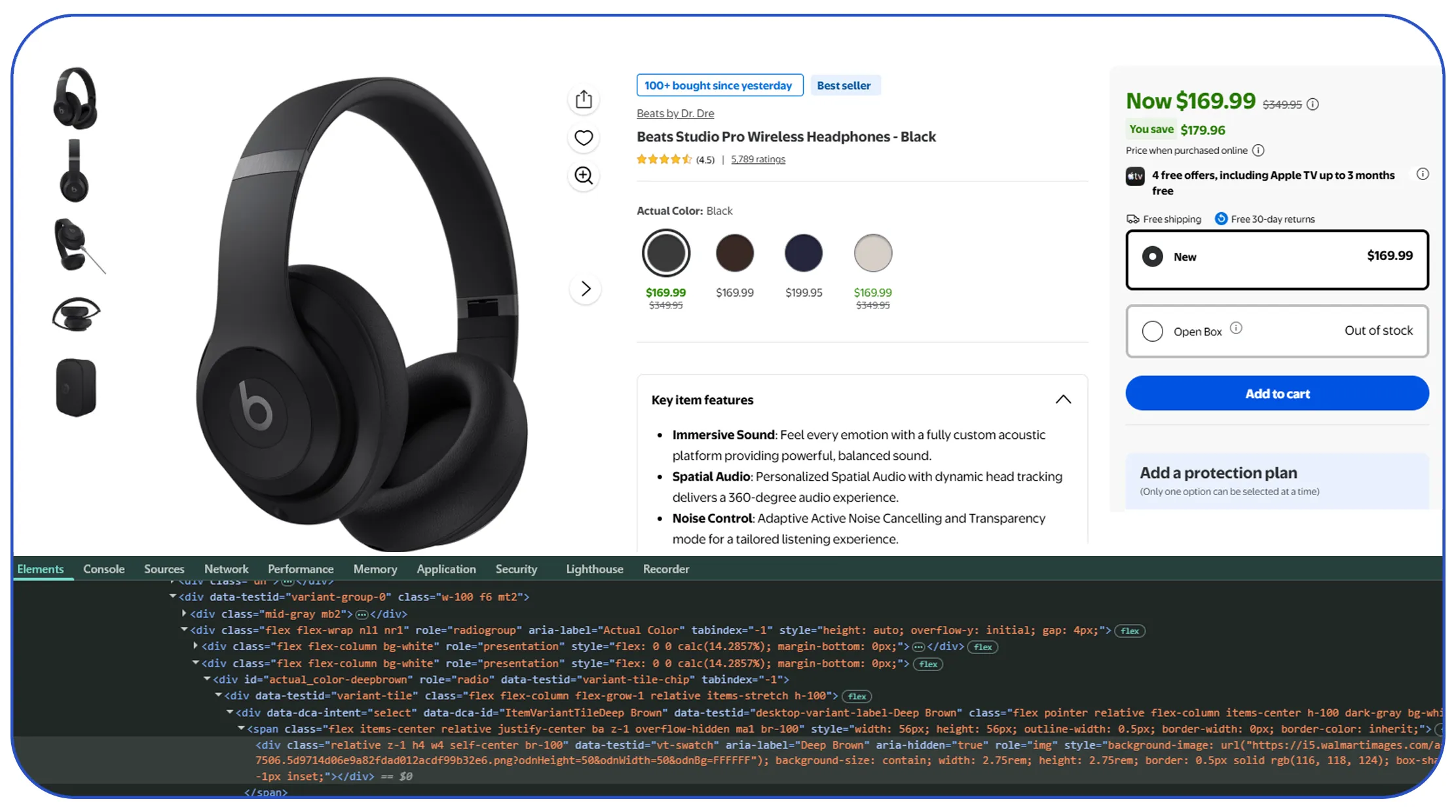

As data demands grow, many organizations turn to Web Scraping Services to manage their high-volume operations. These services provide end-to-end solutions, from data extraction to delivery, ensuring scalability and reliability.

Between 2020 and 2026, the adoption of managed scraping services has increased by over 50%, as businesses seek to reduce infrastructure costs and complexity. These services offer features such as proxy management, CAPTCHA handling, and automated workflows.

| Service Feature | Business Benefit | Growth Rate |

|---|---|---|

| Proxy Rotation | Avoid IP bans | +45% |

| Automation | Reduce manual effort | +50% |

| Real-time Delivery | Faster insights | +55% |

By outsourcing scraping operations, organizations can focus on analyzing data rather than managing infrastructure. This approach not only improves efficiency but also ensures consistent and high-quality data output.

Why Choose Real Data API?

When it comes to scaling operations, Web Scraping to Handle Millions of Requests Efficiently is at the core of Real Data API's offerings. The platform is designed to handle high-frequency requests with ease, providing reliable and structured data for businesses of all sizes.

Real Data API leverages advanced infrastructure, intelligent automation, and robust proxy networks to ensure uninterrupted data extraction. Its solutions are tailored to meet the demands of modern enterprises, enabling them to process millions of requests without performance issues.

With a focus on scalability, accuracy, and speed, Real Data API empowers businesses to transform their data operations and achieve better outcomes through efficient scraping strategies.

Conclusion

In a world driven by data, Web Scraping to Handle Millions of Requests Efficiently is essential for powering high-volume operations. From implementing best practices and optimizing performance to leveraging AI and monitoring systems, every aspect of scraping must be carefully designed for scale.

Organizations that invest in efficient scraping technologies can unlock faster insights, improve decision-making, and gain a competitive edge. As data volumes continue to grow, the ability to handle millions of requests seamlessly will define success in the digital economy.

Ready to scale your data operations? Partner with Real Data API today and unlock the full potential of high-volume web scraping.