Introduction

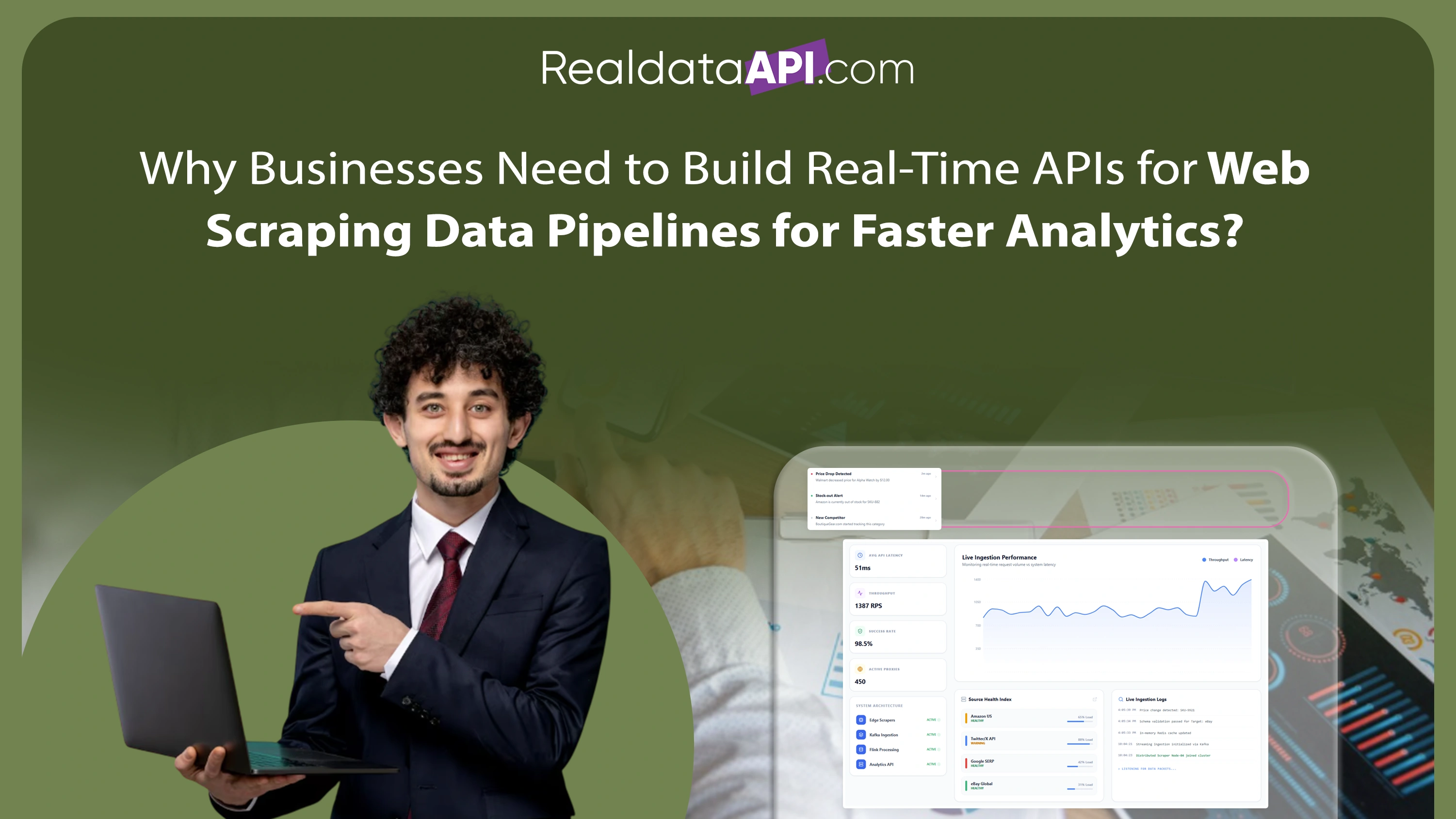

Modern enterprises generate and consume enormous volumes of digital information every day. To remain competitive, organizations must collect, process, and analyze web data in real time while maintaining scalability, security, and operational efficiency. This demand has accelerated the adoption of cloud-based web scraping pipelines using AWS and GCP, enabling businesses to automate large-scale data extraction without investing heavily in on-premise infrastructure.

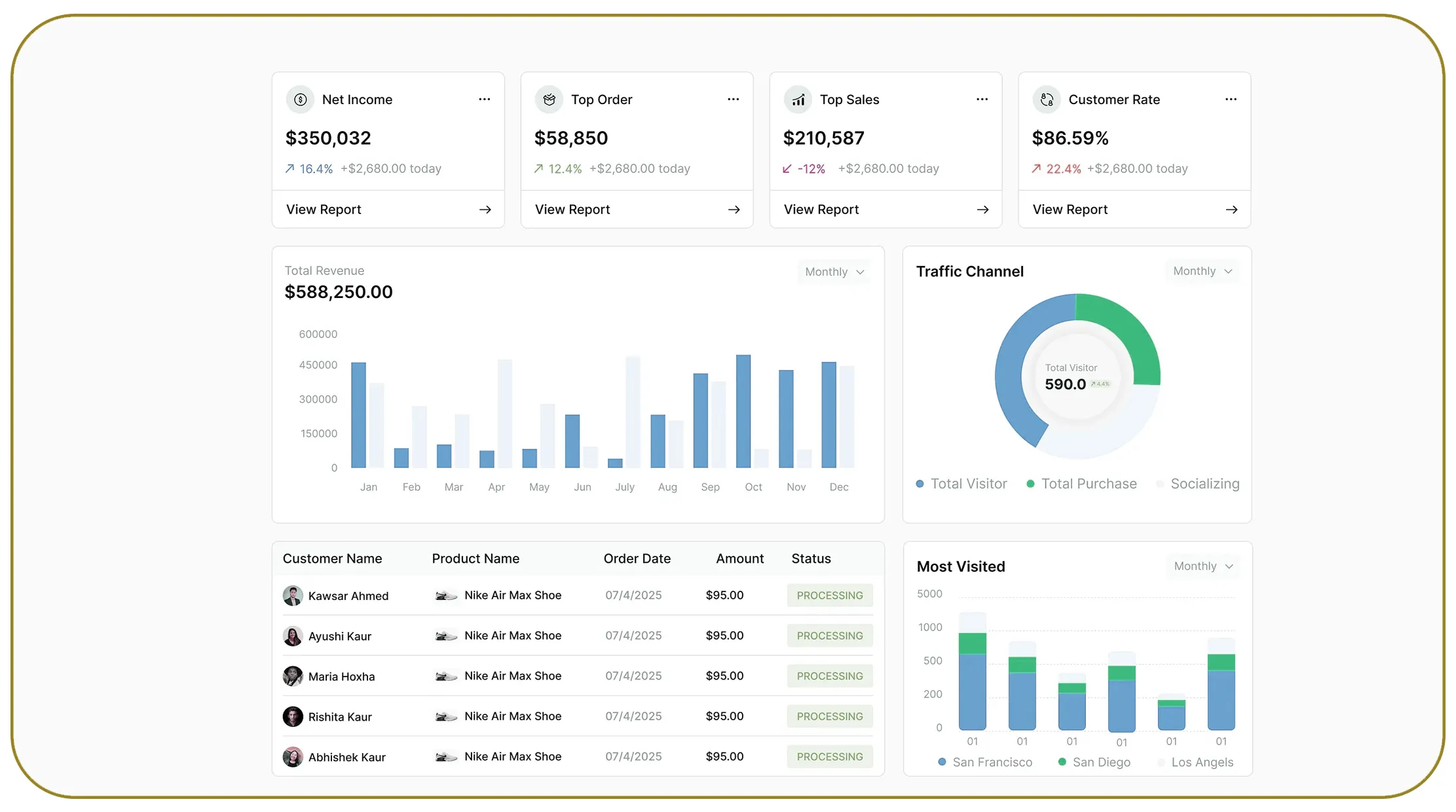

At the same time, intelligent automation platforms and distributed computing have changed the role of web scraping APIs in enterprise ecosystems. These APIs now integrate directly with analytics dashboards, business intelligence tools, AI engines, and machine learning systems.

Between 2020 and 2026, enterprise cloud adoption increased sharply, with over 85% of organizations migrating critical workloads to cloud platforms such as AWS and Google Cloud Platform (GCP). Businesses using cloud-native scraping systems report up to a 50% improvement in data processing speed, a 40% reduction in infrastructure costs, and greater operational flexibility.

Cloud-based scraping pipelines are particularly valuable for industries such as retail, finance, travel, healthcare, and e-commerce, where real-time market intelligence is essential. This post explores how AWS- and GCP-powered scraping architectures are reshaping enterprise automation, enabling scalable data operations, and supporting intelligent decision-making across industries.

Building Modern Data Collection Ecosystems

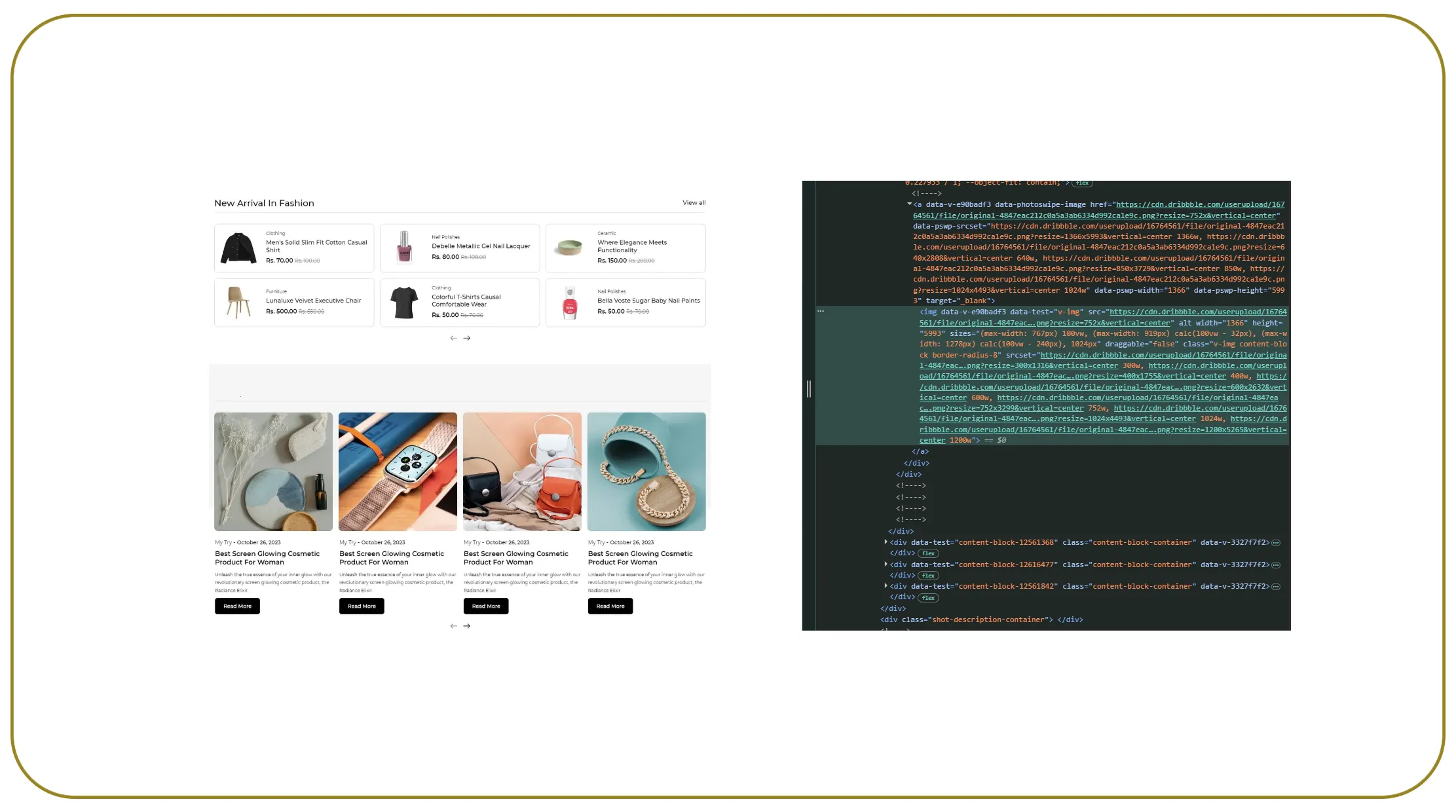

Organizations today require highly scalable systems capable of collecting millions of data points from websites, marketplaces, and online platforms every day. Traditional scraping infrastructure often struggles with scalability, downtime, and processing limitations.

| Year | Enterprise Cloud Adoption | Automated Data Pipeline Adoption |
|---|---|---|
| 2020 | 48% | 32% |
| 2022 | 63% | 49% |
| 2024 | 76% | 68% |
| 2026 | 89% | 83% |

Real-time data extraction pipelines in cloud environments let organizations process live data streams with greater speed and accuracy. AWS Lambda, Amazon S3, Google Cloud Functions, and BigQuery have become essential tools for distributed scraping systems.

Key advantages include:

- Elastic infrastructure scaling

- Reduced maintenance overhead

- Faster deployment cycles

- Improved fault tolerance

- High availability architecture

Between 2020 and 2026, enterprises using cloud-native extraction systems achieved up to 45% lower operational latency and improved data reliability compared to legacy systems.
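
As a rough illustration of the serverless pattern mentioned above (a function that fetches a page and writes it to object storage), here is a minimal sketch. It follows the `(event, context)` handler shape that AWS Lambda uses for Python functions, but everything else is an assumption made for the example: the `make_handler` and `object_key` helpers, the `{"url": ...}` event shape, and the `raw/YYYY/MM/DD/` key layout are all hypothetical. The `fetch` and `store` callables are injected so the sketch runs without cloud credentials.

```python
import hashlib
from datetime import datetime, timezone
from typing import Any, Callable, Dict


def object_key(url: str, fetched_at: datetime) -> str:
    """Build a date-partitioned storage key (hypothetical layout)."""
    # A short hash of the URL keeps keys unique without embedding the raw URL.
    digest = hashlib.sha256(url.encode("utf-8")).hexdigest()[:16]
    return "raw/{:%Y/%m/%d}/{}.html".format(fetched_at, digest)


def make_handler(
    fetch: Callable[[str], bytes],
    store: Callable[[str, bytes], None],
    now: Callable[[], datetime] = lambda: datetime.now(timezone.utc),
) -> Callable[[Dict[str, Any], Any], Dict[str, Any]]:
    """Return a Lambda-style handler wired to the given fetch/store functions."""

    def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
        url = event["url"]              # assumed event shape: {"url": "..."}
        body = fetch(url)               # download the page
        key = object_key(url, now())    # decide where the raw page is stored
        store(key, body)                # persist the raw page
        return {"url": url, "key": key, "bytes": len(body)}

    return handler
```

In a real deployment, `store` might wrap an S3 client's `put_object` call and `fetch` an HTTP client; keeping both as parameters also makes the handler easy to test locally with an in-memory dictionary.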

Transforming Data Workflows Through Intelligent Processing

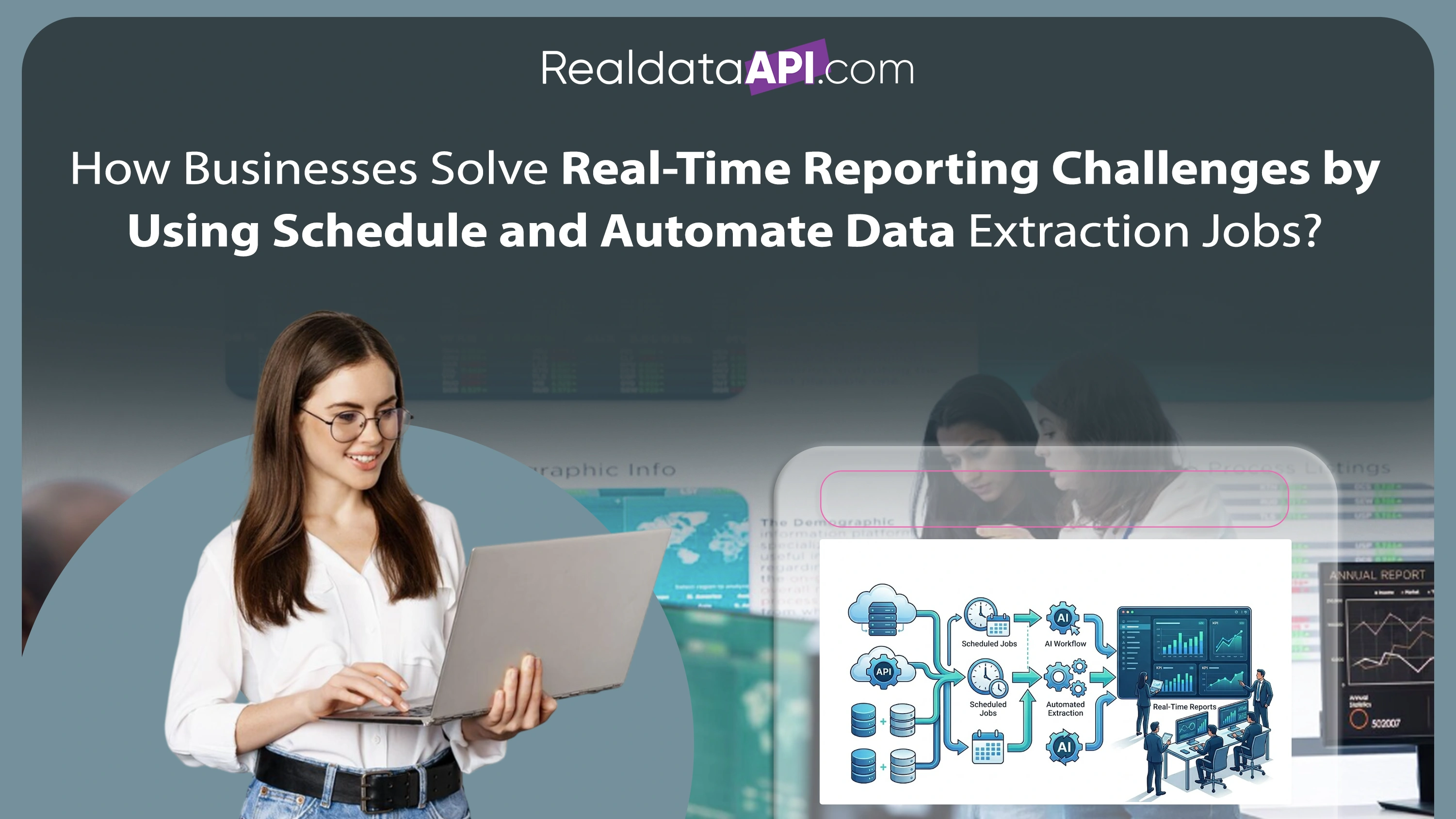

Modern enterprises no longer rely solely on raw scraped data. They require structured, analytics-ready datasets that can be integrated into reporting systems and AI models.

| Data Processing Metric | 2020 | 2023 | 2026 |
|---|---|---|---|
| Average ETL Processing Time | 8 hrs | 3 hrs | <1 hr |
| Data Accuracy Rate | 72% | 86% | 96% |
| Automation Level | 40% | 68% | 90% |

Cloud-based ETL pipelines for web-scraped data let organizations automate extraction, transformation, and loading in real time.

Cloud ETL systems powered by AWS Glue, Google Dataflow, and Apache Airflow help enterprises:

- Clean and normalize large datasets

- Eliminate duplicate records

- Streamline analytics workflows

- Improve reporting accuracy

- Reduce manual intervention

Between 2020 and 2026, businesses using automated ETL pipelines improved analytics efficiency by more than 55% while reducing operational costs significantly.
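
To make the cleaning and deduplication steps listed above more concrete, here is a small sketch of the transform stage in plain Python. It is not tied to AWS Glue, Dataflow, or Airflow. The record fields (`url`, `title`, `price`) and the function names are assumptions chosen for illustration, and a real pipeline would likely need more validation.

```python
from typing import Dict, Iterable, List


def normalize(record: Dict) -> Dict:
    """Clean one scraped record (assumed fields: url, title, price)."""
    return {
        # Strip stray whitespace around the URL.
        "url": record["url"].strip(),
        # Collapse runs of whitespace inside the title.
        "title": " ".join(record["title"].split()),
        # Accept prices such as "$1,299.50", "1299.50", or 5 and store a float.
        "price": float(str(record["price"]).replace("$", "").replace(",", "")),
    }


def deduplicate(records: Iterable[Dict]) -> List[Dict]:
    """Keep the first record seen for each URL, preserving input order."""
    seen = set()
    unique = []
    for rec in records:
        if rec["url"] not in seen:
            seen.add(rec["url"])
            unique.append(rec)
    return unique


def transform(raw_records: Iterable[Dict]) -> List[Dict]:
    """Normalize every record, then drop duplicates by URL."""
    return deduplicate(normalize(r) for r in raw_records)
```

Normalizing before deduplicating matters here: two records whose URLs differ only by surrounding whitespace are treated as the same page.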

Infrastructure Optimization for Large-Scale Operations

Enterprise scraping operations must handle dynamic websites, anti-bot systems, geo-restricted content, and rapidly changing page structures. Building resilient cloud infrastructure is critical for long-term scalability.

The adoption of best practices for cloud-based scraping infrastructure has increased sharply across enterprises seeking stable and compliant data collection systems.

| Infrastructure Capability | 2020 | 2026 |
|---|---|---|
| Containerized Deployments | 28% | 82% |
| Auto-Scaling Systems | 35% | 88% |
| Proxy Rotation Integration | 42% | 91% |
| AI-Based Error Detection | 15% | 70% |

Modern infrastructure best practices include:

- Kubernetes orchestration

- Serverless computing models

- Distributed queue systems

- Centralized logging and monitoring

- Intelligent retry mechanisms

Organizations adopting these strategies have reported up to 60% improvement in scraping uptime and better resilience against dynamic website structures.
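
One common way to implement the retry mechanisms mentioned above is exponential backoff with jitter. The sketch below is a generic version of that idea, not a description of any particular vendor's system. The function name, the default delays, and the injectable `sleep` parameter are assumptions made for the example.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    fetch: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch() until it succeeds or max_attempts is reached."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fetch()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # The delay ceiling doubles each attempt, capped at max_delay.
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time between 0 and the ceiling so
            # many workers do not retry at the same instant.
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

A production scraper would usually retry only on errors that are likely to be temporary (timeouts, HTTP 429 or 5xx responses) rather than on every exception, as this simplified version does.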

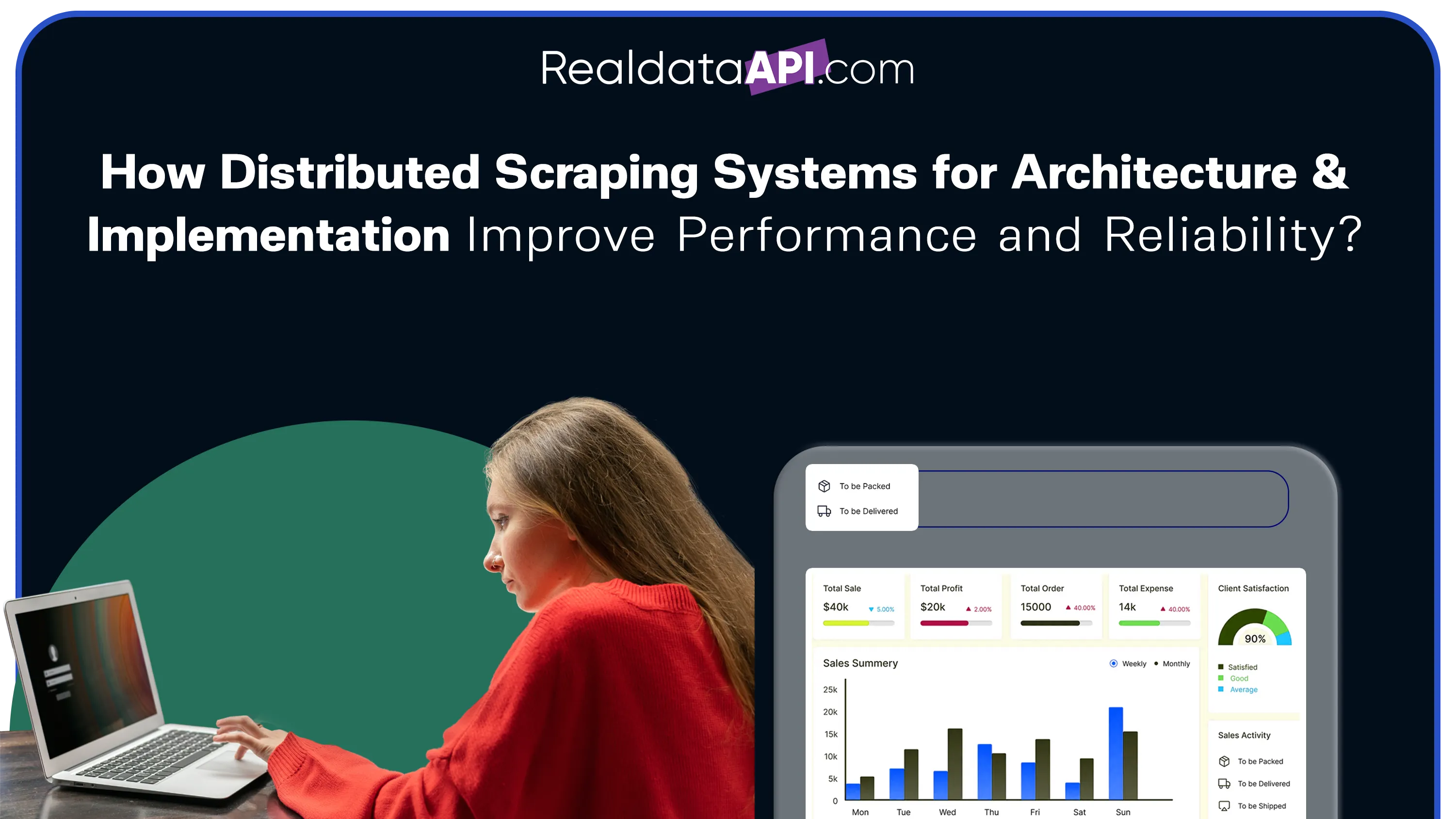

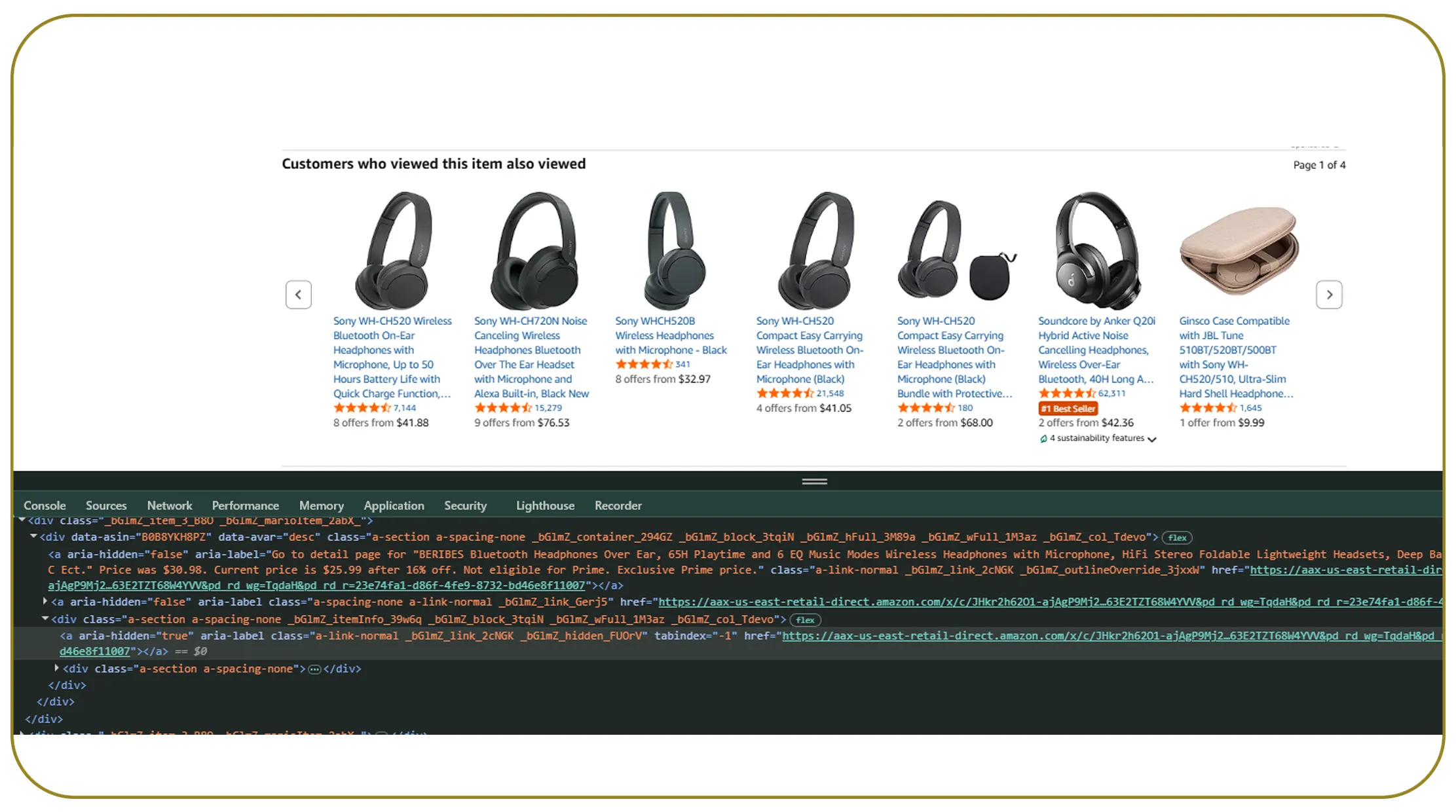

Distributed Architectures for High-Speed Data Collection

Large-scale enterprises require geographically distributed scraping systems to support global data collection operations and reduce latency.

A distributed scraping system built on AWS and GCP lets businesses run scraping operations in parallel across multiple regions.

| Metric | 2020 | 2023 | 2026 |
|---|---|---|---|
| Parallel Scraping Capacity | 5,000 req/min | 50,000 req/min | 250,000 req/min |
| Downtime | 12% | 6% | 2% |
| Regional Data Processing Speed | Moderate | High | Ultra-fast |

AWS EC2 clusters, GCP Compute Engine, and distributed message queues like Kafka and Pub/Sub help organizations manage millions of requests efficiently.
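
The queue-and-workers pattern behind this can be sketched on a single machine. In the example below, Python's in-process `queue.Queue` stands in for a distributed queue such as Kafka or Pub/Sub, and threads stand in for worker instances. The `run_workers` function and its parameters are assumptions for illustration; a real deployment would use the queue service's own client library.

```python
import queue
import threading
from typing import Callable, Dict, List, Tuple


def run_workers(
    urls: List[str],
    fetch: Callable[[str], str],
    num_workers: int = 4,
) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Fetch URLs in parallel; return (results, failures) keyed by URL."""
    tasks = queue.Queue()  # stand-in for a distributed message queue
    for url in urls:
        tasks.put(url)

    results: Dict[str, str] = {}
    failures: Dict[str, str] = {}
    lock = threading.Lock()  # protects the two shared dictionaries

    def worker() -> None:
        while True:
            try:
                url = tasks.get_nowait()
            except queue.Empty:
                return  # queue drained: this worker is done
            try:
                body = fetch(url)
            except Exception as exc:
                # Record the failure and move on to the next task.
                with lock:
                    failures[url] = repr(exc)
            else:
                with lock:
                    results[url] = body

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, failures
```

Because each worker pulls the next URL only when it is free, slow pages do not block the rest of the batch, which is the same property a distributed queue provides across machines and regions.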

Key business benefits include:

- Reduced scraping latency

- Improved regional data access

- Faster competitor intelligence gathering

- Better scalability during traffic spikes

Between 2020 and 2026, distributed scraping architectures became standard among enterprises operating across multiple global markets.

Expanding Enterprise Automation Through Managed Services

As enterprise data requirements continue to grow, organizations increasingly prefer managed scraping solutions over maintaining internal infrastructure.

The market for Web Scraping Services has expanded rapidly due to demand for scalable and compliant data collection systems.

| Year | Global Web Scraping Market Size |
|---|---|
| 2020 | $550M |
| 2022 | $820M |
| 2024 | $1.3B |
| 2026 | $2.1B |

Managed services provide:

- End-to-end infrastructure management

- Compliance-focused scraping systems

- Real-time API integrations

- Automated maintenance and scaling

- Enterprise-grade security controls

Organizations outsourcing scraping infrastructure report up to 35% lower operational complexity and faster deployment cycles compared to fully in-house systems.

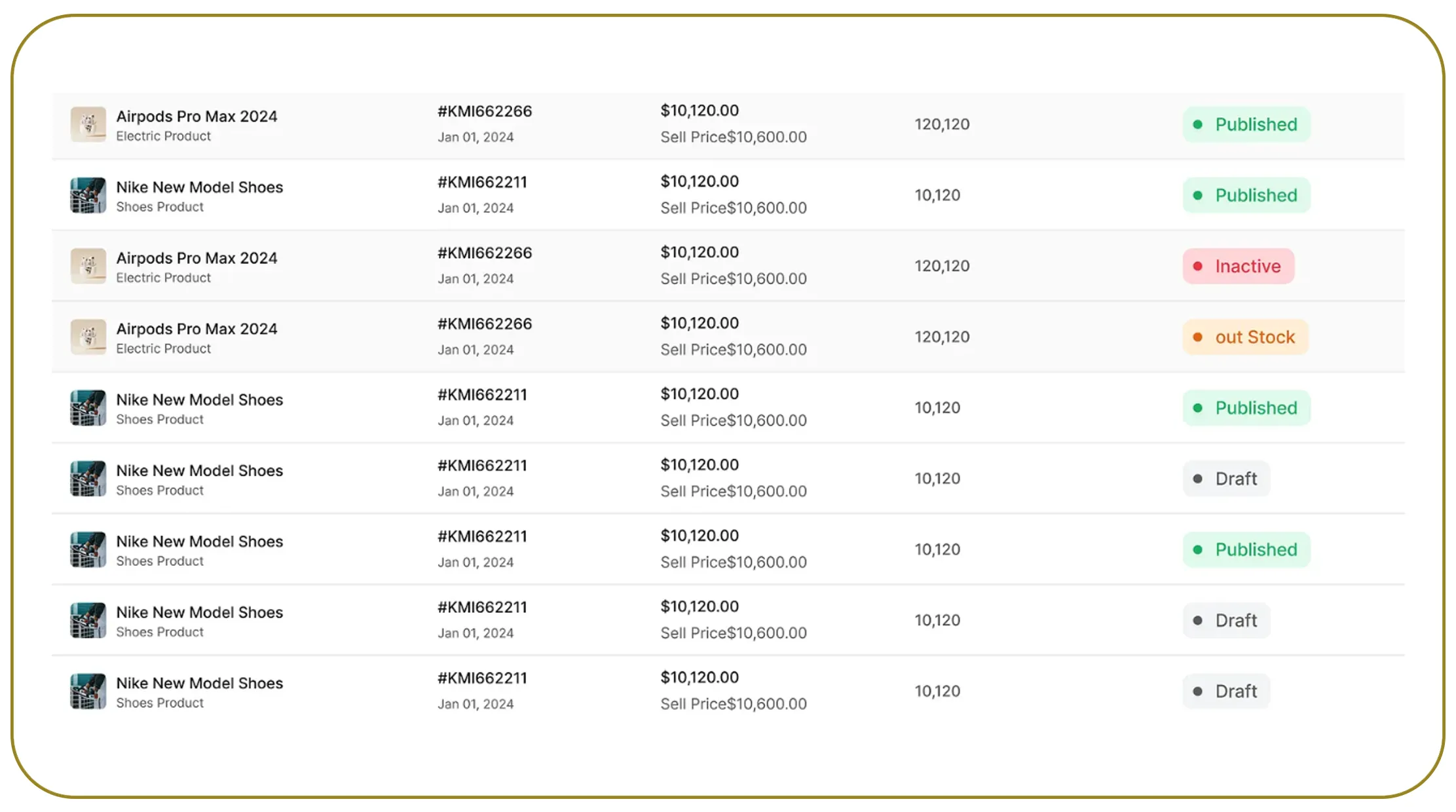

The Rise of Intelligent Enterprise Crawling Systems

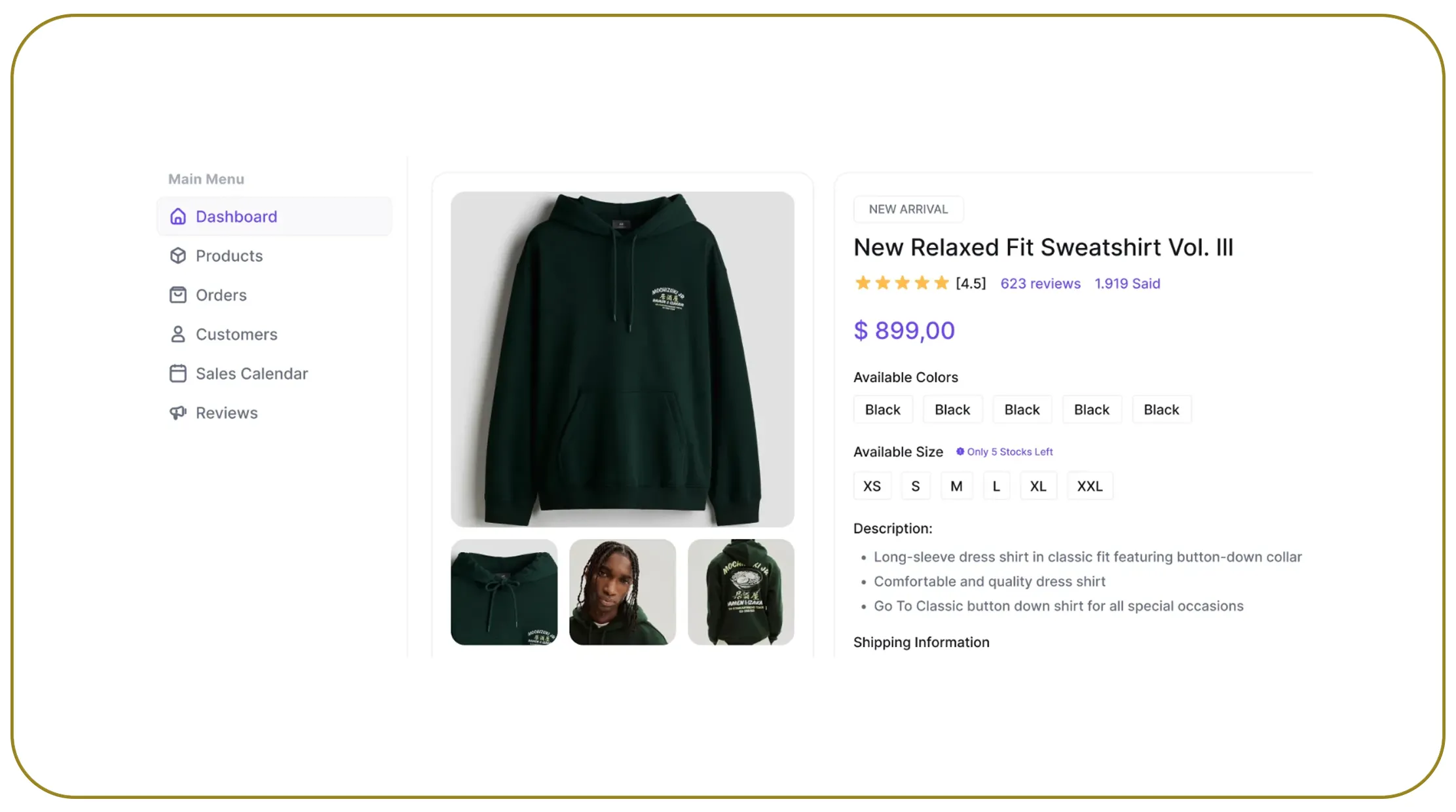

Modern enterprises require advanced crawling systems capable of extracting structured insights from large volumes of websites, product catalogs, and dynamic applications.

Enterprise Web Crawling systems powered by AI and cloud-native technologies are enabling organizations to automate large-scale competitive intelligence operations.

| Capability | 2020 | 2026 |
|---|---|---|
| AI-Powered Content Extraction | 18% | 76% |
| Automated URL Discovery | 35% | 85% |
| Real-Time Monitoring | 22% | 88% |

Advanced crawling systems support:

- Dynamic page rendering

- Multi-language content extraction

- Structured metadata analysis

- Continuous monitoring of competitor websites

Between 2020 and 2026, enterprises adopting intelligent crawling systems improved market intelligence accuracy by more than 48%.
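
Automated URL discovery, one of the capabilities in the table above, can be illustrated with Python's standard library alone. The sketch below extracts links from a page, resolves them against the page URL, and keeps only same-host HTTP(S) links. The `LinkExtractor` class and `discover_urls` function are hypothetical names for this example, and the sketch does not render JavaScript, so it would not cover dynamic pages on its own.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a document."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def discover_urls(base_url: str, html: str) -> List[str]:
    """Return unique same-host links found in html, in document order."""
    parser = LinkExtractor()
    parser.feed(html)
    base_host = urlparse(base_url).netloc
    seen = set()
    found = []
    for href in parser.hrefs:
        # Resolve relative links and drop any #fragment.
        absolute, _fragment = urldefrag(urljoin(base_url, href))
        parts = urlparse(absolute)
        if parts.scheme not in ("http", "https"):
            continue  # skip mailto:, javascript:, and similar
        if parts.netloc != base_host:
            continue  # stay on the same host
        if absolute not in seen:
            seen.add(absolute)
            found.append(absolute)
    return found
```

Feeding each discovered URL back into a work queue turns this into a simple crawl loop; respecting each site's robots.txt and terms of use is a separate step that this sketch leaves out.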

Why Choose Real Data API?

Modern enterprises require scalable, reliable, and secure data extraction solutions capable of handling high-volume workloads across industries.

Web Scraping Datasets provided by Real Data API help organizations gain instant access to structured, analytics-ready data from multiple online sources.

With expertise in cloud-based web scraping pipelines using AWS and GCP, Real Data API delivers enterprise-grade scraping infrastructure designed for scalability and automation.

Key capabilities include:

- Distributed cloud-native scraping systems

- Real-time API-based data delivery

- AI-powered extraction and parsing

- Automated ETL workflows

- Enterprise compliance and monitoring systems

- High-performance crawling architecture

Real Data API empowers businesses to streamline automation workflows, improve analytics accuracy, and reduce infrastructure complexity through intelligent cloud-based scraping solutions.

Conclusion

The future of enterprise automation depends heavily on scalable, intelligent, and cloud-native data extraction systems. Organizations that use AWS- and GCP-powered scraping pipelines gain significant advantages in operational efficiency, scalability, and real-time decision-making.

As digital ecosystems continue to expand, cloud-based web scraping pipelines using AWS and GCP are becoming foundational technologies for modern enterprises seeking competitive intelligence and automated analytics workflows.

From distributed architectures to intelligent ETL systems and enterprise crawling frameworks, cloud-native scraping infrastructure is reshaping how organizations collect and process online data. Businesses adopting these technologies can achieve faster insights, lower operational costs, and improved business agility.

Real Data API helps enterprises build high-performance scraping ecosystems that support automation, scalability, and long-term digital transformation goals.

Connect with Real Data API today to build scalable cloud-based data pipelines and transform enterprise automation with real-time web intelligence solutions!