Introduction

Modern websites are increasingly built with JavaScript frameworks such as React, Angular, and Vue.js, which makes data extraction far more complex than traditional HTML scraping. Static scrapers often miss dynamically rendered content, delayed API responses, and interactive elements that load after the initial page render. To overcome these challenges, businesses now rely on browser automation frameworks to scrape JavaScript-heavy websites and access accurate, real-time web data.

At the same time, web scraping APIs have transformed enterprise data collection by enabling scalable extraction of dynamic content across industries such as e-commerce, travel, finance, healthcare, and real estate. Organizations increasingly depend on scraping systems that can render JavaScript, execute browser actions, and collect structured datasets from modern web applications.

Between 2020 and 2026, the share of websites using heavy client-side rendering increased from approximately 38% to over 72%, significantly impacting traditional scraping approaches. Businesses adopting browser automation and cloud-native scraping solutions reported up to a 60% improvement in extraction accuracy and 45% faster data processing.

This post explores step-by-step strategies, tools, and enterprise methodologies for scraping JavaScript-driven websites efficiently while maintaining scalability, accuracy, and operational reliability.

Understanding the Shift Toward Dynamic Web Architectures

Modern websites rely heavily on asynchronous content loading, browser-side rendering, and API-driven architectures. Traditional HTML parsers see only the markup returned by the initial page request, so they miss critical data that scripts load afterward.

| Year | Websites Using Heavy JavaScript Rendering | Traditional Scraper Failure Rate |
|---|---|---|
| 2020 | 38% | 32% |
| 2022 | 49% | 45% |
| 2024 | 61% | 58% |
| 2026 | 72% | 69% |

To overcome these limitations, organizations use techniques designed specifically for dynamic websites. These methods let browser automation tools fully render a page before extracting its content.

Key techniques include:

- Headless browser rendering

- JavaScript execution monitoring

- Delayed page loading management

- Dynamic DOM interaction

- Session and cookie handling

Between 2020 and 2026, enterprises implementing dynamic rendering solutions improved extraction reliability by over 50%, especially in industries dependent on frequently updated data.
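The rendering step described above can be sketched roughly as follows. This is a minimal illustration, assuming Playwright for Python is installed (`pip install playwright`, then `playwright install chromium`). The helper names `needs_rendering` and `render_page`, the marker string, and the selector you pass in are all hypothetical and would depend on the target site.

```python
def needs_rendering(static_html: str, expected_marker: str) -> bool:
    """Return True when the raw HTML lacks the content we expect.

    A missing marker suggests the data is injected by JavaScript
    after the initial page request, so a headless browser is needed.
    """
    return expected_marker not in static_html


def render_page(url: str, wait_selector: str, timeout_ms: int = 15_000) -> str:
    """Load `url` in headless Chromium and return the DOM once `wait_selector` is visible."""
    # Imported lazily so needs_rendering() works without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            # A fresh context keeps cookies and session state isolated per run.
            context = browser.new_context()
            page = context.new_page()
            page.goto(url, wait_until="domcontentloaded")
            # Block until the JavaScript-rendered element appears (delayed loading).
            page.wait_for_selector(wait_selector, timeout=timeout_ms)
            return page.content()
        finally:
            browser.close()
```

A reasonable pattern is to try a plain HTTP fetch first and call `render_page` only when `needs_rendering` reports that the expected content is absent, since headless rendering is considerably more expensive than a static request.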

Extracting Data from Asynchronous Web Applications

Many modern websites rely on AJAX requests to fetch data dynamically without reloading pages. This architecture enhances user experience but complicates traditional scraping workflows.

| Metric | 2020 | 2023 | 2026 |
|---|---|---|---|
| AJAX-Based Websites | 42% | 58% | 76% |
| Dynamic API Requests Per Page | 12 | 28 | 46 |
| Real-Time Data Usage | 35% | 61% | 83% |

The ability to extract data from AJAX-based websites has become essential for businesses collecting real-time market intelligence and competitor insights.

Modern extraction systems use tools such as:

- Selenium

- Playwright

- Puppeteer

- Chrome DevTools Protocol

- Network request interception systems

These technologies allow scrapers to monitor API calls, capture JSON responses, and retrieve structured data directly from asynchronous requests. Organizations using AJAX-aware extraction frameworks reported up to 48% faster data collection performance between 2020 and 2026.
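As a rough sketch of capturing a JSON response from an asynchronous request, the example below waits for the first network response that looks like the page's data endpoint. It assumes Playwright for Python. The function names, the `url_hint` parameter, and the idea that the endpoint URL contains a recognizable fragment such as `/api/` are illustrative assumptions, not details of any particular site.

```python
from typing import Any


def is_json_api_response(url: str, content_type: str, url_hint: str) -> bool:
    """Match responses that look like the JSON endpoint backing the page."""
    return url_hint in url and "application/json" in content_type.lower()


def fetch_api_payload(page_url: str, url_hint: str, timeout_ms: int = 15_000) -> Any:
    """Open `page_url` and return the parsed body of the first matching JSON response."""
    # Imported lazily so the matcher above can be used without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            # Register the wait before navigating so the response is not missed.
            with page.expect_response(
                lambda r: is_json_api_response(
                    r.url, r.headers.get("content-type", ""), url_hint
                ),
                timeout=timeout_ms,
            ) as response_info:
                page.goto(page_url)
            return response_info.value.json()
        finally:
            browser.close()
```

Reading the JSON payload directly is often more robust than parsing the rendered DOM, because the structure of an API response tends to change less often than page markup.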

Automating Interactions with Modern Web Applications

Single-page applications (SPAs) present additional challenges because content updates dynamically without full page reloads. Navigation is often handled entirely on the client side, so requesting a URL alone may not return the content a user actually sees.

Browser automation lets enterprises scrape single-page applications by interacting with dynamic interfaces as real users would.

| Browser Automation Capability | 2020 | 2026 |
|---|---|---|
| Automated Click Actions | 48% | 92% |
| Infinite Scroll Handling | 32% | 88% |
| Dynamic Element Detection | 28% | 85% |

Browser automation frameworks support:

- Simulated user interactions

- Form submissions

- Pagination handling

- Infinite scrolling

- Dynamic content rendering

Businesses using browser automation for SPA extraction improved extraction accuracy by more than 55% while significantly reducing missing or incomplete datasets.
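Infinite scrolling is a good example of an interaction that needs a stopping rule. The sketch below separates the loop logic from the browser so it can be reasoned about on its own: it keeps scrolling until the item count stops growing for a few consecutive rounds. The function names, `patience`, the scroll distance, and the one-second pause are hypothetical choices, and the browser wiring assumes Playwright for Python.

```python
from typing import Callable


def scroll_until_stable(
    count_items: Callable[[], int],
    scroll_once: Callable[[], None],
    max_rounds: int = 20,
    patience: int = 2,
) -> int:
    """Scroll until the item count stops growing for `patience` consecutive rounds."""
    seen = count_items()
    stalled = 0
    for _ in range(max_rounds):
        scroll_once()
        current = count_items()
        if current > seen:
            seen, stalled = current, 0  # new items arrived, reset the stall counter
        else:
            stalled += 1
            if stalled >= patience:
                break  # nothing new after several scrolls, assume the feed ended
    return seen


def count_feed_items(url: str, item_selector: str) -> int:
    """Load a feed page, scroll it to the end, and return how many items rendered."""
    from playwright.sync_api import sync_playwright  # lazy import: optional dependency

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url)
            page.wait_for_selector(item_selector)

            def scroll_once() -> None:
                page.mouse.wheel(0, 4000)    # scroll down by 4000 pixels
                page.wait_for_timeout(1000)  # give lazy-loaded items time to render

            return scroll_until_stable(
                count_items=lambda: page.locator(item_selector).count(),
                scroll_once=scroll_once,
            )
        finally:
            browser.close()
```

The `max_rounds` cap matters in practice: some feeds never end, and without an upper bound the scraper would scroll indefinitely.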

Scaling Dynamic Data Collection Through Managed Solutions

As websites become increasingly sophisticated, many enterprises prefer outsourcing dynamic scraping infrastructure to specialized providers instead of building complex systems internally.

Demand for managed web scraping services has grown rapidly as website architectures and anti-bot technologies have become more complex.

| Year | Managed Scraping Market Size |
|---|---|
| 2020 | $620M |
| 2022 | $980M |
| 2024 | $1.6B |
| 2026 | $2.5B |

Managed scraping services provide:

- Browser rendering infrastructure

- Proxy and IP rotation systems

- CAPTCHA-solving integrations

- Cloud-based scaling capabilities

- Automated maintenance and updates

Organizations using managed services reduced infrastructure costs by approximately 35% while improving operational scalability and extraction consistency.

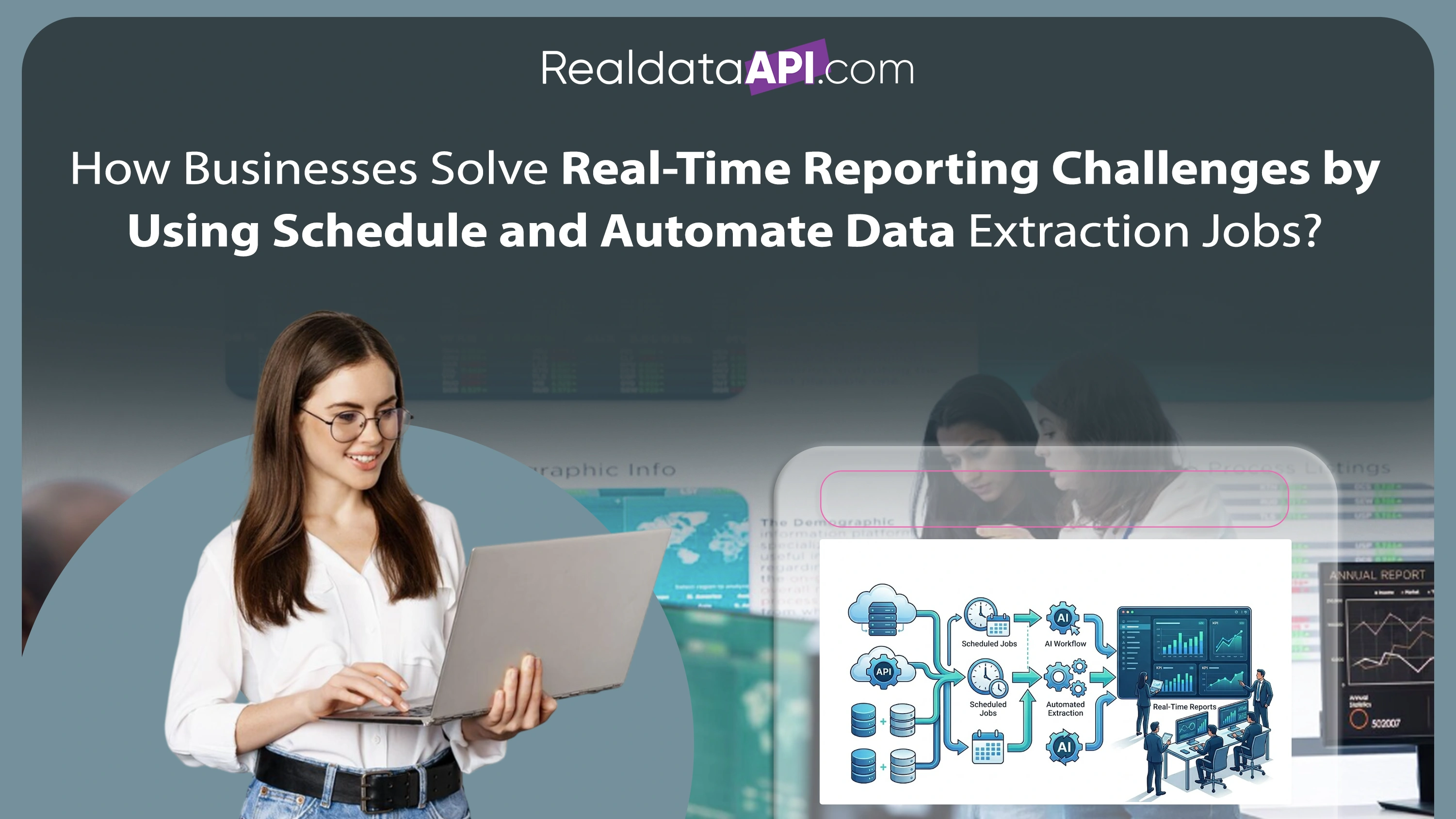

Building Large-Scale Crawling Ecosystems

Enterprise organizations often require continuous monitoring of thousands of dynamic websites simultaneously. This demands highly scalable crawling systems capable of processing large volumes of rendered content efficiently.

Enterprise web crawling systems powered by distributed cloud infrastructure enable organizations to monitor dynamic platforms continuously.

| Enterprise Crawling Metric | 2020 | 2026 |
|---|---|---|
| Crawling Capacity | 5,000 pages/hr | 120,000 pages/hr |
| Real-Time Monitoring Adoption | 22% | 81% |
| AI-Assisted Crawling Systems | 15% | 68% |

Modern enterprise crawling architectures support:

- Distributed browser clusters

- Parallel rendering systems

- Real-time monitoring workflows

- AI-driven URL prioritization

- Automated scaling infrastructure

Between 2020 and 2026, enterprises adopting intelligent crawling systems improved reporting speed by nearly 52%.
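Two of the building blocks listed above, URL prioritization and parallel rendering, can be illustrated with a small sketch. Note that this uses a plain numeric priority score rather than any AI model, and a local thread pool rather than a distributed cluster. The class and function names are hypothetical, and `render` stands in for whatever page-rendering function a real system would supply.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable


@dataclass(order=True)
class _Entry:
    priority: float
    order: int  # insertion counter, breaks ties in first-in order
    url: str = field(compare=False)


class CrawlFrontier:
    """Priority queue of URLs: lower score is crawled sooner, duplicates are ignored."""

    def __init__(self) -> None:
        self._heap: list[_Entry] = []
        self._seen: set[str] = set()
        self._counter = 0

    def add(self, url: str, priority: float) -> bool:
        """Queue `url`; return False if it was already queued before."""
        if url in self._seen:
            return False
        self._seen.add(url)
        heapq.heappush(self._heap, _Entry(priority, self._counter, url))
        self._counter += 1
        return True

    def pop_batch(self, size: int) -> list[str]:
        """Remove and return up to `size` URLs in priority order."""
        batch: list[str] = []
        while self._heap and len(batch) < size:
            batch.append(heapq.heappop(self._heap).url)
        return batch


def crawl_batch(
    urls: list[str], render: Callable[[str], str], max_workers: int = 4
) -> dict[str, str]:
    """Render a batch of URLs in parallel and map each URL to its result."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(zip(urls, pool.map(render, urls)))
```

If `render` drives a headless browser, each worker should create its own browser instance, because browser automation sessions are generally not safe to share across threads.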

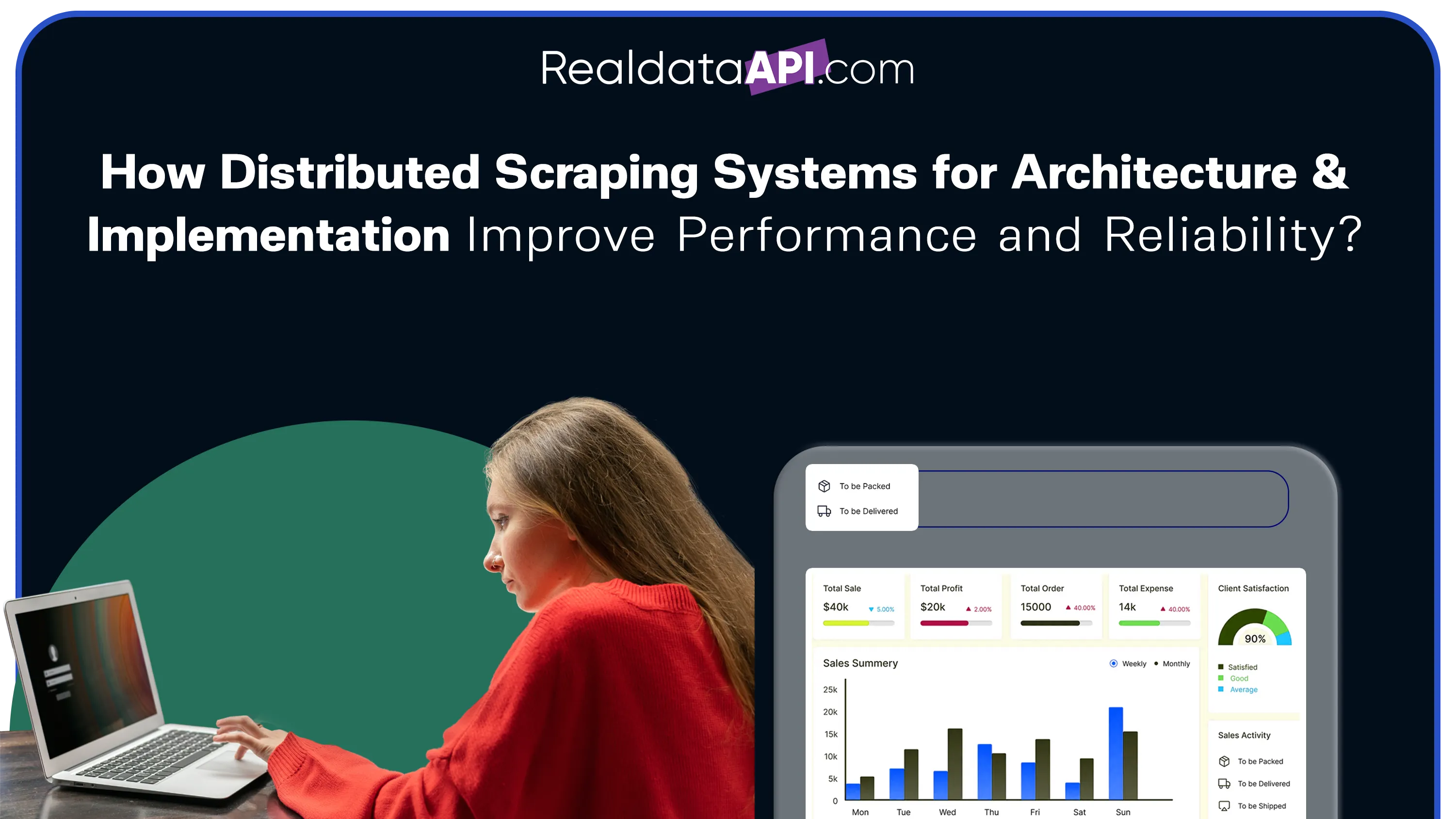

Structuring Data for Analytics and Business Intelligence

Dynamic websites generate large amounts of raw and unstructured information. Extracted content must be cleaned, normalized, and structured before it becomes valuable for analytics workflows.

Growing demand for high-quality web scraping datasets has changed how organizations consume scraped data for business intelligence and AI applications.

| Data Quality Metric | 2020 | 2026 |
|---|---|---|
| Structured Data Usage | 42% | 88% |
| Automated Data Cleaning | 28% | 79% |
| AI-Ready Dataset Generation | 18% | 72% |

Modern dataset pipelines support:

- JSON and CSV formatting

- AI-ready structured outputs

- Real-time analytics integration

- Duplicate elimination

- Metadata enrichment

Organizations using structured scraping datasets improved analytics efficiency by over 45% and reduced reporting errors significantly.
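The cleaning steps above (normalization, duplicate elimination, metadata enrichment, and JSON or CSV output) can be sketched with the Python standard library alone. The record shape used here, with `name` and `price` fields, is an assumption made for illustration; real pipelines would adapt the field names and parsing rules to their own data.

```python
import csv
import io
import json
from datetime import datetime, timezone

FIELDS = ["name", "price", "source_url", "scraped_at"]


def clean_records(raw_records: list[dict], source_url: str) -> list[dict]:
    """Normalize fields, drop duplicates by (name, price), and attach metadata."""
    seen: set[tuple[str, float]] = set()
    cleaned: list[dict] = []
    scraped_at = datetime.now(timezone.utc).isoformat()
    for raw in raw_records:
        # Collapse runs of whitespace left over from HTML layout.
        name = " ".join(str(raw.get("name", "")).split())
        price_text = str(raw.get("price", "")).replace("$", "").replace(",", "").strip()
        try:
            price = float(price_text)
        except ValueError:
            continue  # skip rows whose price cannot be parsed
        key = (name.lower(), price)
        if not name or key in seen:
            continue  # skip unnamed rows and case-insensitive duplicates
        seen.add(key)
        cleaned.append(
            {"name": name, "price": price, "source_url": source_url, "scraped_at": scraped_at}
        )
    return cleaned


def to_json(records: list[dict]) -> str:
    """Serialize cleaned records as a JSON array."""
    return json.dumps(records, indent=2)


def to_csv(records: list[dict]) -> str:
    """Serialize cleaned records as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

Deduplicating on a normalized key rather than on the raw text is the important design choice here: the same product scraped twice rarely arrives with identical whitespace, casing, or price formatting.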

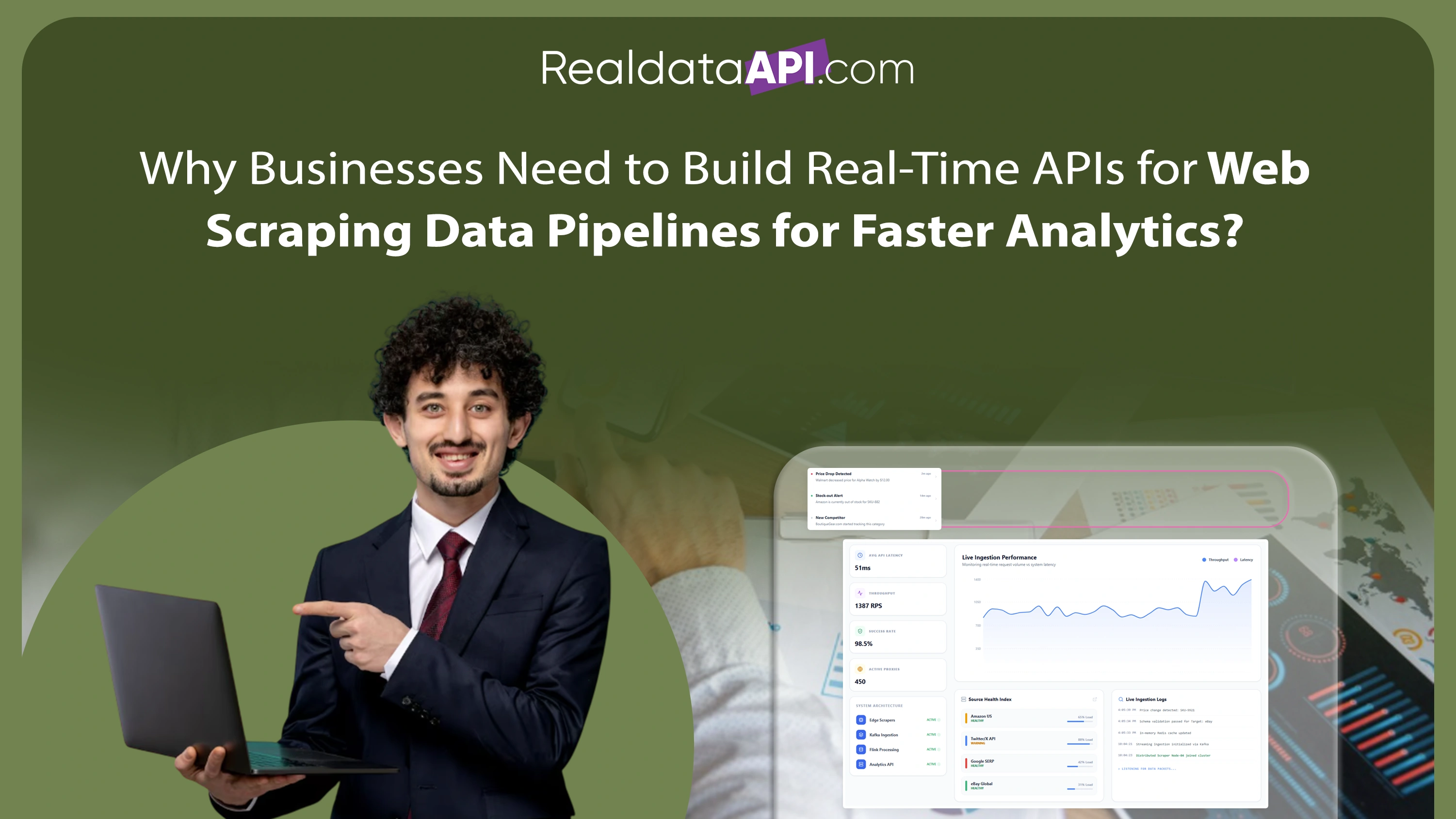

Why Choose Real Data API?

Modern enterprises require intelligent and scalable systems capable of handling dynamic, JavaScript-driven websites efficiently.

Real Data API helps organizations scrape JavaScript-heavy websites using advanced browser automation, distributed cloud infrastructure, and AI-powered extraction systems.

Key capabilities include:

- JavaScript rendering support

- Browser automation frameworks

- AJAX and API request interception

- Cloud-native scraping infrastructure

- Enterprise-grade crawling systems

- Structured dataset delivery pipelines

Real Data API enables businesses to extract accurate, real-time data from modern web applications while reducing operational complexity and improving scalability.

Conclusion

The growing complexity of modern web applications has made traditional scraping techniques insufficient for enterprise-scale data extraction. Businesses must now adopt advanced rendering and automation systems capable of interacting with JavaScript-heavy environments effectively.

By taking a step-by-step approach to scraping JavaScript-heavy websites, organizations can unlock valuable real-time insights from dynamic platforms across industries such as e-commerce, travel, finance, healthcare, and real estate.

From AJAX-based extraction to browser automation and enterprise crawling infrastructure, modern scraping technologies are transforming how businesses collect and process digital intelligence. Organizations implementing these solutions gain significant advantages in reporting accuracy, scalability, and competitive intelligence.

Real Data API empowers enterprises with intelligent scraping frameworks, browser automation systems, and scalable infrastructure designed for dynamic web environments.

Connect with Real Data API today to build scalable JavaScript scraping solutions and unlock accurate real-time intelligence from modern web applications!