Introduction

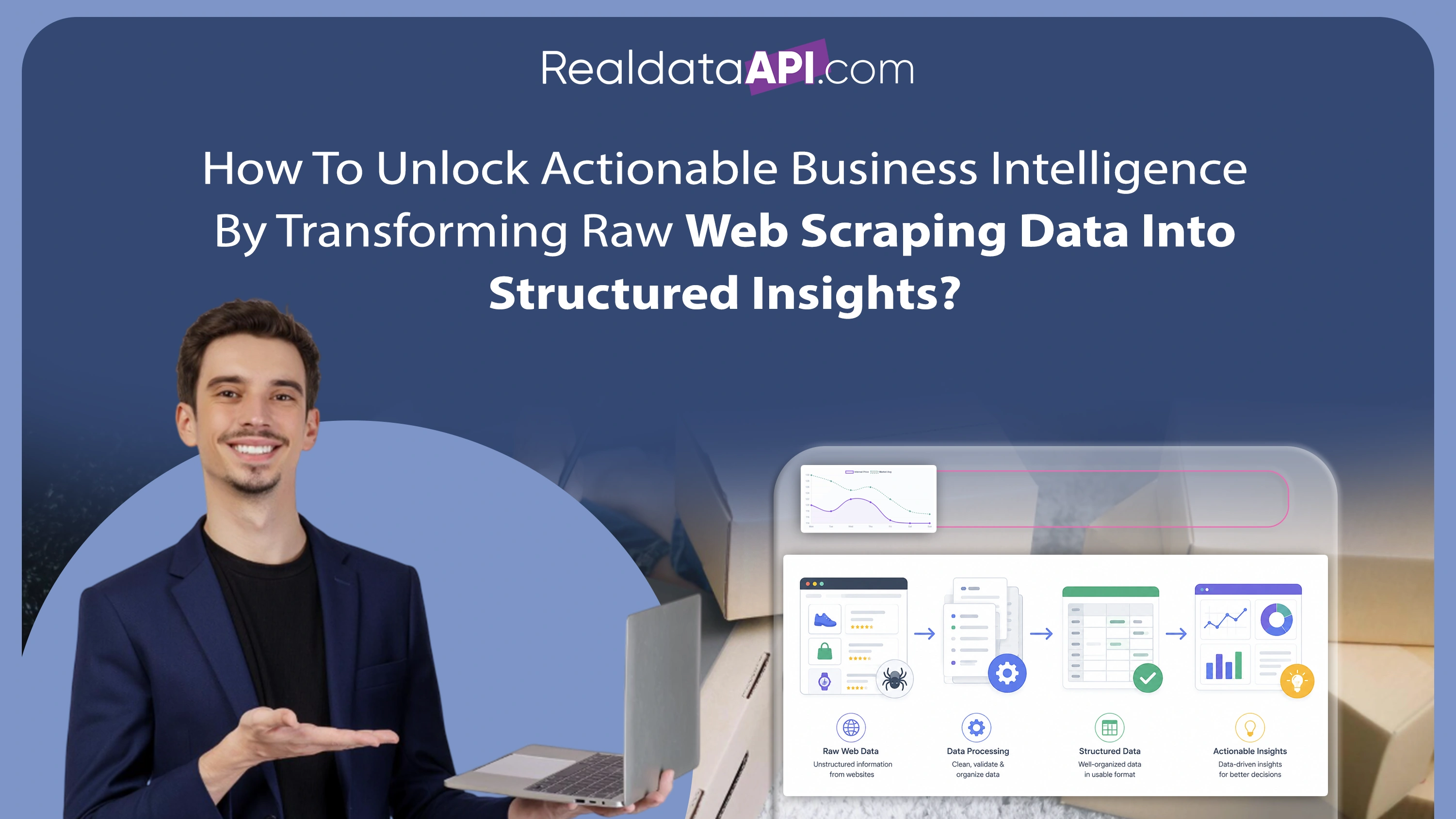

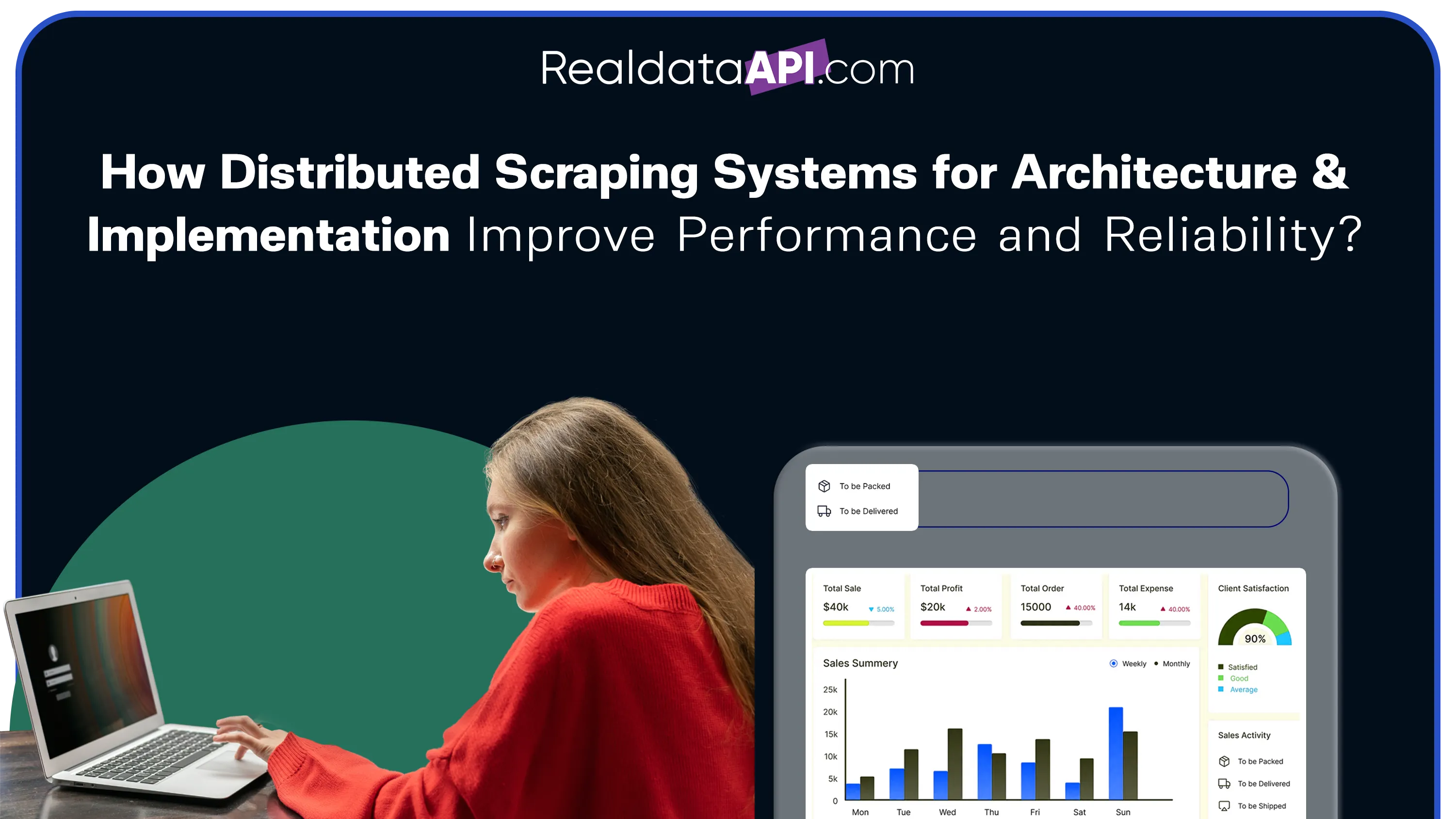

In today's data-intensive environment, distributed scraping systems have become essential for organizations handling massive volumes of web data. Traditional single-node scraping approaches struggle with scalability, speed, and reliability, especially when dealing with millions of requests. By dividing workloads across multiple nodes, a distributed architecture speeds up processing and reduces downtime. Modern Web Scraping Services integrate these systems to deliver consistent, high-performance data extraction at scale.

Between 2020 and 2026, the adoption of distributed systems in data engineering has grown by over 65%, driven by the need for real-time insights and scalable infrastructure. These systems not only improve efficiency but also enhance fault tolerance, ensuring uninterrupted operations even during failures.

This blog explores how distributed scraping systems enhance performance and reliability, the technologies behind them, and how businesses can implement these solutions to achieve scalable and efficient data pipelines.

Scaling Data Extraction Across Multiple Nodes

Running large-scale data scraping on distributed systems allows organizations to handle massive workloads efficiently. By distributing tasks across multiple servers, businesses can process large datasets in parallel, reducing execution time and improving throughput.

From 2020 to 2026, companies adopting distributed scraping have reported up to a 55% increase in data processing speed. This approach ensures that no single node becomes a bottleneck, enabling seamless scaling as data requirements grow.

| Year | Data Volume Processed | Speed Improvement |
|---|---|---|
| 2020 | 1–10 TB | +30% |
| 2023 | 10–50 TB | +45% |
| 2026 (Projected) | 50+ TB | +60% |

Distributed systems also enable horizontal scaling, allowing businesses to add more nodes as needed. This flexibility ensures that data extraction processes remain efficient and reliable, even under heavy workloads.
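One simple way to divide a crawl across nodes is to hash each URL and map the hash to a node. The sketch below is illustrative only: the node names, the `assign_node` and `partition` helpers, and the example URLs are assumptions made for this example, not part of any specific product.

```python
import hashlib
from collections import defaultdict


def assign_node(url: str, nodes: list[str]) -> str:
    """Pick a node for a URL using a stable hash (same URL -> same node)."""
    digest = hashlib.sha256(url.encode("utf-8")).hexdigest()
    return nodes[int(digest, 16) % len(nodes)]


def partition(urls: list[str], nodes: list[str]) -> dict[str, list[str]]:
    """Group URLs by the node that should fetch them."""
    shards: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        shards[assign_node(url, nodes)].append(url)
    return dict(shards)


if __name__ == "__main__":
    # Hypothetical inputs, used only to show the shape of the result.
    urls = [f"https://example.com/page/{i}" for i in range(1000)]
    nodes = ["node-a", "node-b", "node-c"]
    for node, shard in sorted(partition(urls, nodes).items()):
        print(node, len(shard))
```

Adding a node here only requires extending the `nodes` list. Note that plain modulo hashing reassigns most URLs when the node count changes; consistent hashing is a common alternative when that reshuffling is a concern.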

Enhancing Throughput with Parallel Execution

Combining parallel processing with load balancing significantly improves web scraping performance. Parallel processing divides a workload into smaller units that run simultaneously, while load balancing spreads those units evenly across nodes.

Between 2020 and 2026, organizations implementing parallel processing have achieved up to 70% faster data extraction rates. Load balancing further enhances efficiency by preventing overloading of individual nodes.

| Technique | Function | Efficiency Gain |
|---|---|---|
| Parallel Processing | Execute tasks simultaneously | +65% |
| Load Balancing | Distribute workload evenly | +50% |
| Task Scheduling | Optimize execution order | +40% |

These techniques ensure optimal resource utilization and minimize latency. By combining parallel processing with load balancing, businesses can achieve high-performance scraping operations that scale effortlessly.
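The two techniques can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `balance`, `fetch`, and `run_parallel` are hypothetical names, the per-task cost estimates are made up, and `fetch` is a placeholder rather than a real HTTP call.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor


def balance(tasks: list[tuple[str, int]], worker_count: int) -> list[list[str]]:
    """Greedy least-loaded assignment: each task goes to the worker with the
    smallest total estimated cost so far."""
    heap = [(0, w) for w in range(worker_count)]  # (current load, worker index)
    heapq.heapify(heap)
    plan: list[list[str]] = [[] for _ in range(worker_count)]
    # Placing the most expensive tasks first tends to give a more even split.
    for name, cost in sorted(tasks, key=lambda t: t[1], reverse=True):
        load, worker = heapq.heappop(heap)
        plan[worker].append(name)
        heapq.heappush(heap, (load + cost, worker))
    return plan


def fetch(url: str) -> str:
    """Placeholder for a real HTTP request (assumption for this sketch)."""
    return f"fetched {url}"


def run_parallel(urls: list[str], max_workers: int = 4) -> list[str]:
    """Run fetch() concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))


if __name__ == "__main__":
    print(balance([("a", 5), ("b", 3), ("c", 2), ("d", 2)], worker_count=2))
    print(run_parallel(["https://example.com/1", "https://example.com/2"]))
```

Threads suit scraping because most of the time is spent waiting on the network. The greedy balancer is a heuristic, not an optimal scheduler, but it keeps any single worker from accumulating a disproportionate share of the estimated cost.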

Ensuring Stability with Resilient Systems

Proxy rotation and fault tolerance are crucial for maintaining reliability in distributed scraping systems. Proxy rotation helps avoid IP bans, while fault tolerance ensures that tasks are automatically reassigned when a node or request fails.

From 2020 to 2026, businesses using advanced fault-tolerant systems have reduced downtime by over 50%. These systems detect failures in real time and reroute tasks to active nodes, ensuring uninterrupted operations.

| Feature | Benefit | Impact Level |
|---|---|---|
| Proxy Rotation | Avoid detection and blocking | High |
| Fault Tolerance | Maintain system stability | Very High |
| Auto Recovery | Restart failed tasks | High |

By integrating these features, organizations can ensure consistent data extraction even in challenging environments. This enhances both reliability and efficiency.
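A rough sketch of how rotation and retry can work together is shown below. It assumes a caller-supplied `fetch(url, proxy)` function and a hypothetical `FetchError` exception; the proxy addresses are placeholders, and no real network call is made.

```python
from itertools import cycle
from typing import Callable, Iterator, Optional


class FetchError(Exception):
    """Raised by the fetch function when a request fails (hypothetical)."""


def fetch_with_rotation(
    url: str,
    proxies: Iterator[str],
    fetch: Callable[[str, str], str],
    max_attempts: int = 3,
) -> str:
    """Try the URL through successive proxies until one succeeds."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        proxy = next(proxies)  # round-robin: each attempt uses the next proxy
        try:
            return fetch(url, proxy)
        except FetchError as exc:
            last_error = exc  # remember the failure and try the next proxy
    raise RuntimeError(f"all {max_attempts} attempts failed for {url}") from last_error


if __name__ == "__main__":
    pool = cycle(["proxy-1:8080", "proxy-2:8080", "proxy-3:8080"])

    def fake_fetch(url: str, proxy: str) -> str:
        # Simulate proxy-1 being blocked by the target site.
        if proxy.startswith("proxy-1"):
            raise FetchError("blocked")
        return f"{url} via {proxy}"

    print(fetch_with_rotation("https://example.com", pool, fake_fetch))
```

In a distributed setup, the final `RuntimeError` would typically be the signal to put the task back on a queue so another node can pick it up.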

Designing Robust Data Architectures

A well-defined architecture for big data extraction and analysis is essential for managing distributed scraping systems effectively. This architecture typically includes data ingestion, processing, storage, and analytics layers.

Between 2020 and 2026, organizations adopting advanced data architectures have improved processing efficiency by 60%. These architectures enable seamless integration of scraping pipelines with analytics platforms.

| Architecture Layer | Function | Efficiency Gain |
|---|---|---|
| Ingestion | Collect data from sources | +50% |
| Processing | Clean and transform data | +60% |
| Storage | Store structured datasets | +45% |

A robust architecture ensures that data flows smoothly from extraction to analysis. This enables businesses to derive actionable insights quickly and efficiently.
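The layered flow can be illustrated with a toy pipeline. Everything here is an assumption made for the example: the `Record` shape, the hard-coded rows standing in for scraper output, and the choice of SQLite as the store.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Record:
    url: str
    title: str


def ingest() -> list[dict]:
    """Ingestion layer: stand-in for raw scraper output."""
    return [
        {"url": "https://example.com/a", "title": "  Widget A "},
        {"url": "https://example.com/b", "title": ""},
        {"url": "https://example.com/c", "title": "Widget C"},
    ]


def process(raw: list[dict]) -> list[Record]:
    """Processing layer: trim whitespace and drop rows with no title."""
    cleaned = []
    for row in raw:
        title = row["title"].strip()
        if title:
            cleaned.append(Record(url=row["url"], title=title))
    return cleaned


def store(records: list[Record], conn: sqlite3.Connection) -> None:
    """Storage layer: persist structured rows."""
    conn.execute("CREATE TABLE IF NOT EXISTS pages (url TEXT PRIMARY KEY, title TEXT)")
    conn.executemany(
        "INSERT OR REPLACE INTO pages (url, title) VALUES (?, ?)",
        [(r.url, r.title) for r in records],
    )
    conn.commit()


def count_pages(conn: sqlite3.Connection) -> int:
    """Analytics layer: a trivial query over the stored data."""
    return conn.execute("SELECT COUNT(*) FROM pages").fetchone()[0]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    store(process(ingest()), conn)
    print(count_pages(conn))
```

Keeping each layer behind its own function means any one of them can later be swapped for a distributed component (a message queue for ingestion, a warehouse for storage) without touching the others.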

Leveraging Cloud Infrastructure for Scalability

Adopting cloud-based data scraping solutions allows businesses to scale their operations dynamically. Cloud platforms provide on-demand resources, enabling organizations to handle fluctuating workloads without investing in physical infrastructure.

From 2020 to 2026, cloud adoption in data scraping has grown by over 70%, driven by the need for flexibility and scalability.

| Cloud Feature | Benefit | Growth Rate |
|---|---|---|
| Auto Scaling | Adjust resources dynamically | +65% |
| Cost Efficiency | Pay-as-you-go model | +60% |
| Global Access | Distributed data collection | +55% |

Cloud-based solutions ensure high availability and reliability, making them ideal for distributed scraping systems. This approach enables businesses to scale efficiently while maintaining performance.
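Auto scaling usually comes down to a small sizing rule evaluated on a schedule. The function below is one possible rule, not any cloud provider's API: the name `desired_workers`, its parameters, and the default limits are all assumptions for illustration.

```python
import math


def desired_workers(
    queue_depth: int,
    urls_per_worker_per_min: int,
    target_drain_minutes: int,
    min_workers: int = 1,
    max_workers: int = 50,
) -> int:
    """Workers needed to drain the queue within the target time, clamped
    between min_workers and max_workers."""
    capacity_per_worker = urls_per_worker_per_min * target_drain_minutes
    needed = math.ceil(queue_depth / capacity_per_worker)
    return max(min_workers, min(max_workers, needed))


if __name__ == "__main__":
    # 12,000 queued URLs, 60 URLs per worker per minute, drain in 10 minutes.
    print(desired_workers(12_000, 60, 10))
```

The upper bound acts as a cost guard under a pay-as-you-go model, and the lower bound keeps at least one worker available when the queue is empty.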

Automating Workflows for Efficiency

Integrating Robotic Process Automation into distributed scraping systems enhances efficiency by automating repetitive tasks. RPA tools can manage data extraction, processing, and reporting workflows with minimal human intervention.

Between 2020 and 2026, RPA adoption has increased by 65%, enabling faster and more accurate data operations.

| Automation Feature | Application | Impact Level |
|---|---|---|
| Task Automation | Reduce manual effort | High |
| Workflow Management | Streamline processes | Very High |
| Error Handling | Minimize disruptions | High |

By automating workflows, businesses can improve productivity and reduce operational costs. This ensures that distributed scraping systems remain efficient and scalable.

Why Choose Real Data API?

When it comes to delivering scalable solutions, AI Chatbot and distributed scraping systems are at the core of Real Data API's offerings. The platform combines advanced distributed architectures with intelligent automation to provide reliable and high-performance data extraction.

Real Data API leverages cutting-edge technologies such as AI-driven monitoring, proxy management, and cloud infrastructure to ensure seamless operations. Its solutions are designed to handle high-volume data requirements while maintaining accuracy and efficiency.

With a focus on innovation and scalability, Real Data API empowers businesses to build robust data pipelines and achieve better outcomes.

Conclusion

In a rapidly evolving digital landscape, Web Data Monitoring and distributed scraping systems play a critical role in ensuring efficient and reliable data extraction. By leveraging distributed architectures, parallel processing, and automation, businesses can handle massive workloads with ease.

From improving performance to enhancing fault tolerance, these systems provide the foundation for scalable data operations. As data volumes continue to grow, adopting distributed scraping solutions will be essential for staying competitive.

Ready to transform your data operations? Partner with Real Data API today and unlock the full potential of distributed scraping systems.