Introduction

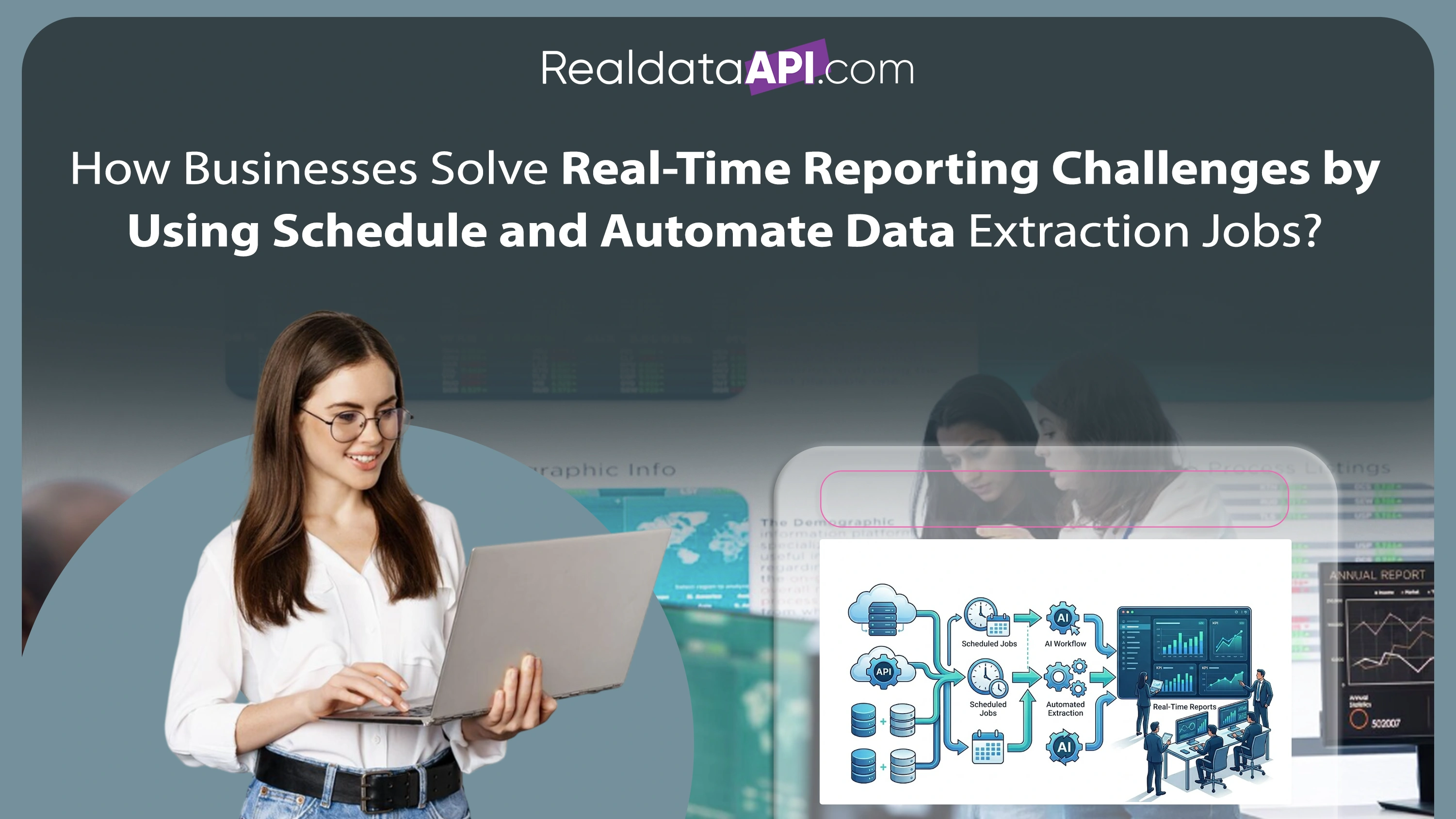

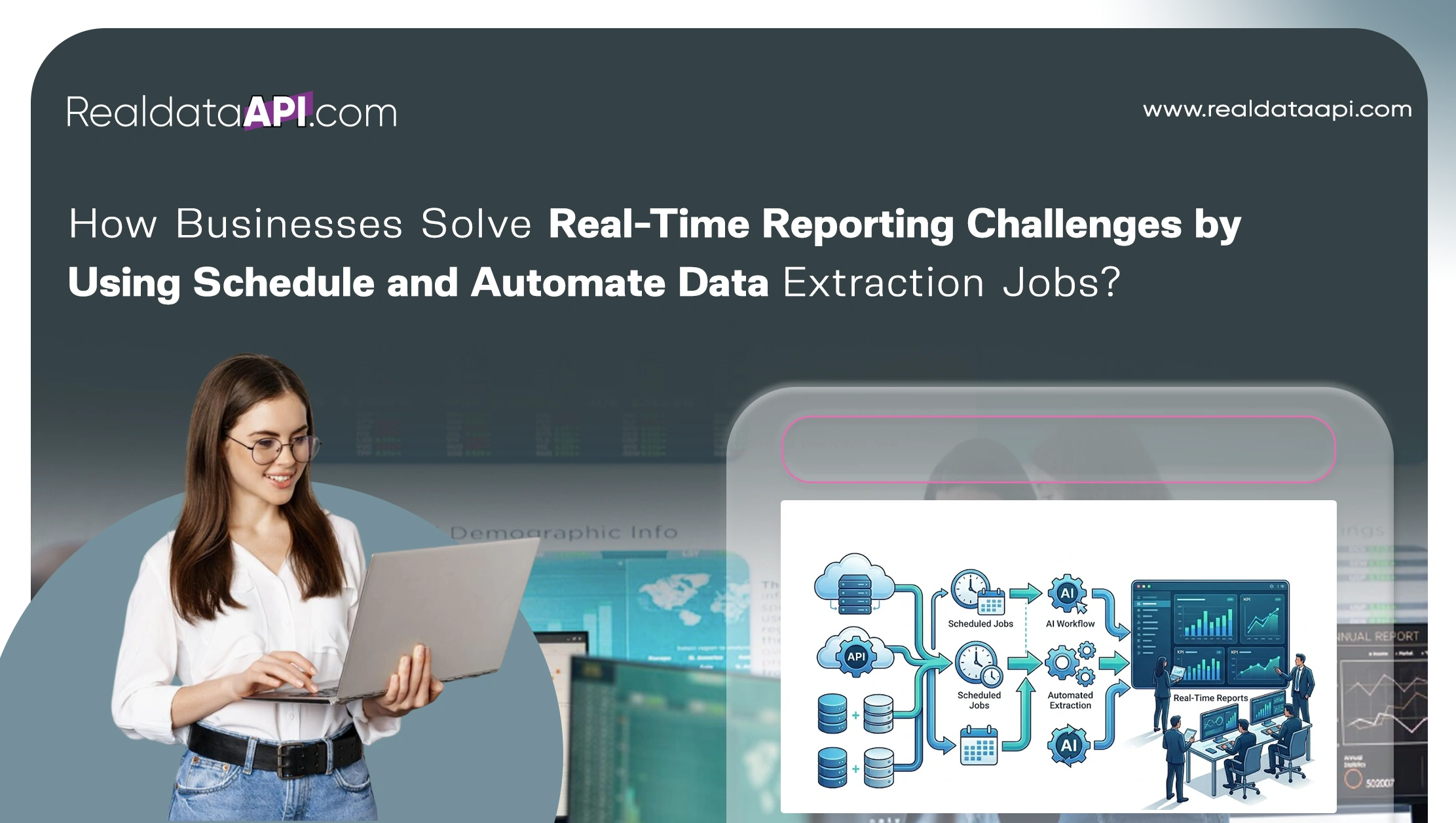

Modern enterprises operate in highly dynamic digital environments where decisions depend on timely and accurate information. Manual reporting cannot keep pace with rapidly changing market conditions, competitor activity, and customer behavior. Organizations increasingly schedule and automate data extraction jobs to eliminate reporting delays, reduce operational inefficiencies, and maintain continuous access to real-time insights.

The growing demand for scalable automation has also accelerated the adoption of Web Scraping APIs, which let enterprises feed live data streams directly into analytics platforms, dashboards, and business intelligence systems. Automated extraction pipelines now play a critical role in sectors such as retail, finance, healthcare, travel, logistics, and e-commerce.

Between 2020 and 2026, enterprise automation adoption increased significantly, with more than 82% of businesses integrating automated data workflows into their reporting infrastructure. Companies leveraging intelligent extraction systems reported up to 55% faster reporting cycles, 40% lower manual workload, and improved operational accuracy.

Real-time data automation enables businesses to monitor competitor pricing, product availability, customer reviews, and market trends continuously without manual intervention. This blog explores how intelligent scheduling and automation systems help organizations solve reporting challenges while improving scalability, efficiency, and business performance.

Accelerating Reporting Through Intelligent Scheduling Systems

Enterprises handling large-scale web data operations require advanced scheduling systems capable of executing thousands of extraction tasks automatically. Manual scraping workflows often result in delayed insights, inconsistent data quality, and operational bottlenecks.

| Year | Automated Reporting Adoption | Manual Reporting Dependency |
|---|---|---|
| 2020 | 38% | 62% |
| 2022 | 52% | 48% |
| 2024 | 71% | 29% |
| 2026 | 86% | 14% |

Using the best tools for scheduling scraping jobs at scale lets organizations automate recurring extraction tasks while maintaining reliability and performance.

Popular enterprise scheduling solutions include:

- Apache Airflow

- Kubernetes CronJobs

- AWS EventBridge

- Google Cloud Scheduler

- Jenkins automation workflows

These technologies help enterprises:

- Reduce reporting delays

- Improve workflow consistency

- Minimize human intervention

- Support real-time monitoring operations

Between 2020 and 2026, enterprises adopting automated scheduling systems achieved up to 48% faster analytics delivery and significantly improved operational scalability.
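To make the scheduling idea concrete, here is a minimal sketch in plain Python of the decision every scheduler makes: which recurring jobs are due right now. The `Job` class, the job names, and the intervals are all hypothetical and chosen for illustration; tools such as Apache Airflow or Kubernetes CronJobs handle this (plus persistence, distribution, and alerting) for you.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Job:
    """A hypothetical recurring extraction job (illustrative only)."""
    name: str
    interval: timedelta  # how often the job should run
    last_run: datetime   # when the job last completed


def due_jobs(jobs, now):
    """Return names of jobs whose interval has elapsed, most overdue first."""
    overdue = [
        (now - (job.last_run + job.interval), job.name)
        for job in jobs
        if job.last_run + job.interval <= now
    ]
    return [name for _, name in sorted(overdue, reverse=True)]


now = datetime(2026, 1, 1, 12, 0)
jobs = [
    Job("competitor_prices", timedelta(hours=1), datetime(2026, 1, 1, 10, 30)),
    Job("product_reviews", timedelta(days=1), datetime(2026, 1, 1, 0, 0)),
    Job("stock_levels", timedelta(minutes=15), datetime(2026, 1, 1, 11, 40)),
]
print(due_jobs(jobs, now))  # ['competitor_prices', 'stock_levels']
```

Ordering by how overdue a job is works as a simple priority rule here; a production scheduler would typically also account for job cost and concurrency limits.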

Streamlining Enterprise Data Pipelines

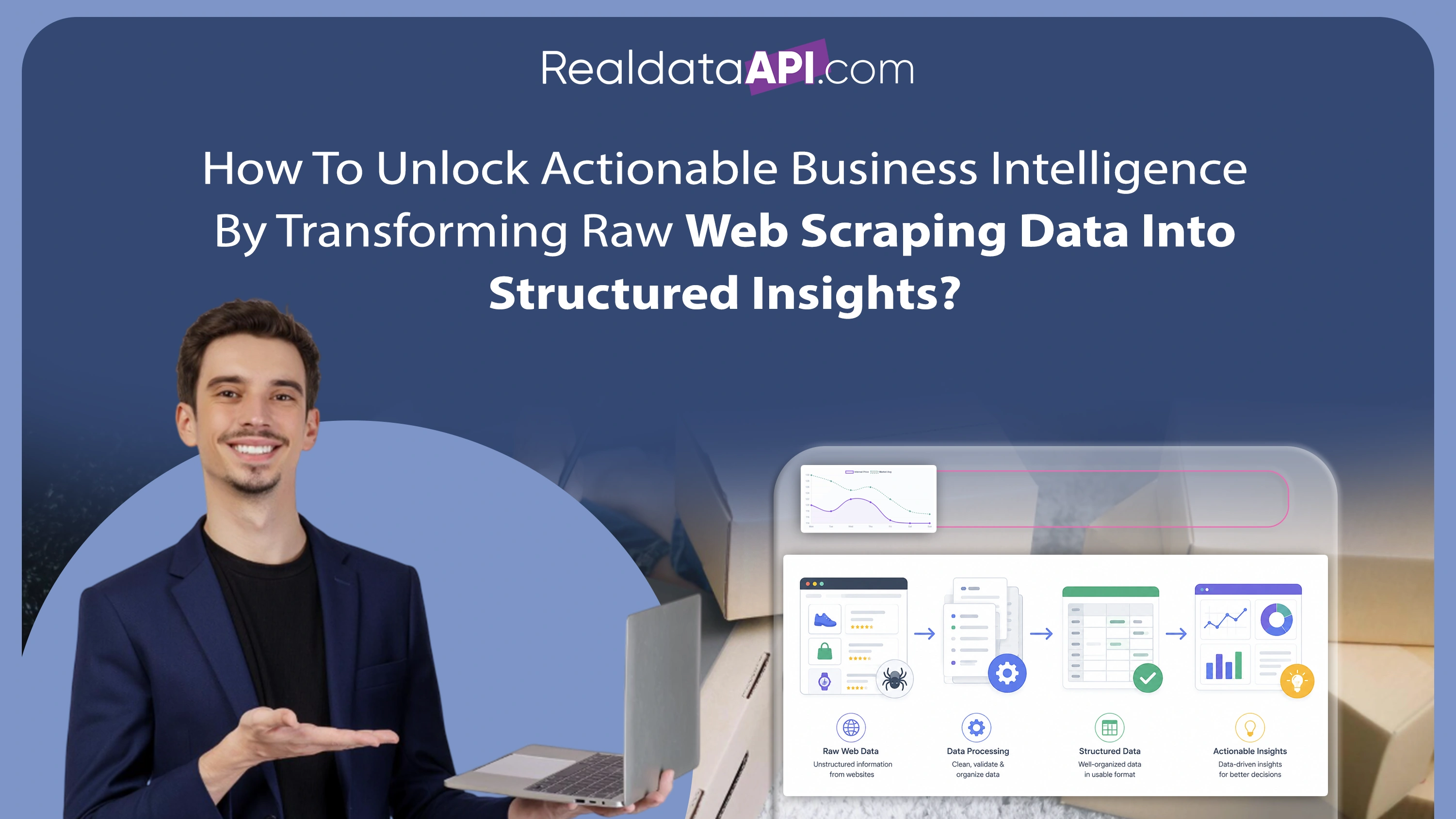

Modern businesses require integrated systems capable of extracting, processing, and distributing data automatically across departments and analytics platforms.

| Data Pipeline Metric | 2020 | 2023 | 2026 |
|---|---|---|---|
| Automated Pipeline Usage | 35% | 58% | 84% |
| Average Processing Time | 6 hrs | 2 hrs | < 30 mins |
| Error Reduction | 18% | 35% | 62% |

Implementing tools for automating data pipelines and scraping tasks lets enterprises simplify complex workflows and improve reporting efficiency.

Automated pipelines provide:

- Continuous data ingestion

- Structured transformation processes

- Real-time dashboard updates

- Faster business intelligence reporting

- Reduced operational overhead

Organizations integrating automated pipelines into reporting ecosystems reported up to 50% higher data accuracy and significant improvements in decision-making speed between 2020 and 2026.
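As a rough illustration of continuous ingestion followed by structured transformation, the sketch below chains three small stages with Python generators. The field names (`sku`, `price`), the sample records, and the dictionary standing in for a data warehouse are assumptions made for this example, not part of any specific product.

```python
def extract(raw_rows):
    """Yield raw records; stands in for a scraper or API client."""
    yield from raw_rows


def transform(rows):
    """Normalize fields and drop records without a usable SKU or price."""
    for row in rows:
        try:
            sku = row["sku"].strip().upper()
            price = float(str(row["price"]).replace("$", "").replace(",", ""))
        except (KeyError, ValueError):
            continue  # skip malformed records instead of failing the run
        yield {"sku": sku, "price": price}


def load(rows, store):
    """Upsert records into a dict keyed by SKU; returns the number loaded."""
    count = 0
    for row in rows:
        store[row["sku"]] = row["price"]
        count += 1
    return count


raw = [
    {"sku": " ab-1 ", "price": "$1,299.00"},
    {"sku": "cd-2", "price": "n/a"},  # malformed price, will be skipped
    {"sku": "ef-3", "price": 15},
]
warehouse = {}
loaded = load(transform(extract(raw)), warehouse)
print(loaded, warehouse)  # 2 {'AB-1': 1299.0, 'EF-3': 15.0}
```

Because each stage is a generator, records flow through one at a time, so the same structure works whether the source yields three rows or three million.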

Managing High-Volume Recurring Workloads

Large enterprises often need to execute recurring extraction tasks across thousands of websites, marketplaces, and online platforms daily. Efficient workload management is essential for maintaining data consistency and operational reliability.

The ability to manage recurring scraping jobs efficiently has become a critical factor for businesses operating real-time reporting systems.

| Operational Challenge | Reduction Achieved Through Automation |
|---|---|
| Reporting Delays | 55% |
| Manual Intervention | 70% |
| Data Inconsistency | 48% |
| Downtime Events | 35% |

Recurring automation frameworks help businesses:

- Schedule hourly or daily extractions

- Monitor job execution status

- Automatically retry failed tasks

- Optimize resource utilization

Between 2020 and 2026, enterprises implementing recurring workload automation reduced operational costs by nearly 38% while substantially improving reporting reliability.
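Automatic retries are one of the simplest of these capabilities to picture in code. The sketch below shows one common approach, exponential backoff, where each wait doubles after a failure. The function name, the default attempt count, and the flaky example task are all assumptions for illustration; real frameworks usually expose retries as configuration rather than hand-written loops.

```python
import time


def run_with_retries(task, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Run task(); after a failure wait base_delay * 2**attempt, then retry."""
    for attempt in range(max_attempts):
        try:
            return task()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)


# Hypothetical flaky job that fails twice before succeeding.
calls = {"n": 0}


def flaky_extract():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


delays = []  # record the waits instead of actually sleeping
result = run_with_retries(flaky_extract, sleep=delays.append)
print(result, delays)  # ok [1.0, 2.0]
```

Passing `sleep` as a parameter keeps the example fast to run and easy to test; in production the default `time.sleep` would apply the real delays.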

Coordinating Complex Multi-Stage Workflows

As enterprise data ecosystems become more sophisticated, businesses require advanced orchestration systems capable of coordinating multiple extraction, transformation, and delivery stages simultaneously.

The rise of workflow orchestration for large-scale scraping projects has transformed how organizations manage enterprise automation infrastructure.

| Workflow Capability | 2020 | 2026 |
|---|---|---|
| Multi-Stage Automation | 28% | 82% |
| Real-Time Workflow Monitoring | 22% | 78% |
| AI-Based Task Optimization | 12% | 64% |

Workflow orchestration tools help enterprises:

- Coordinate distributed scraping nodes

- Manage dependencies between jobs

- Automate ETL processing stages

- Monitor infrastructure performance in real time

Organizations leveraging orchestrated workflows achieved up to 60% faster project execution and significantly improved operational transparency.
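Managing dependencies between jobs comes down to ordering stages so that nothing runs before its inputs are ready. As a minimal sketch, Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) can group stages into waves that are safe to run in parallel. The stage names below are hypothetical, and a full orchestrator would add scheduling, retries, and monitoring on top of this ordering step.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical stages; each key maps to the stages it depends on.
dependencies = {
    "extract_prices": set(),
    "extract_reviews": set(),
    "transform": {"extract_prices", "extract_reviews"},
    "load_warehouse": {"transform"},
    "refresh_dashboard": {"load_warehouse"},
}


def execution_waves(deps):
    """Group stages into waves; stages within a wave can run in parallel."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished to unlock the next one
    return waves


for wave in execution_waves(dependencies):
    print(wave)
# ['extract_prices', 'extract_reviews']
# ['transform']
# ['load_warehouse']
# ['refresh_dashboard']
```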

Expanding Business Efficiency Through Managed Automation

Many enterprises now prefer managed data automation providers instead of maintaining internal scraping infrastructure. Outsourcing automation operations allows organizations to focus on analytics and strategic decision-making rather than infrastructure management.

The demand for Web Scraping Services has grown rapidly across industries requiring scalable and compliant data extraction systems.

| Year | Global Managed Scraping Services Market |
|---|---|
| 2020 | $600M |
| 2022 | $950M |
| 2024 | $1.5B |
| 2026 | $2.4B |

Managed services offer several advantages:

- Enterprise-grade infrastructure

- Automated maintenance and scaling

- Real-time API integrations

- Compliance-focused extraction systems

- High-availability cloud environments

Businesses outsourcing automation services reported up to 42% lower operational complexity and improved reporting efficiency between 2020 and 2026.

Intelligent Crawling for Enterprise Reporting Systems

Large-scale reporting operations require advanced crawling systems capable of collecting structured insights from dynamic websites and digital platforms continuously.

Enterprise Web Crawling technologies powered by AI and distributed infrastructure have become essential for modern reporting ecosystems.

| Capability | 2020 | 2026 |
|---|---|---|
| Real-Time Data Collection | 25% | 88% |
| Dynamic Content Extraction | 18% | 79% |
| AI-Based URL Discovery | 15% | 73% |

Enterprise crawling systems enable businesses to:

- Monitor competitor activities continuously

- Track product and pricing updates

- Analyze market trends in real time

- Collect structured datasets automatically

Between 2020 and 2026, enterprises implementing intelligent crawling systems improved market reporting accuracy by more than 50%.
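At its core, URL discovery is a queue of pages to visit plus a record of pages already seen. The sketch below is a plain breadth-first crawl, not an AI-based system: it resolves relative links, strips `#fragment` suffixes so the same page is not visited twice, and stops at a page limit. The `get_links` callback and the in-memory `site` are hypothetical stand-ins for real HTTP fetching and HTML parsing.

```python
from collections import deque
from urllib.parse import urldefrag, urljoin


def crawl(start_url, get_links, max_pages=100):
    """Breadth-first crawl; get_links(url) returns hrefs found on that page."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        for href in get_links(url):
            # Resolve relative links and drop fragments before deduplicating.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


# Hypothetical in-memory site standing in for real HTTP requests.
site = {
    "https://example.com/": ["/products", "/about#team"],
    "https://example.com/products": ["/", "/products/1", "/products/1#reviews"],
    "https://example.com/products/1": [],
    "https://example.com/about": ["/products"],
}
pages = crawl("https://example.com/", lambda url: site.get(url, []))
print(pages)
```

A crawler used against live sites would also need to respect robots.txt rules and rate limits, which this sketch leaves out.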

Why Choose Real Data API?

Modern enterprises require intelligent automation systems capable of delivering reliable and scalable real-time data extraction.

Web Scraping Datasets from Real Data API provide businesses with structured, analytics-ready information that supports automated reporting and strategic decision-making.

With expertise in helping organizations schedule and automate data extraction jobs, Real Data API delivers enterprise-grade solutions designed for performance, scalability, and operational efficiency.

Key capabilities include:

- Automated scraping job scheduling

- Distributed cloud-based extraction systems

- Real-time API integrations

- Workflow orchestration and monitoring

- AI-powered data processing pipelines

- Enterprise-scale crawling infrastructure

Real Data API helps businesses reduce manual workload, eliminate reporting delays, and improve operational intelligence through advanced automation technologies.

Conclusion

The increasing demand for real-time insights is driving enterprises toward intelligent automation systems that eliminate reporting delays and improve business agility. Organizations adopting modern extraction frameworks gain significant advantages in scalability, operational efficiency, and analytics performance.

By choosing to schedule and automate data extraction jobs, businesses can streamline reporting workflows, reduce manual intervention, and ensure continuous access to accurate market intelligence.

From intelligent scheduling systems to enterprise crawling infrastructure and workflow orchestration platforms, automation technologies are reshaping how organizations manage large-scale data operations. Businesses leveraging these capabilities achieve faster reporting cycles, lower operational costs, and stronger competitive positioning.

Real Data API empowers enterprises with scalable automation infrastructure, real-time extraction systems, and intelligent reporting solutions tailored for modern business environments.

Connect with Real Data API today to automate your data extraction workflows and transform enterprise reporting with scalable real-time intelligence solutions!