Introduction

The United States housing market is one of the most data-rich, yet data-fragmented, markets in the world. Web scraping housing market trends across the United States has become an essential practice for analysts and investors who need to make sense of the millions of property listings, rental prices, neighborhood statistics, and historical transaction records published online every day. That data is scattered across dozens of platforms, county assessor websites, Multiple Listing Services (MLS), and rental portals. For anyone who wants to truly understand this market, the challenge is not a lack of data. It is the ability to collect, normalize, and analyze it at scale.

This is exactly where web scraping becomes a powerful asset. Whether you are an investor scouting undervalued markets, a fintech startup building a valuation model, or a researcher studying affordability trends, the ability to extract housing market data in the United States programmatically gives you a decisive analytical edge. This article explores the methods, tools, sources, and best practices behind effective USA housing market data collection using web scraping and APIs.

- 142M+ housing units in the US

- $4.7T in annual real estate transactions

- 50+ major listing platforms scraped

- 10K+ US cities with rental data online

Why the US Housing Market Demands Better Data

The American housing market is notoriously localized. A city like Austin, Texas, can see median home prices surge 40% over two years while a market like Cleveland, Ohio, moves slowly and steadily. National averages mask these hyper-local dynamics almost entirely. To make informed decisions — whether buying, renting, developing, or investing — granular, city-level, and even ZIP-code-level data is essential.

Traditional data sources such as the US Census Bureau, the Federal Housing Finance Agency (FHFA), or the National Association of Realtors provide periodic reports. These are valuable but often released with lags of weeks or months. Web scraping bridges this gap: it enables real-time or near real-time extraction of property listings, rental prices, days on market, price reductions, and dozens of other signals directly from the platforms where they are published.

"The ability to scrape property listings and pricing data in the USA in near real time has become as fundamental to real estate analysis as a financial terminal is to equity research."

Key Data Sources for Real Estate Scraping

Before building any scraper or pipeline, it is important to understand which sources are most valuable and what data they expose.

1. Major Listing Portals

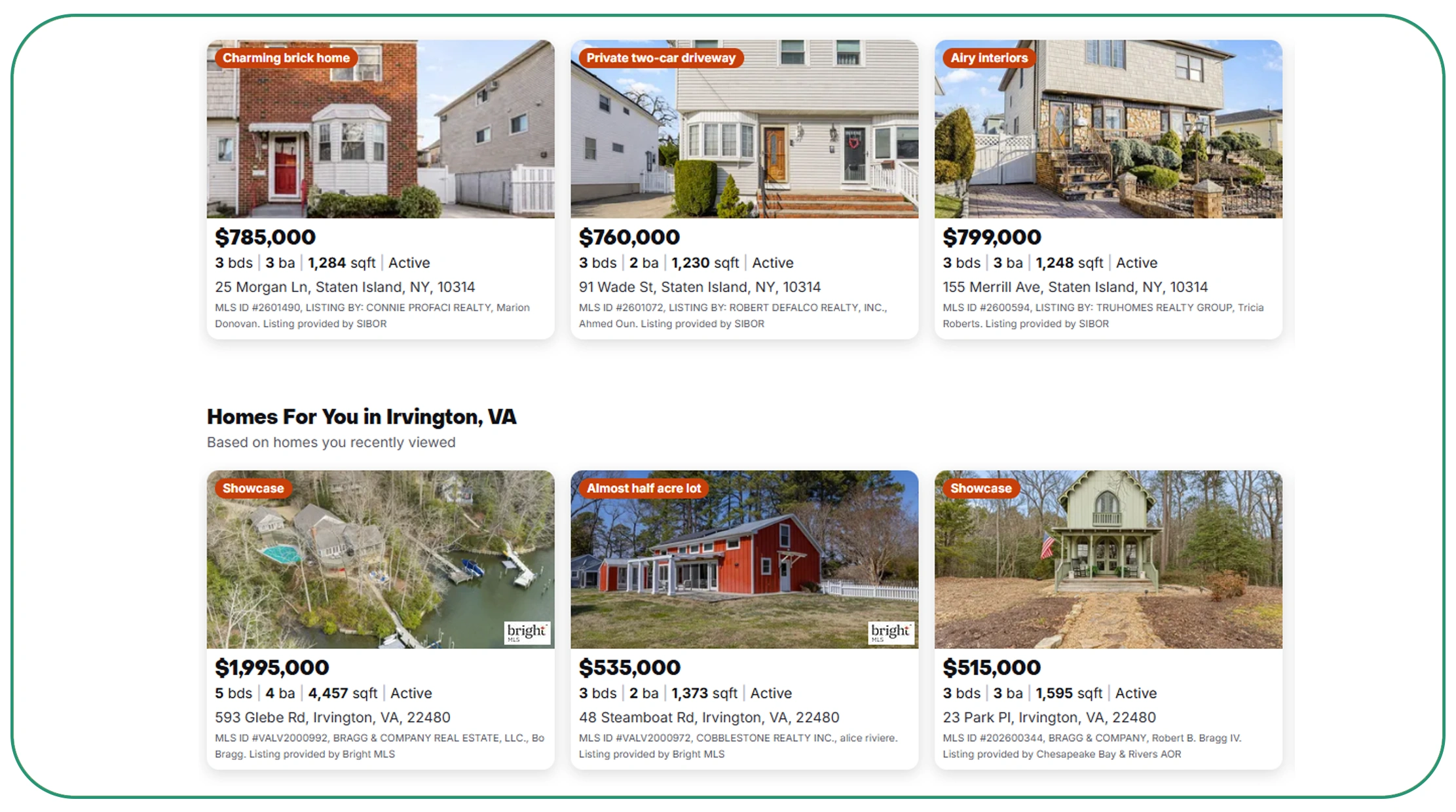

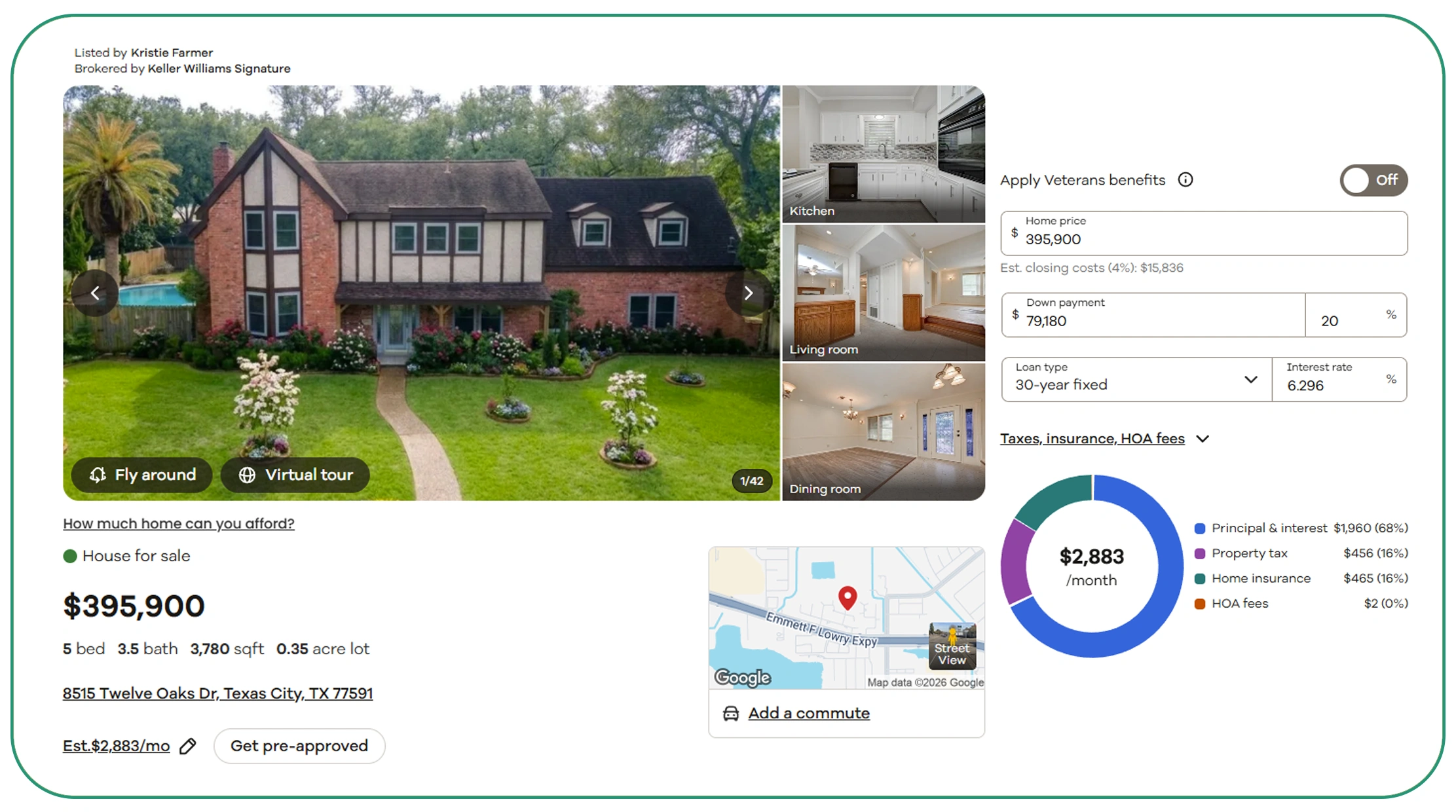

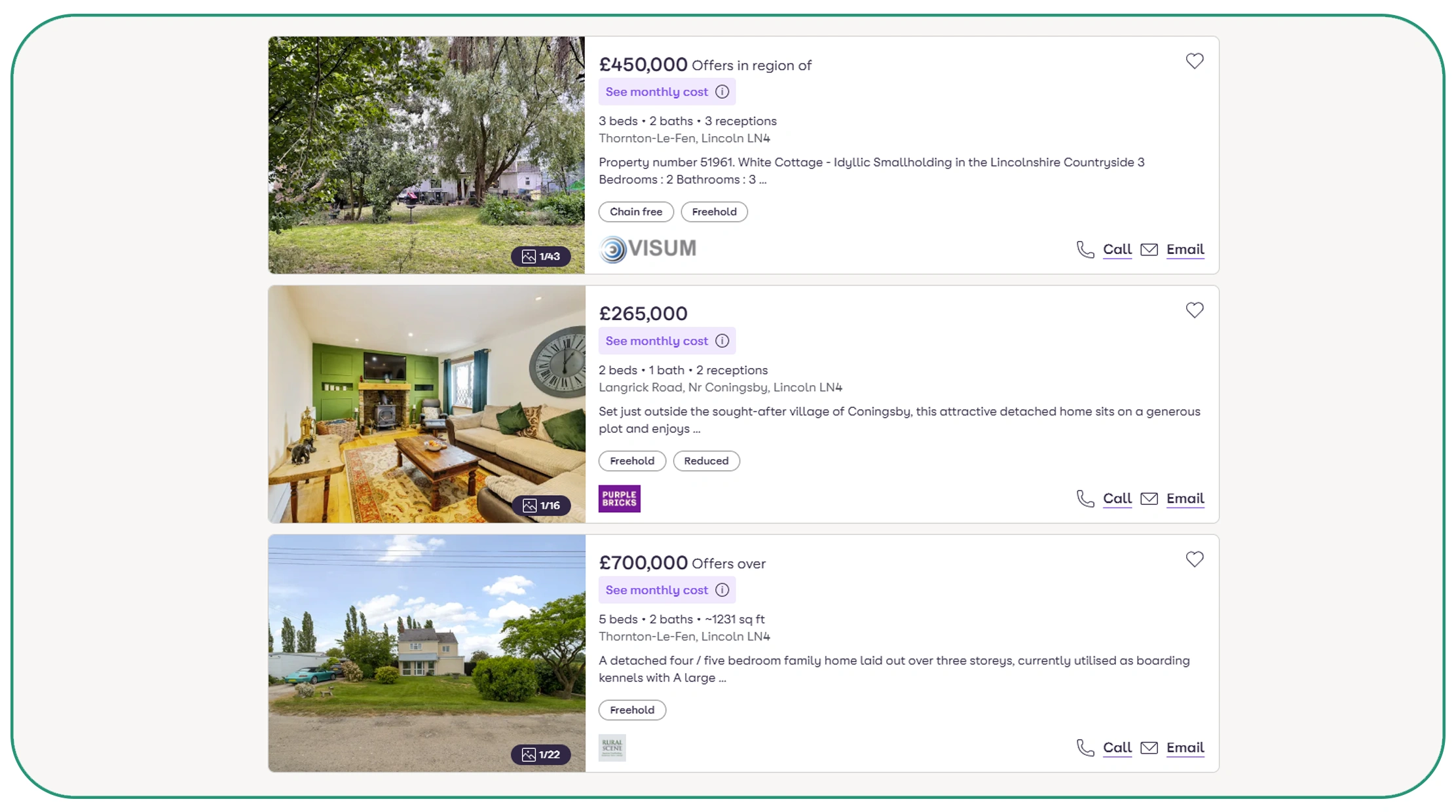

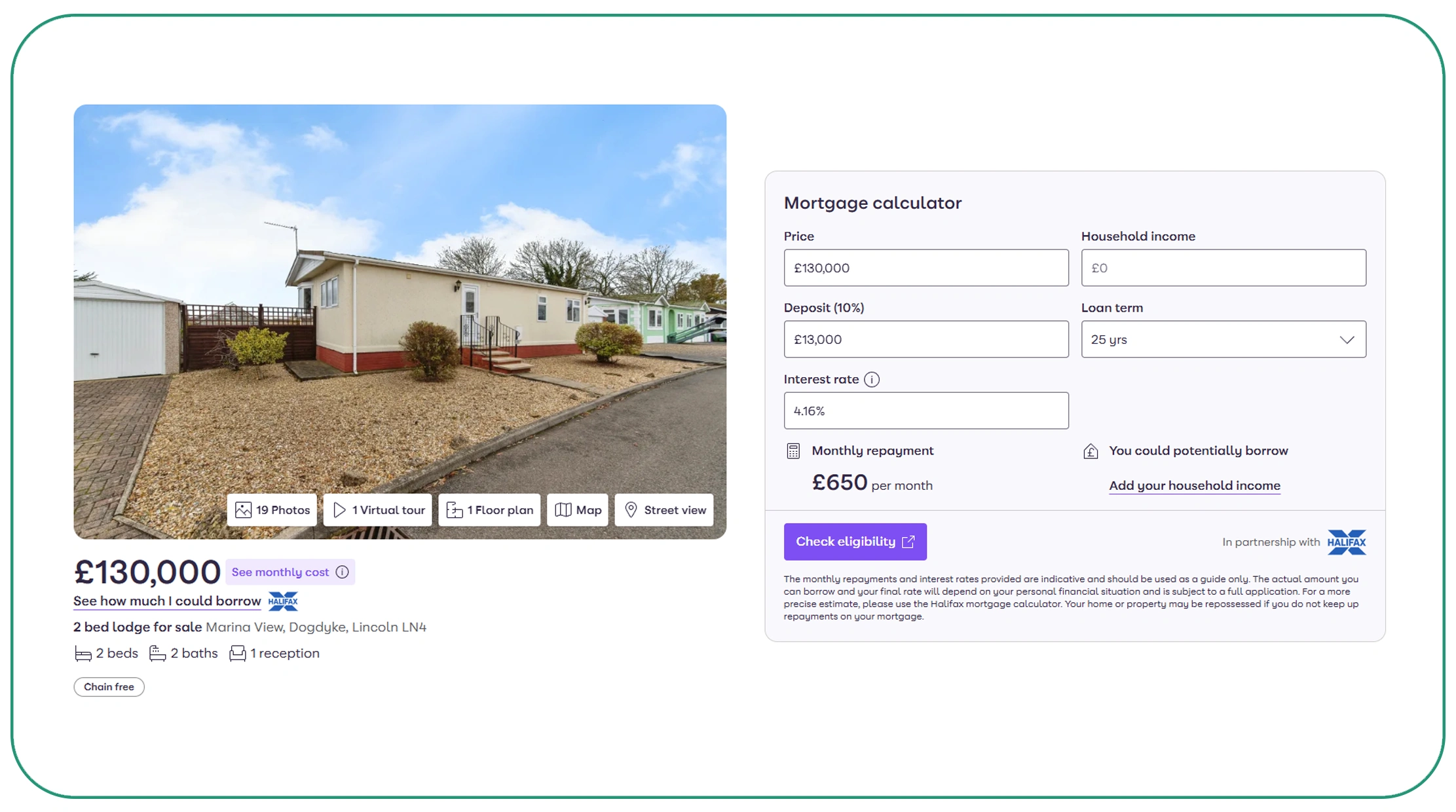

Platforms like Zillow, Realtor.com, Redfin, Trulia, and Homes.com collectively publish millions of active property listings across the United States. Each listing typically includes the asking price, square footage, number of bedrooms and bathrooms, lot size, days on market, listing status, school district ratings, and neighborhood demographic data. These platforms are the primary targets when the goal is to scrape property listings and pricing data in the USA for buy-side or sell-side analysis.

2. Rental Platforms

Apartment List, Apartments.com, Rent.com, Craigslist, and Zumper are among the leading sources for rental market intelligence. Scraping these platforms and their APIs city by city allows analysts to track average rent per square foot, vacancy trends, concessions, and lease term patterns by city, neighborhood, or ZIP code. This data is invaluable for landlords, multifamily developers, and urban planners.

3. County Assessor and Tax Records

Public government databases — including county tax assessors, recorder offices, and court records — contain ownership history, assessed valuations, transfer prices, and lien data. Though less visually polished than consumer portals, these databases are critical for transaction-level analysis and building a comprehensive real estate dataset with verified historical records.

4. Short-Term Rental Platforms

Airbnb and Vrbo data provides a different but equally important lens: short-term rental yields, occupancy rates, and nightly price fluctuations can signal the desirability and investment potential of specific neighborhoods. Third-party tools like AirDNA aggregate and resell this data, but direct scraping can capture real-time listing details not available through commercial datasets.

Technical Approaches to Scrape Property Listings and Pricing Data

There is no single approach to web scraping real estate data. The right method depends on the target site's architecture, the volume of data required, and the frequency of collection. The most common strategies include:

- Static HTML scraping using Python libraries like BeautifulSoup and Requests works well for pages that render their content server-side. Many county assessor portals and older listing aggregators fall into this category.

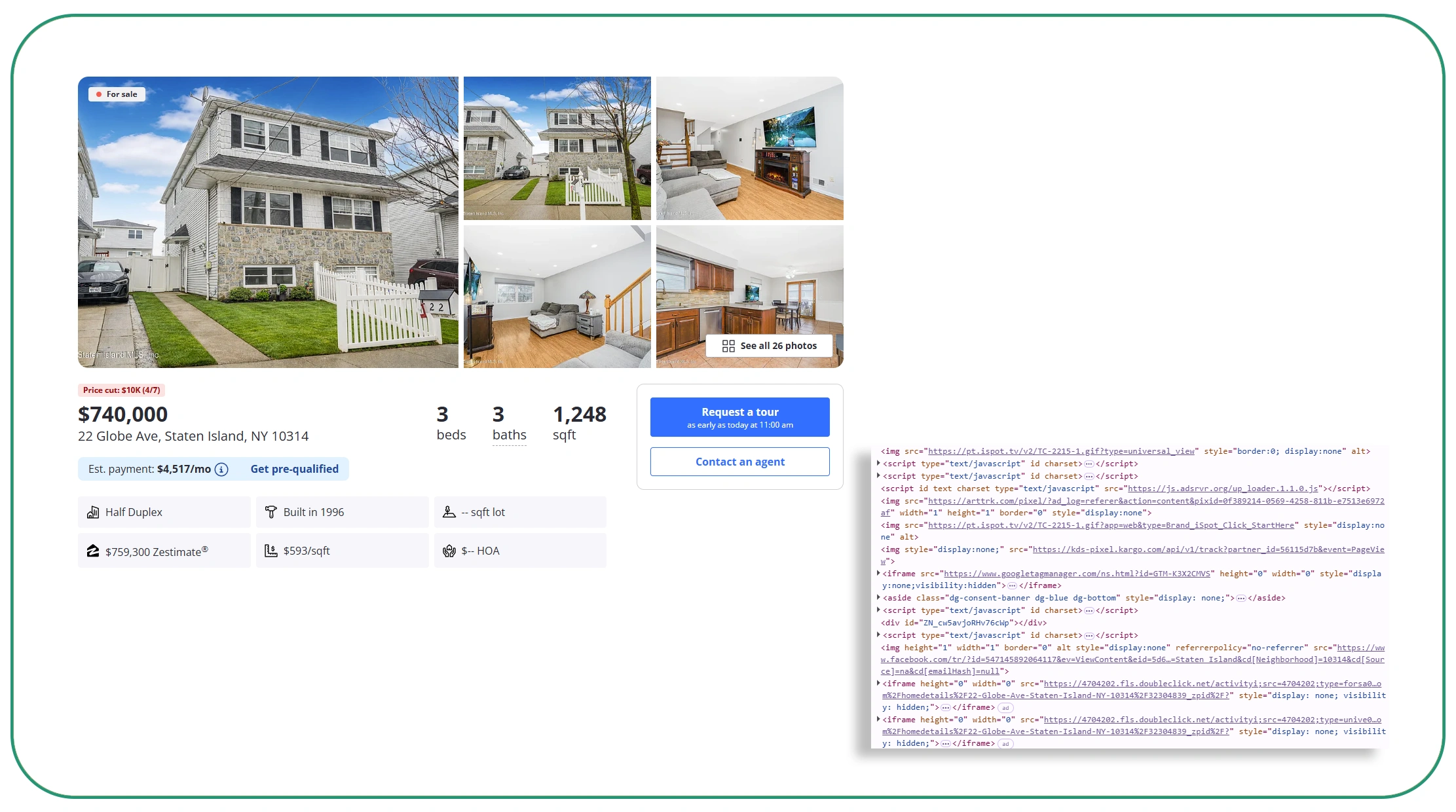

- JavaScript-rendered scraping using Selenium, Playwright, or Puppeteer is necessary for modern single-page applications (SPAs) like Zillow and Redfin, which load listing data dynamically via internal APIs after the initial page load.

- API interception — often called "stealth API scraping" — involves inspecting browser network calls to identify the underlying JSON API endpoints that power a website's UI and then querying those endpoints directly. This approach is faster and more structured than parsing raw HTML.

- Residential proxy rotation ensures that scraping activity is distributed across many IP addresses, reducing the risk of rate-limiting or IP bans during large-scale collection.
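The first strategy in the list above, static HTML scraping, can be sketched with Python's standard library alone. This is a minimal illustration rather than a production scraper: it uses the built-in `html.parser` module as a stand-in for BeautifulSoup, parses an inline HTML sample instead of fetching a page with Requests, and the `listing-card`, `price`, and `address` class names are hypothetical.

```python
from html.parser import HTMLParser

# Hypothetical markup: real portals use their own class names and structure.
SAMPLE_HTML = """
<div class="listing-card">
  <span class="price">$1,850</span>
  <span class="address">12 Oak St, Austin, TX</span>
</div>
<div class="listing-card">
  <span class="price">$2,400</span>
  <span class="address">98 Elm Ave, Austin, TX</span>
</div>
"""


class ListingParser(HTMLParser):
    """Collects price/address text from elements inside listing-card blocks."""

    FIELDS = {"price", "address"}

    def __init__(self):
        super().__init__()
        self.listings = []   # one dict per listing card, in document order
        self._field = None   # field whose text node we are currently inside

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "listing-card" in classes:
            # A new card starts: open an empty record for it.
            self.listings.append({"price": None, "address": None})
        elif self.listings:
            # Inside a card: remember which field (if any) this tag holds.
            self._field = next((c for c in classes if c in self.FIELDS), None)

    def handle_data(self, data):
        if self._field and data.strip():
            self.listings[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        self._field = None


def parse_listings(html):
    parser = ListingParser()
    parser.feed(html)
    return parser.listings


listings = parse_listings(SAMPLE_HTML)
```

In a real project the HTML would come from an HTTP response, and a library such as BeautifulSoup would replace the hand-written parser. The overall shape stays the same: locate each listing container, then pull the fields out of it.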

```python
# Example: Scraping rental listings with Python + Playwright
import asyncio

from playwright.async_api import async_playwright


async def scrape_rentals(city_slug):
    async with async_playwright() as p:
        browser = await p.chromium.launch(headless=True)
        page = await browser.new_page()
        await page.goto(f"https://example-rental-site.com/{city_slug}")
        listings = await page.query_selector_all(".listing-card")
        data = []
        for item in listings:
            price = await item.query_selector(".price")
            address = await item.query_selector(".address")
            data.append({
                "price": await price.inner_text() if price else None,
                "address": await address.inner_text() if address else None,
            })
        await browser.close()
        return data
```

Real Estate APIs for Web Scraping

As demand for property data has grown, a thriving ecosystem of real estate data APIs has emerged. These APIs serve as intermediaries: they either aggregate data from multiple platforms or expose structured access to a specific source's data, significantly reducing the engineering overhead of direct scraping.

Popular Real Estate APIs in the US Market

- Zillow Bridge API — provides access to Zillow's Zestimate valuations and listing data for authorized partners.

- Rentcast API — delivers rental price estimates, market comparables, and occupancy data by ZIP code or city.

- AttomData API — aggregates property details, transactions, valuations, tax records, and neighborhood data from thousands of US counties.

- RESO Web API: the Real Estate Standards Organization's standardized API for MLS data access, used by real estate professionals and proptech companies.

- RapidAPI Real Estate endpoints — a marketplace of third-party scraping and aggregation APIs for Zillow, Realtor.com, and others.

When using Real Estate APIs for web scraping workflows, data engineers typically combine structured API responses with supplementary scraped data to fill coverage gaps and enrich records with additional signals not available through official channels.
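One way to picture this combination step is a small merge routine that treats the API response as the primary record and uses scraped values only to fill fields the API left empty. The sketch below is illustrative: the field names, the sample records, and the crude address-based join key are all assumptions, and real pipelines usually need a proper address normalizer or a parcel identifier.

```python
import re


def address_key(address):
    """Normalize an address into a crude join key (lowercase, alphanumerics only)."""
    return re.sub(r"[^a-z0-9]+", " ", address.lower()).strip()


def merge_records(api_records, scraped_records):
    """Prefer API values; use scraped values only where the API field is missing."""
    merged = {address_key(r["address"]): dict(r) for r in api_records}
    for rec in scraped_records:
        target = merged.setdefault(address_key(rec["address"]), {})
        for field, value in rec.items():
            if target.get(field) is None:
                target[field] = value
    return list(merged.values())


# Made-up sample data for illustration only.
api_records = [
    {"address": "12 Oak St, Austin, TX", "rent": 1850, "days_on_market": None},
]
scraped_records = [
    {"address": "12 OAK ST, AUSTIN TX", "rent": 1900, "days_on_market": 14},
    {"address": "98 Elm Ave, Austin, TX", "rent": 2400, "days_on_market": 3},
]

combined = merge_records(api_records, scraped_records)
```

Here the first scraped record only contributes `days_on_market`, because the API already supplied the rent, while the second scraped record is added whole because the API had no entry for that address.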

Building a USA Housing Market Data Collection Pipeline

A production-grade USA housing market data collection system is rarely a single script. It is a pipeline — a sequence of stages that ingests raw data, validates it, transforms it into a consistent schema, stores it, and makes it queryable for downstream analysis.

A typical architecture for scraping real estate data for US market analysis includes these components:

- Scheduler: A job scheduler (like Apache Airflow or cron-based triggers) that runs scraping tasks on a defined cadence — daily for price updates, weekly for new listing inventories, monthly for tax record refreshes.

- Scraper layer: Individual scrapers or API clients configured per source, handling authentication, pagination, and rate limiting.

- Data validation: Schema enforcement and anomaly detection to flag missing fields, outlier prices, or duplicate records before they enter the database.

- Storage: A relational or columnar database (PostgreSQL, Snowflake, BigQuery) for structured data, with a data lake (S3 or GCS) for raw HTML snapshots and archives.

- Analytics layer: BI tools like Tableau, Metabase, or Looker, or programmatic analysis in Python (Pandas, GeoPandas for spatial analysis), connected to the structured database for reporting.
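The data validation stage in the list above can be sketched as a single function that separates clean records from rejected ones. This is a simplified illustration under stated assumptions: the required fields, the sample records, and the outlier rule (a price more than five times above or below the batch median) are hypothetical choices, not a recommended standard.

```python
from statistics import median

REQUIRED_FIELDS = ("listing_id", "price", "zip_code")  # hypothetical schema


def validate(records, max_ratio=5.0):
    """Split records into (clean, rejected) using three simple checks."""
    complete = [
        r for r in records
        if all(r.get(f) is not None for f in REQUIRED_FIELDS)
    ]
    # Median price of complete records serves as the reference for outliers.
    typical = median(r["price"] for r in complete) if complete else 0

    clean, rejected, seen = [], [], set()
    for rec in records:
        if any(rec.get(f) is None for f in REQUIRED_FIELDS):
            rejected.append((rec, "missing field"))
        elif rec["listing_id"] in seen:
            rejected.append((rec, "duplicate"))
        elif typical and not (typical / max_ratio <= rec["price"] <= typical * max_ratio):
            rejected.append((rec, "outlier price"))
        else:
            seen.add(rec["listing_id"])
            clean.append(rec)
    return clean, rejected


# Made-up sample batch for illustration only.
batch = [
    {"listing_id": "a1", "price": 300000, "zip_code": "78701"},
    {"listing_id": "a2", "price": 320000, "zip_code": "78702"},
    {"listing_id": "a1", "price": 300000, "zip_code": "78701"},  # duplicate
    {"listing_id": "a3", "price": None, "zip_code": "78703"},    # missing price
    {"listing_id": "a4", "price": 30, "zip_code": "78704"},      # implausible
]

clean, rejected = validate(batch)
```

Keeping the rejected records together with a reason, rather than silently dropping them, makes it easier to spot a scraper that has started returning malformed data after a site redesign.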

City-Wise Rental Price Analysis Through API Scraping

One of the highest-value applications of real estate scraping and API techniques is city-wise rental price tracking. By querying rental APIs and scraping rental platforms across dozens of US metros simultaneously, analysts can generate a near real-time view of rent dynamics that no single report or dataset can match.

For example, combining city-wise rental price API scraping from Apartments.com, Zumper, and Rentcast across markets like New York, Los Angeles, Chicago, Miami, Denver, and Austin allows a researcher to identify:

- Which markets are experiencing the fastest rent growth quarter-over-quarter

- How rental concessions (free months, reduced deposits) correlate with rising vacancy rates

- How short-term rental pricing on Airbnb diverges from or converges with traditional 12-month lease prices in the same neighborhood

- How supply pipelines (new apartment completions) are suppressing or elevating rents in specific submarkets
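The first question in the list above, quarter-over-quarter rent growth, reduces to a small calculation once observations are collected. The sketch below is one possible approach, assuming each observation is a `(city, quarter, rent)` tuple with quarters labeled like `"2024Q1"`. The figures are made up for illustration and do not describe any real market.

```python
from collections import defaultdict
from statistics import median


def qoq_rent_growth(observations):
    """Return {city: fractional change in median rent between the last two quarters}."""
    by_city = defaultdict(lambda: defaultdict(list))
    for city, quarter, rent in observations:
        by_city[city][quarter].append(rent)

    growth = {}
    for city, quarters in by_city.items():
        ordered = sorted(quarters)  # labels like "2024Q1" sort correctly as text
        if len(ordered) < 2:
            continue  # not enough history to compute a change
        prev, curr = (median(quarters[q]) for q in ordered[-2:])
        growth[city] = (curr - prev) / prev
    return growth


# Made-up observations for illustration only.
observations = [
    ("Austin", "2024Q1", 1800), ("Austin", "2024Q1", 2000),
    ("Austin", "2024Q2", 1900), ("Austin", "2024Q2", 2090),
    ("Denver", "2024Q1", 2000), ("Denver", "2024Q2", 1900),
    ("Miami", "2024Q2", 3000),
]

growth = qoq_rent_growth(observations)
```

With this sample, Austin's median rent moves from 1900 to 1995 (a 5% rise), Denver's falls by 5%, and Miami is skipped because it has only one quarter of data. Using the median rather than the mean keeps a handful of luxury listings from distorting the figure.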

This kind of cross-market, multi-source rental intelligence is precisely what institutional investors, hedge funds, and real estate private equity firms require — and web scraping is often the only practical way to assemble it at the required level of granularity and freshness.

Legal and Ethical Considerations

No discussion of web scraping for real estate data is complete without addressing the legal and ethical landscape. In the United States, the legality of web scraping has been significantly shaped by the Ninth Circuit's ruling in hiQ Labs v. LinkedIn, which held that scraping publicly accessible data likely does not violate the Computer Fraud and Abuse Act (CFAA). However, this does not mean scraping is without boundaries: the ruling addressed the CFAA only, and other claims, such as breach of a site's Terms of Service, can still apply.

Responsible practitioners follow these core principles: respect robots.txt directives as a starting point (though they are not legally binding), avoid scraping data behind authentication walls without explicit permission, never scrape personally identifiable information (PII) in violation of privacy laws, honor reasonable rate limits to avoid service disruption, and review each platform's Terms of Service before commencing any data collection program.
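Two of these principles, respecting robots.txt and honoring rate limits, are straightforward to encode. The sketch below uses Python's standard `urllib.robotparser` module. The robots.txt content, the `my-research-bot` user agent, and the `Throttle` helper are hypothetical, and passing such a check is a technical courtesy, not a substitute for reviewing a site's Terms of Service.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice it is fetched from the target site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 2
"""

USER_AGENT = "my-research-bot"  # hypothetical user agent string


def build_policy(robots_text):
    """Parse robots.txt text into a policy object that can be queried per URL."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


class Throttle:
    """Enforces a minimum delay between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None

    def wait(self):
        if self._last is not None:
            remaining = self.min_interval - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
        self._last = self._clock()


policy = build_policy(ROBOTS_TXT)
delay = policy.crawl_delay(USER_AGENT) or 1  # fall back to 1 second if unspecified
throttle = Throttle(delay)

ok = policy.can_fetch(USER_AGENT, "https://example.com/listings/austin")
blocked = policy.can_fetch(USER_AGENT, "https://example.com/account/settings")
```

A scraper would call `throttle.wait()` before each request and skip any URL for which `can_fetch` returns `False`.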

Using a professional real estate dataset provider or an official real estate API is often the most compliant and sustainable path for production use cases at scale.

Conclusion: The Future of Real Estate Data Intelligence

The ability to extract housing market data in the United States at scale is no longer a niche capability for quant funds and proptech startups — it is becoming a baseline requirement for any serious real estate analyst, investor, or researcher. The combination of web scraping techniques, API integration, and structured data pipelines makes it possible to monitor thousands of markets simultaneously, track price trends as they emerge, and build proprietary real estate datasets that offer genuine competitive advantage.

As the real estate data landscape matures, purpose-built API providers are increasingly the preferred solution for teams that want comprehensive, clean, and compliant data without building scraping infrastructure from scratch. Among the most capable platforms available today is Real Data API, a dedicated real estate data service that provides structured access to property listings, rental prices, historical transaction records, and city-level market metrics across the United States. Its endpoints are purpose-built for the kind of systematic, high-volume data collection that powers serious market analysis, making it an excellent foundation for any workflow that combines web scraping with real estate data APIs.

Real Data API — Built for Real Estate Intelligence

Real Data API offers comprehensive endpoints for property listings, city-wise rental prices, historical sales, and market trends across US cities and ZIP codes. Ideal for investors, analysts, and developers building data-driven real estate applications, valuation models, or market research tools.

Whether you are scraping property listings and pricing data in the USA manually, integrating multiple third-party APIs, or migrating to a managed real estate dataset service like Real Data API, the core principle remains the same: the analyst with the best, freshest, and most granular data wins. In the US housing market, that advantage begins with knowing exactly how to collect, structure, and query the data that everyone else is looking at — but failing to fully use.

This article explores data collection methodologies for informational and analytical purposes. Always review the Terms of Service of any platform before scraping and consult legal counsel for compliance guidance.