Introduction

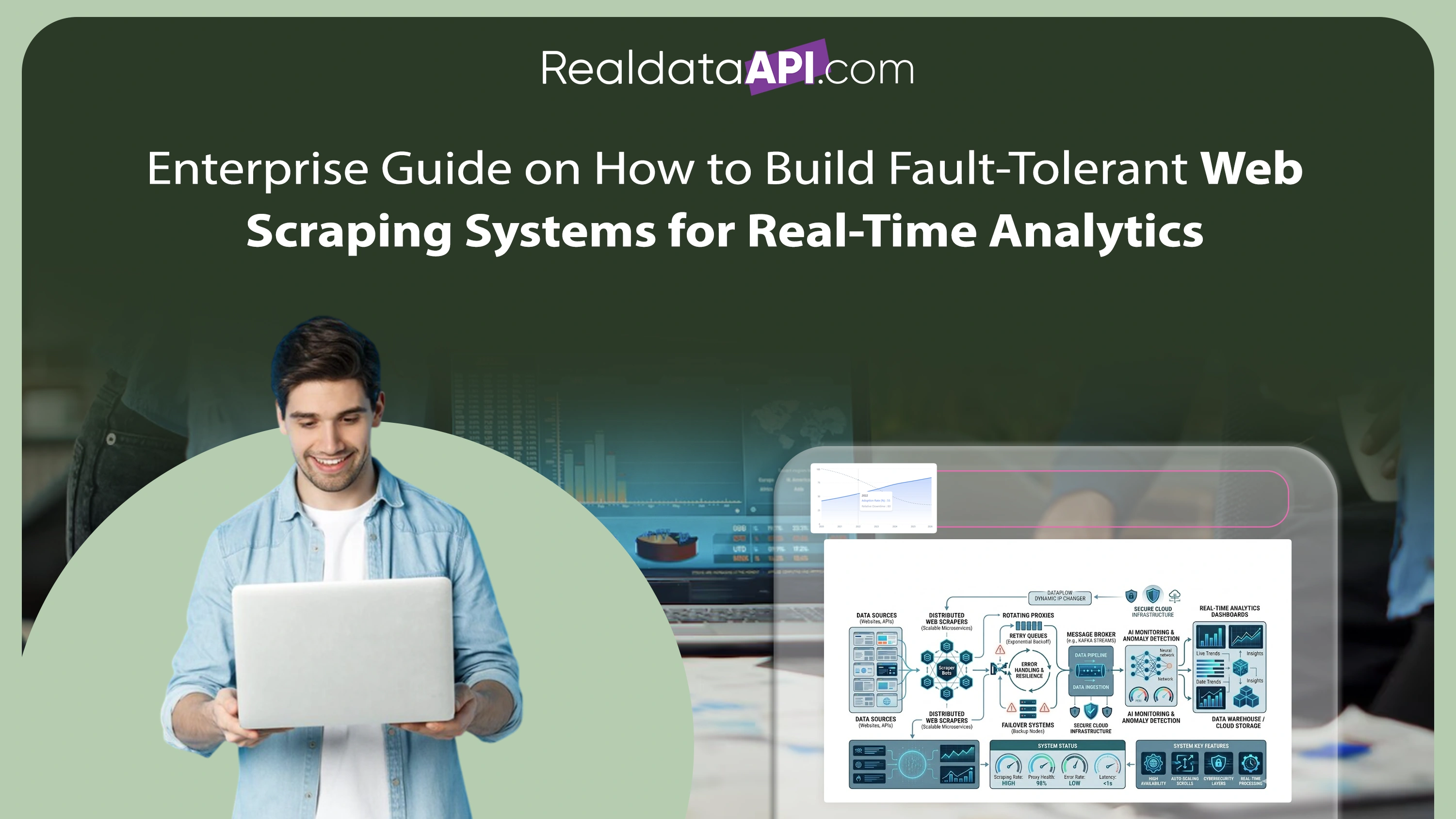

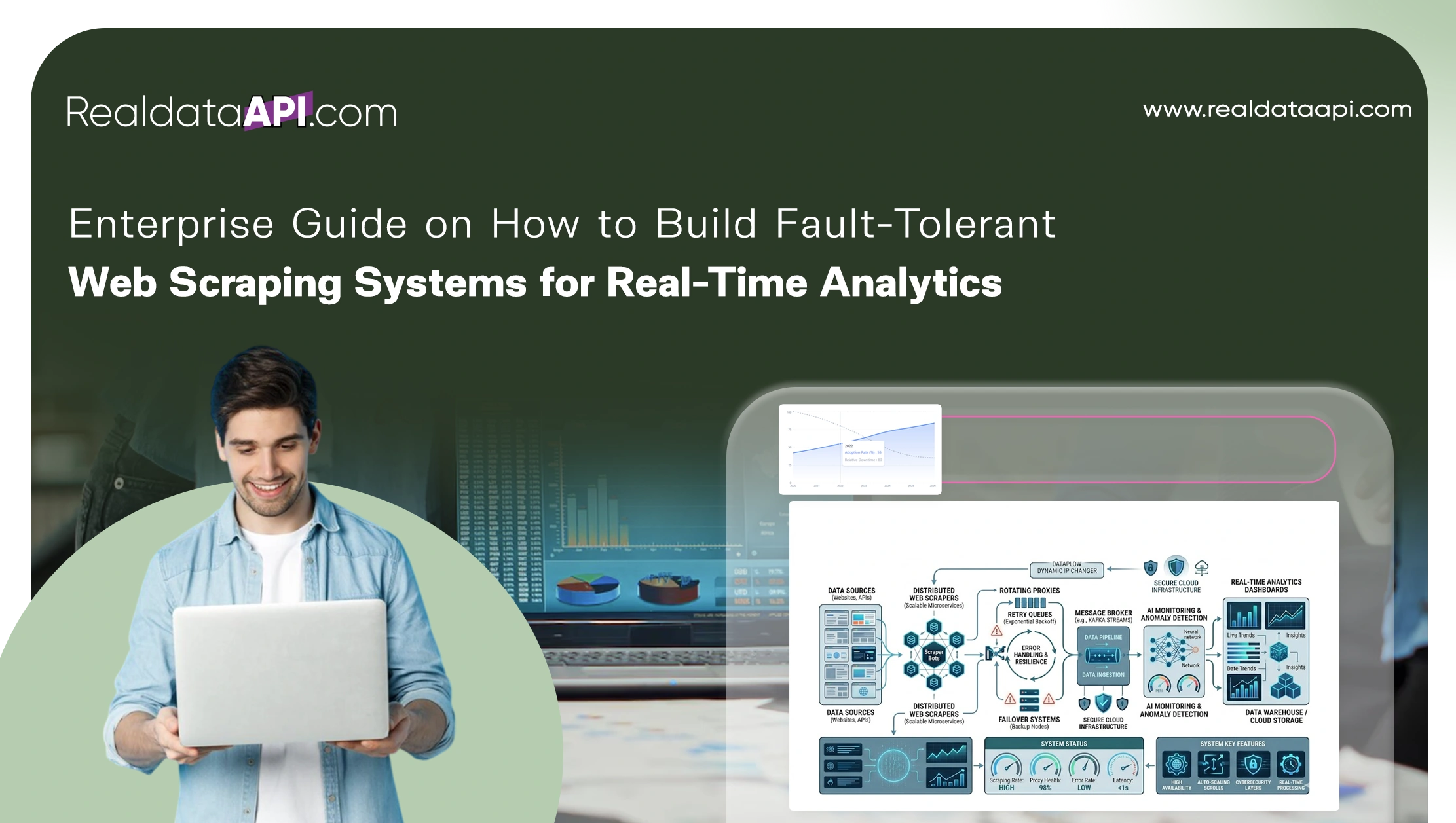

Modern enterprises depend heavily on real-time data to drive competitive intelligence, pricing optimization, market analysis, and operational decision-making. However, large-scale web scraping systems often face challenges such as website downtime, CAPTCHA restrictions, IP bans, server failures, dynamic content rendering, and unstable network conditions. These challenges make reliability and fault tolerance critical components of enterprise-grade data extraction systems.

Organizations seeking long-term scalability are increasingly learning how to build fault-tolerant web scraping systems capable of maintaining uninterrupted operations even during failures and infrastructure disruptions. At the same time, the evolution of the Web Scraping API has enabled enterprises to automate resilient extraction workflows through distributed cloud infrastructure, intelligent retry systems, and real-time monitoring capabilities.

Between 2020 and 2026, enterprise adoption of fault-tolerant data systems increased significantly, with more than 80% of large organizations integrating automated recovery and monitoring frameworks into their scraping operations. Businesses implementing resilient architectures reported up to 65% reduction in scraping downtime, 50% improvement in extraction consistency, and substantial improvements in analytics reliability.

This guide explores the technologies, methodologies, and infrastructure strategies enterprises use to design scalable, fault-tolerant scraping systems that support real-time analytics across industries.

Creating Stable Foundations for Large-Scale Extraction

Enterprise web scraping systems require stable and scalable data pipelines capable of processing millions of requests efficiently without service interruptions. Traditional monolithic scraping scripts are often unable to handle infrastructure failures or sudden traffic spikes.

| Year | Enterprises Using Automated Pipelines | Downtime Reduction Achieved |

|---|---|---|

| 2020 | 36% | 18% |

| 2022 | 52% | 32% |

| 2024 | 69% | 48% |

| 2026 | 84% | 65% |

Organizations aiming to build reliable data pipelines for web scraping projects increasingly adopt cloud-native architectures using distributed services such as Kubernetes, Apache Kafka, AWS Lambda, and Google Cloud Pub/Sub.

Reliable data pipelines support:

- Automated task distribution

- Real-time job monitoring

- Scalable request processing

- Data validation workflows

- High-availability infrastructure

Between 2020 and 2026, enterprises using resilient pipeline architectures improved analytics processing speed by nearly 45% while significantly reducing infrastructure failures.

Strengthening System Recovery and Error Handling

Failures are inevitable in large-scale scraping operations. Websites frequently change layouts, introduce anti-bot mechanisms, or experience temporary outages. Effective recovery systems are essential for maintaining uninterrupted extraction workflows.

| Recovery Capability | 2020 | 2026 |

|---|---|---|

| Automated Retries | 42% | 92% |

| Intelligent Failover Systems | 18% | 74% |

| AI-Based Error Detection | 12% | 68% |

The ability to implement retry and fallback mechanisms in scraping has become a core component of enterprise resilience strategies.

Modern fault-tolerant systems include:

- Exponential backoff retry logic

- Alternative proxy routing

- Automated scraper switching

- Intelligent request throttling

- Backup extraction workflows

Businesses using advanced retry systems reduced failed extraction attempts by more than 55% between 2020 and 2026 while improving data reliability and uptime.

Managing Failures Across Distributed Architectures

Enterprise scraping operations often run across multiple cloud regions and distributed infrastructure environments. While distributed systems improve scalability, they also introduce additional complexity related to synchronization, monitoring, and failure management.

The challenge of handling failures in distributed scraping systems requires advanced orchestration frameworks capable of detecting and isolating infrastructure issues automatically.

| Distributed System Metric | 2020 | 2023 | 2026 |

|---|---|---|---|

| Multi-Region Deployments | 22% | 48% | 79% |

| Real-Time Failure Detection | 18% | 44% | 82% |

| Automated Recovery Workflows | 14% | 39% | 76% |

Modern distributed scraping systems use:

- Kubernetes orchestration

- Centralized monitoring dashboards

- Auto-healing infrastructure

- Distributed message queues

- Redundant processing nodes

Organizations implementing distributed recovery systems improved operational continuity by nearly 60% and reduced infrastructure-related downtime substantially.

Expanding Automation Through Managed Infrastructure

As scraping systems become increasingly complex, many enterprises choose managed service providers instead of maintaining fault-tolerant infrastructure internally.

The demand for Web Scraping Services has increased sharply due to the need for resilient, scalable, and continuously maintained extraction environments.

| Year | Global Managed Scraping Services Market |

|---|---|

| 2020 | $650M |

| 2022 | $1.0B |

| 2024 | $1.7B |

| 2026 | $2.8B |

Managed service providers offer:

- Cloud-based scraping infrastructure

- Proxy and CAPTCHA management

- Real-time system monitoring

- Distributed failover architecture

- Automatic infrastructure scaling

Enterprises outsourcing scraping operations reduced infrastructure maintenance overhead by up to 42% while improving overall data extraction reliability.

Building Scalable Crawling Ecosystems for Analytics

Large-scale analytics operations require intelligent crawling systems capable of continuously monitoring websites, marketplaces, and digital platforms without interruptions.

Enterprise Web Crawling systems powered by distributed cloud infrastructure enable organizations to process massive volumes of data while maintaining high availability.

| Crawling Capability | 2020 | 2026 |

|---|---|---|

| Concurrent Crawl Capacity | 8,000 pages/hr | 150,000 pages/hr |

| Dynamic Content Rendering | 28% | 85% |

| Real-Time Analytics Integration | 22% | 88% |

Enterprise crawling frameworks support:

- Distributed crawling nodes

- Dynamic rendering environments

- AI-based URL prioritization

- Continuous monitoring workflows

- Automated scaling systems

Between 2020 and 2026, businesses using advanced crawling ecosystems improved reporting speed by nearly 53% and enhanced competitive intelligence capabilities significantly.

Structuring Data for Reliable Business Intelligence

Fault-tolerant scraping systems must deliver structured, validated, and analytics-ready outputs despite operational failures or inconsistent source data.

The increasing use of structured Web Scraping Datasets enables organizations to integrate scraped data directly into BI dashboards, AI engines, and reporting systems.

| Dataset Capability | 2020 | 2026 |

|---|---|---|

| Automated Data Validation | 32% | 84% |

| Real-Time Dataset Delivery | 24% | 81% |

| AI-Ready Structured Outputs | 18% | 76% |

Modern dataset management systems support:

- Duplicate elimination

- Schema validation

- Metadata enrichment

- Automated anomaly detection

- Real-time analytics synchronization

Businesses leveraging structured datasets improved reporting accuracy by over 48% while significantly reducing manual data correction efforts.

Why Choose Real Data API?

Modern enterprises require intelligent, resilient, and scalable systems capable of supporting uninterrupted real-time analytics operations.

Real Data API helps organizations understand how to build fault-tolerant web scraping systems through enterprise-grade infrastructure, automated recovery systems, and distributed cloud-native architectures.

Key capabilities include:

- High-availability scraping infrastructure

- Intelligent retry and failover systems

- Distributed crawling environments

- Real-time monitoring dashboards

- AI-powered extraction workflows

- Structured analytics-ready data delivery

Real Data API empowers enterprises to reduce downtime, improve extraction consistency, and scale real-time analytics operations with confidence.

Conclusion

The future of enterprise analytics depends heavily on resilient data extraction infrastructure capable of operating continuously despite failures, traffic spikes, or changing website architectures. Organizations adopting fault-tolerant scraping systems gain significant advantages in scalability, operational stability, and reporting accuracy.

By learning how to build fault-tolerant web scraping systems, enterprises can ensure uninterrupted access to real-time intelligence while minimizing downtime and infrastructure risks.

From automated recovery frameworks to distributed crawling ecosystems and AI-powered monitoring systems, modern fault-tolerant architectures are transforming enterprise web scraping operations across industries. Businesses implementing these technologies achieve faster analytics delivery, improved data reliability, and stronger competitive positioning.