Introduction

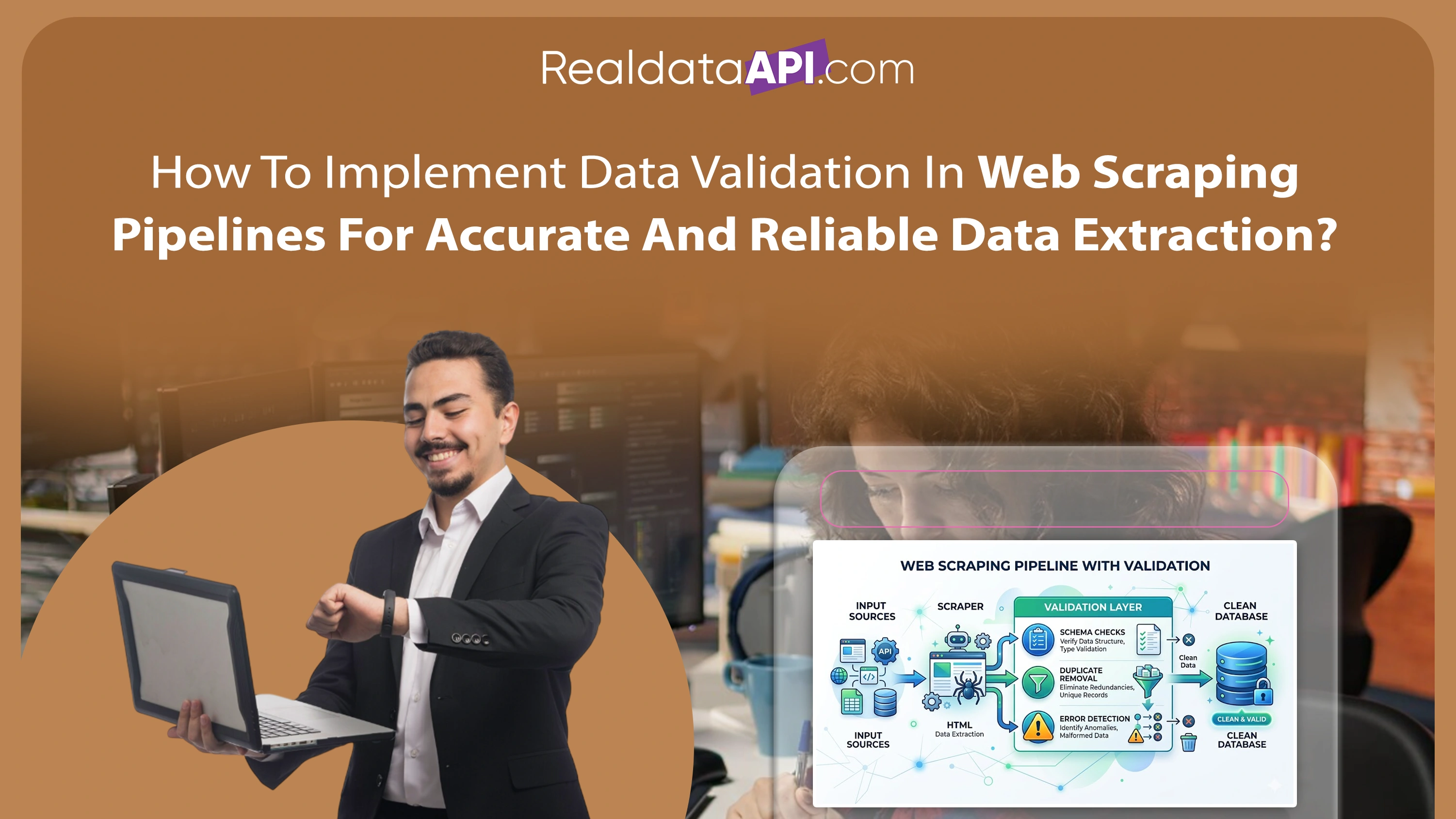

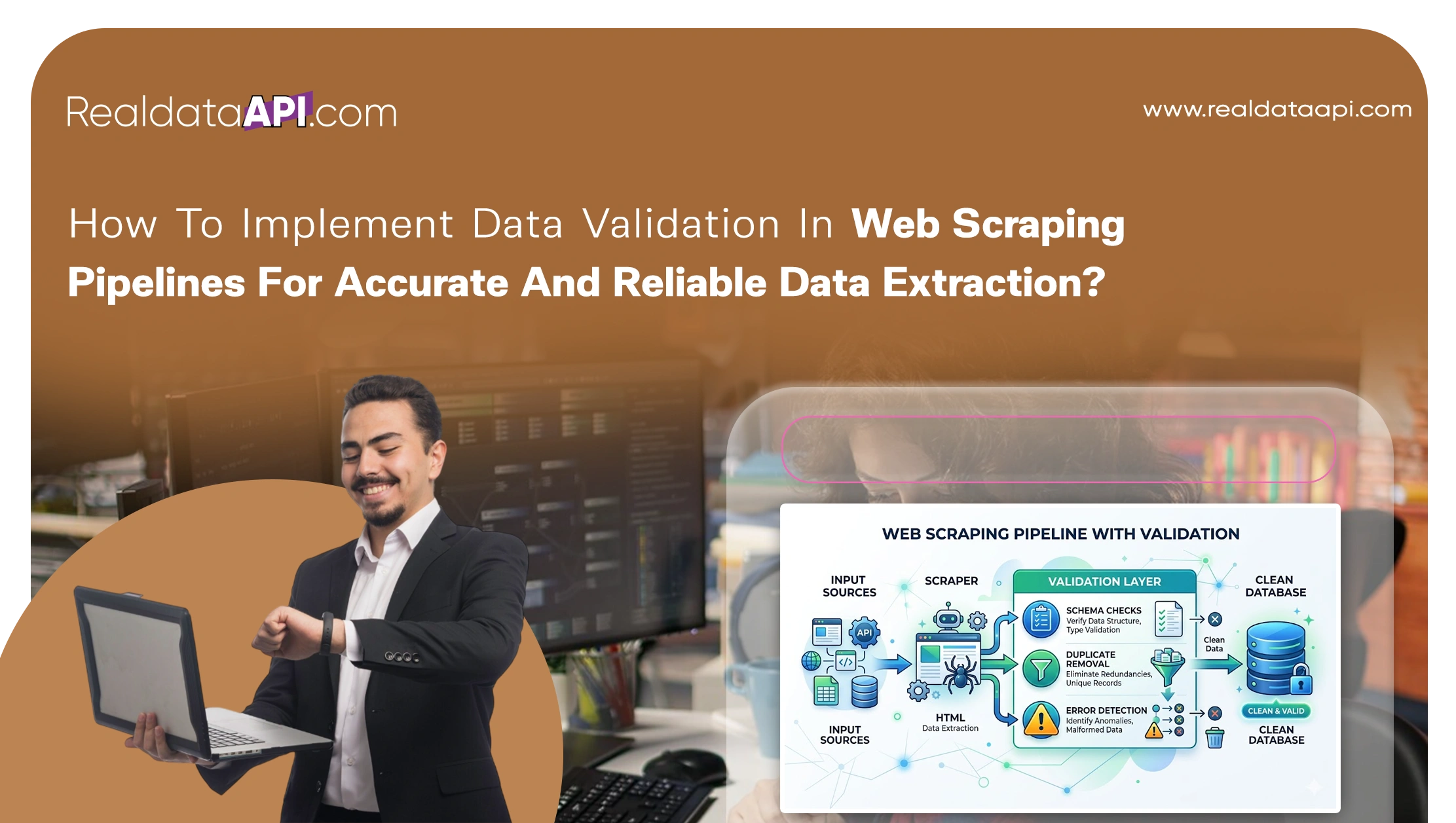

In today's data-driven ecosystem, organizations rely heavily on automated extraction processes to gather insights from the web. However, scraping alone is not enough—ensuring accuracy, consistency, and completeness is equally critical. This is why understanding how to implement data validation in web scraping pipelines is essential for businesses aiming to build reliable data systems.

With the growing adoption of automation tools like Web Scraping API, companies can extract large volumes of data efficiently. Yet, without proper validation, these datasets may contain duplicates, missing values, incorrect formats, or outdated information. Poor data quality can lead to flawed analytics, inaccurate forecasting, and poor decision-making.

Implementing robust validation mechanisms within scraping pipelines helps eliminate errors at every stage—from data collection to transformation and storage. This blog explores proven strategies, techniques, and frameworks that ensure high-quality data extraction, enabling businesses to unlock accurate insights and maintain a competitive edge in an increasingly data-centric world.

Strengthening Data Integrity Through Structured Validation Methods

One of the most effective ways to ensure reliable data extraction is by applying best data validation techniques for scraped datasets. These techniques focus on verifying data accuracy, completeness, and format consistency immediately after extraction.

Between 2020 and 2026, organizations implementing validation frameworks saw a 52% reduction in data errors. Without validation, nearly 30% of scraped datasets contained inconsistencies that impacted analytics outcomes.

Key Stats (2020–2026):

| Year | Data Error Rate (%) | Validation Adoption (%) |

|---|---|---|

| 2020 | 30% | 40% |

| 2022 | 24% | 55% |

| 2024 | 18% | 68% |

| 2026 | 12% | 75% |

Key validation methods include:

- Schema validation for structured consistency

- Data type checks (numeric, string, date formats)

- Duplicate detection and removal

- Range validation for numerical fields

By integrating these techniques into pipelines, businesses can ensure that only high-quality data moves forward for analysis.

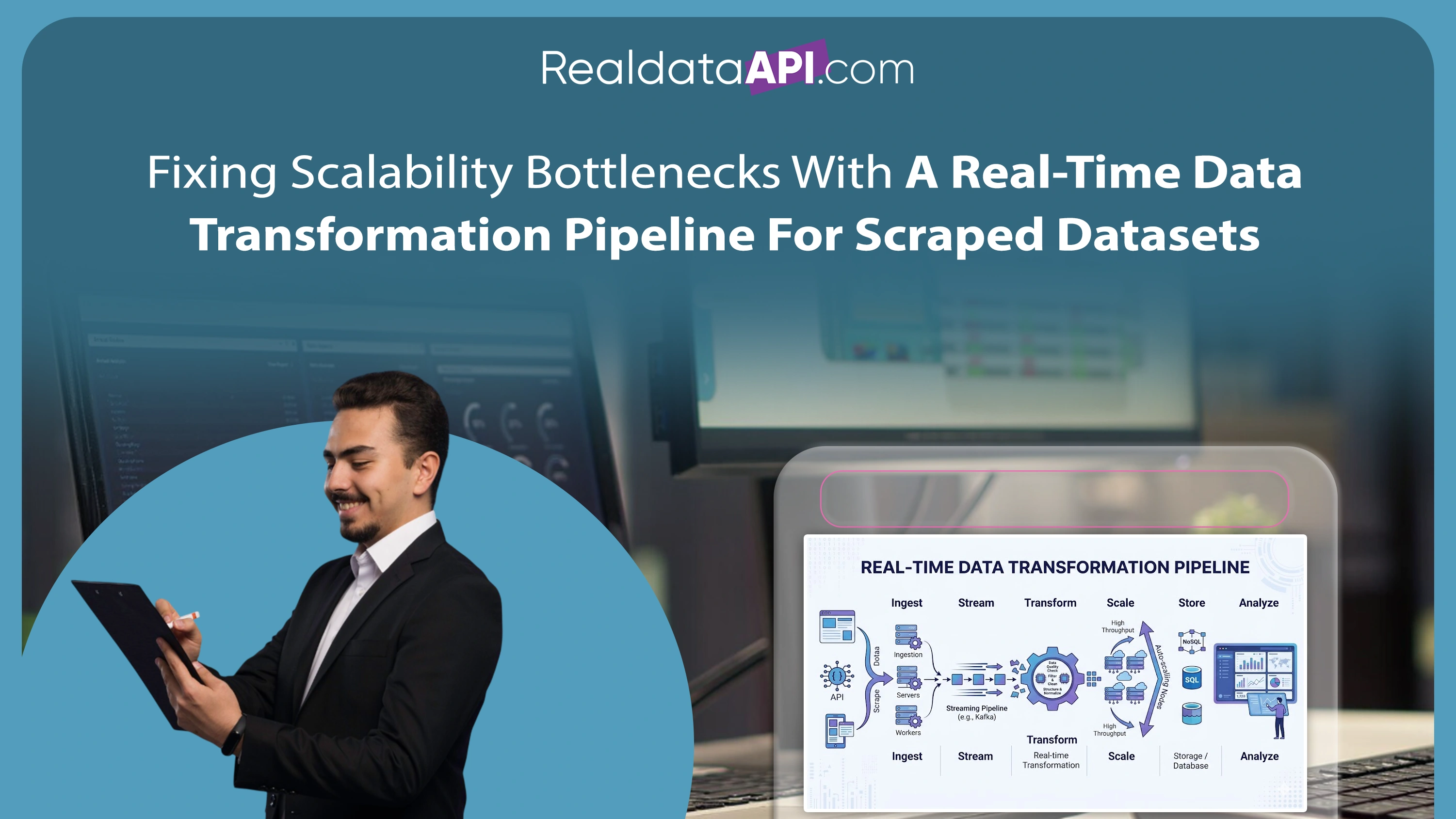

Scaling Validation for High-Volume Data Pipelines

As data volumes grow, maintaining quality becomes increasingly challenging. To ensure data quality in large-scale scraping projects, organizations must adopt scalable validation frameworks that can handle millions of records efficiently.

From 2020 to 2026, data volumes in scraping projects increased by over 70%, making manual validation impractical. Automated validation systems reduced processing time by 60% while improving accuracy.

Key Stats (2020–2026):

| Year | Data Volume Growth | Validation Efficiency (%) |

|---|---|---|

| 2020 | 35% | 50% |

| 2022 | 48% | 60% |

| 2024 | 62% | 70% |

| 2026 | 70% | 82% |

Best practices include:

- Distributed processing for parallel validation

- Rule-based validation engines

- Integration with cloud-based data pipelines

- Automated alerts for anomalies

These approaches ensure that validation processes remain efficient and scalable, even as data complexity increases.

Enabling Instant Quality Checks for Live Data Streams

Modern applications require immediate validation of incoming data streams. Using real-time data validation techniques using web scraping, organizations can detect and correct errors instantly.

Between 2020 and 2026, real-time data validation adoption grew by 65%, driven by demand for instant analytics and decision-making. Without real-time checks, latency issues affected up to 25% of data pipelines.

Key Stats (2020–2026):

| Year | Real-Time Validation Adoption | Latency Issues (%) |

|---|---|---|

| 2020 | 30% | 25% |

| 2022 | 45% | 20% |

| 2024 | 58% | 15% |

| 2026 | 65% | 10% |

Core techniques include:

- Streaming validation frameworks

- Event-driven data processing

- Real-time anomaly detection

- Automated correction mechanisms

These strategies ensure that data is validated as it is collected, reducing delays and improving reliability.

Ensuring Accuracy in E-commerce Data Extraction

E-commerce platforms rely heavily on accurate product data for pricing, inventory, and analytics. By Validating scraped ecommerce data step by step, businesses can ensure that extracted data meets quality standards.

From 2020 to 2026, e-commerce data errors decreased by 48% due to improved validation techniques. However, inconsistent product listings still affected nearly 20% of datasets.

Key Stats (2020–2026):

| Year | Data Accuracy (%) | Error Reduction (%) |

|---|---|---|

| 2020 | 60% | 20% |

| 2022 | 68% | 30% |

| 2024 | 75% | 40% |

| 2026 | 82% | 48% |

Validation steps include:

- Verifying product attributes (price, SKU, availability)

- Checking consistency across multiple sources

- Standardizing units and formats

- Identifying missing or duplicate entries

This structured approach ensures accurate and reliable e-commerce datasets for analytics and decision-making.

Leveraging Professional Data Extraction Solutions

Many organizations turn to Web Scraping Services to manage complex data extraction and validation processes. These services provide expertise, infrastructure, and automation tools that ensure high-quality data output.

Between 2020 and 2026, outsourcing scraping services increased by 55%, as businesses sought to reduce operational complexity and improve efficiency.

Key Stats (2020–2026):

| Year | Service Adoption (%) | Cost Efficiency (%) |

|---|---|---|

| 2020 | 35% | 45% |

| 2022 | 42% | 55% |

| 2024 | 50% | 65% |

| 2026 | 55% | 72% |

Advantages include:

- Access to advanced validation tools

- Reduced operational overhead

- Faster data processing

- Improved accuracy and consistency

By leveraging professional services, businesses can focus on analytics while ensuring data quality.

Building Robust Systems for Enterprise-Level Data Extraction

Large organizations require advanced solutions for handling complex data pipelines. With Enterprise Web Crawling, businesses can implement comprehensive validation frameworks that support large-scale operations.

From 2020 to 2026, enterprise-level data extraction projects grew by 60%, driven by the need for competitive intelligence and market analysis. However, maintaining data quality remained a major challenge without robust validation systems.

Key Stats (2020–2026):

| Year | Enterprise Adoption (%) | Data Quality Improvement (%) |

|---|---|---|

| 2020 | 40% | 50% |

| 2022 | 48% | 60% |

| 2024 | 55% | 70% |

| 2026 | 60% | 80% |

Key features include:

- Centralized validation frameworks

- AI-driven anomaly detection

- Scalable infrastructure

- Integration with enterprise data systems

These systems ensure that data pipelines remain reliable, efficient, and scalable.

Why Choose Real Data API?

Real Data API offers advanced solutions for Web Scraping Datasets, helping businesses understand how to implement data validation in web scraping pipelines effectively. Our platform combines cutting-edge scraping technologies with robust validation frameworks to deliver accurate and reliable data.

We provide:

- Automated validation pipelines for high-quality data

- Scalable infrastructure for large datasets

- Real-time data processing and validation

- Custom solutions tailored to business needs

With Real Data API, organizations can eliminate data inconsistencies and unlock the full potential of their data-driven strategies.

Conclusion

Implementing effective validation strategies is essential for building reliable data pipelines. By understanding how to implement data validation in web scraping pipelines, businesses can ensure accuracy, consistency, and scalability in their data operations.

From schema validation to real-time anomaly detection, robust validation frameworks play a crucial role in maintaining data quality. With the right tools and strategies, organizations can transform raw data into actionable insights and drive better decision-making.

Ready to build accurate and reliable data pipelines? Get started with Real Data API today and experience the power of validated web scraping solutions!